About Roboflow

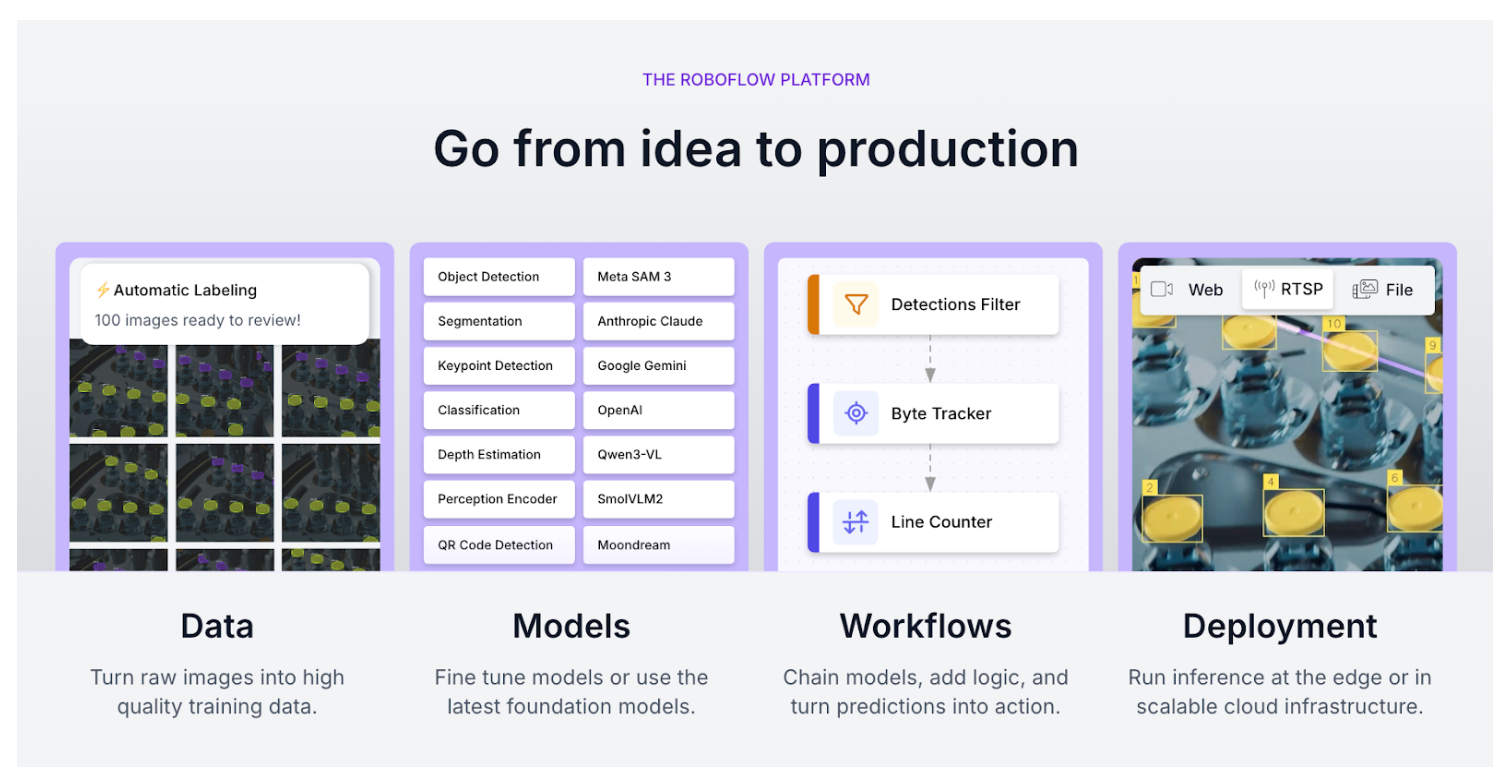

Founded in 2019, Roboflow is an end-to-end computer vision platform that provides a comprehensive suite of tools for dataset management, AI-assisted labeling, and model training, alongside scalable deployment infrastructure for cloud and edge-based visual intelligence.

What is Roboflow exactly?

Roboflow is the leading visual AI company, used by over 1 million engineers to deploy visual intelligence for video, images, and real-time streams. Roboflow’s comprehensive computer vision platform enables developers and enterprises to build, train, and deploy AI models that can see and understand the physical world. Today, Roboflow serves 55 billion predictions annually, and its users have created 1 million computer vision projects and 250,000 fine-tuned models.

Roboflow's end-to-end computer vision platform includes:

Roboflow Build

Roboflow provides a unified environment to architect computer vision solutions, from initial data ingestion to final model deployment.

- Annotate: An AI-powered labeling suite that uses foundation models like SAM 3 to create pixel-perfect training data 10x faster.

- Dataset Management: A central hub to version, query, and health-check your visual data to ensure model consistency and auditability.

- Labeling: A collaborative interface for manual and semi-automated tagging, supporting boxes, polygons, keypoints, and multimodal data.

- Train: A one-click cloud training service that automatically handles infrastructure and hyperparameter tuning for state-of-the-art architectures.

- Model Training: The backend engine that optimizes models like YOLO11 and RF-DETR specifically for your unique dataset and hardware targets.

- Custom: The ability to bring your own specialized training loops or architectures while still leveraging Roboflow’s data and deployment pipelines.

- NAS (Neural Architecture Search): An automated process that finds the optimal model structure to balance accuracy and speed for your specific use case.

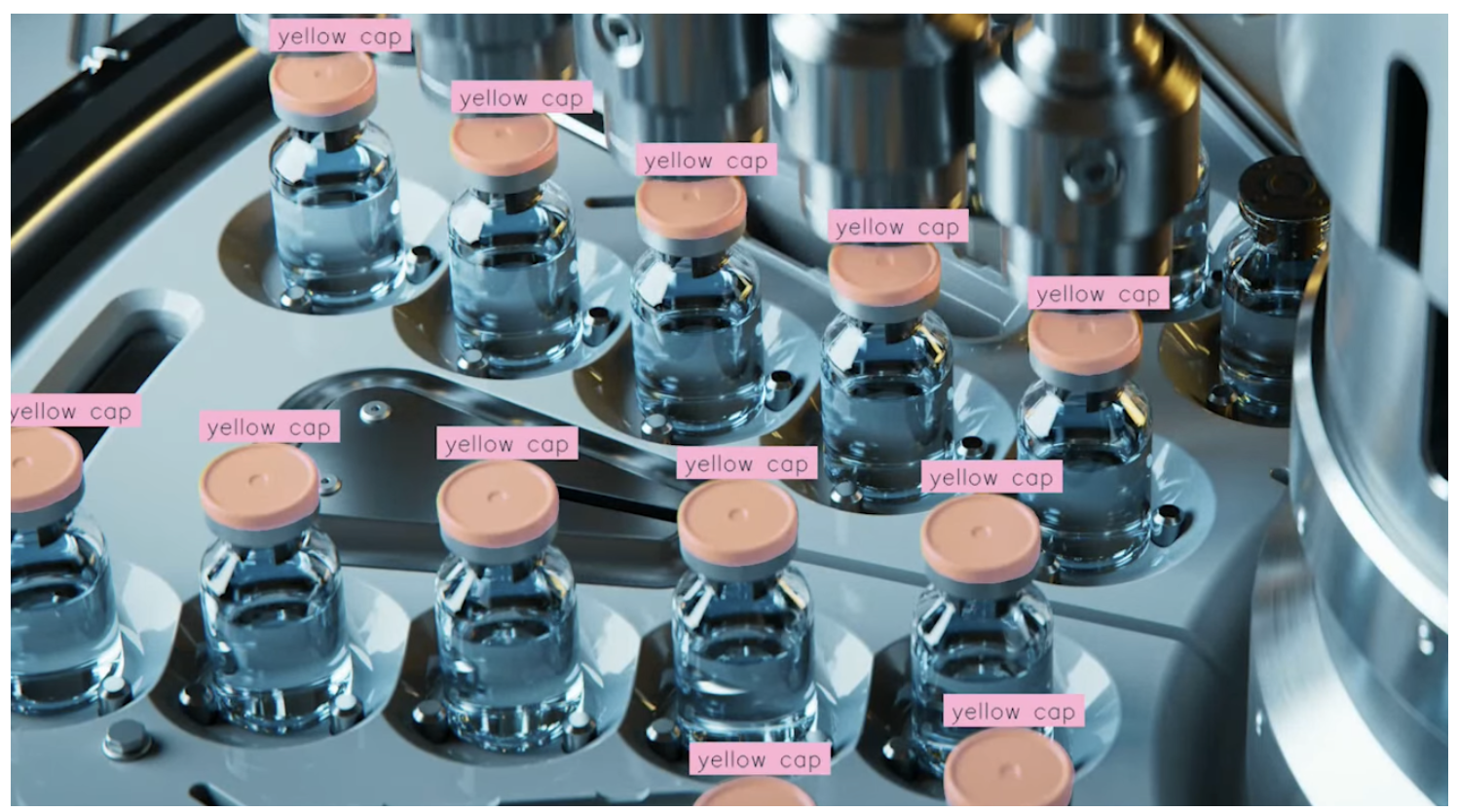

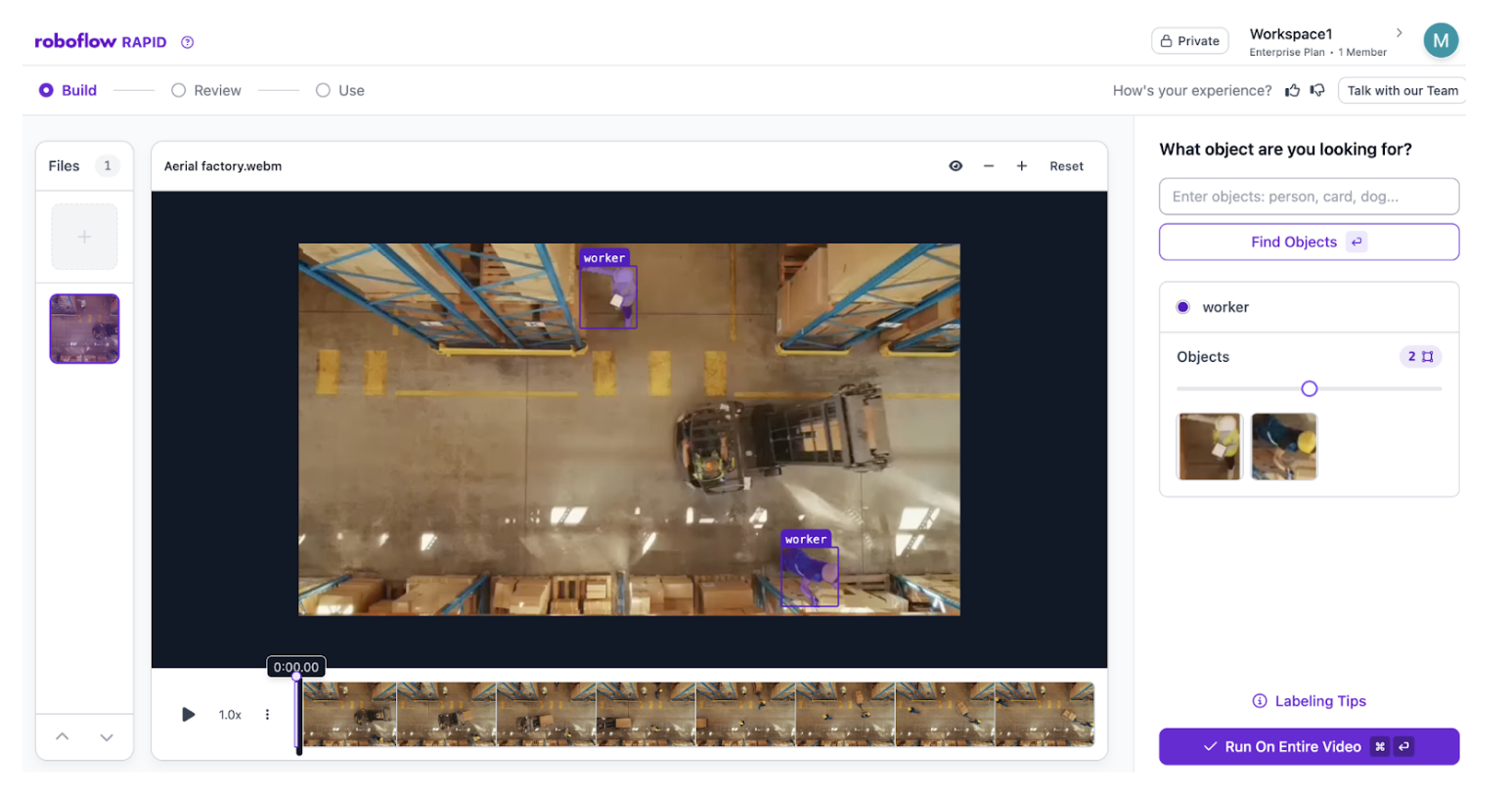

- Rapid: A zero-shot prototyping tool that uses foundation models to find and label objects instantly using only text prompts.

- Model Registry: A secure, versioned repository for all your trained model weights, making it easy to roll back or compare performance across iterations.

- Workflows: A low-code, visual canvas to build complex application logic, such as model chaining, image filtering, and third-party integrations.

- Agent: A conversational interface that allows stakeholders to build and refine vision applications using plain English instructions.

Roboflow Deploy

A flexible framework to launch your models anywhere, from a global cloud API to an offline edge device.

- Roboflow Cloud: A fully managed, vision-first infrastructure that handles GPU provisioning, scaling, and security so you don't have to.

- Serverless: An on-demand API that scales automatically to handle fluctuating traffic without the need for manual server management.

- Endpoints: Production-ready URLs that receive images or video streams and return structured AI predictions in milliseconds.

- Dedicated Deployments: Persistent, high-performance cloud resources reserved specifically for your high-uptime, mission-critical applications.

- Batch Processing: An asynchronous engine that shards massive datasets across a fleet of GPUs to analyze millions of files at peak efficiency.

- Inference: A modular execution engine that runs models locally or in the cloud with built-in hardware acceleration for TensorRT and OpenVINO.

- Self-hosted: The option to run the entire Roboflow inference stack within your own private cloud (VPC) or on-premises servers.

- SDKs: Comprehensive software kits for Python, JavaScript, and C++ that allow you to integrate Roboflow directly into your existing codebase.
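The Endpoints entry above mentions structured predictions. As an illustration of consuming such a response, the sketch below assumes a JSON payload with a `predictions` list whose boxes are given as a center `x`, `y` plus `width` and `height`. That layout, the sample values, and the `to_corner_boxes` helper are assumptions for illustration, so check the API reference for the actual schema.

```python
import json
from typing import Dict, List

# Hypothetical response body; the field names are an assumed layout.
raw_response = json.dumps({
    "predictions": [
        {"x": 100.0, "y": 50.0, "width": 40.0, "height": 20.0,
         "confidence": 0.91, "class": "forklift"},
        {"x": 10.0, "y": 10.0, "width": 4.0, "height": 4.0,
         "confidence": 0.20, "class": "forklift"},
    ]
})


def to_corner_boxes(response_text: str, min_confidence: float = 0.5) -> List[Dict]:
    """Convert center-format boxes to corner format, dropping weak predictions."""
    boxes = []
    for pred in json.loads(response_text)["predictions"]:
        if pred["confidence"] < min_confidence:
            continue
        half_w, half_h = pred["width"] / 2, pred["height"] / 2
        boxes.append({
            "label": pred["class"],
            "confidence": pred["confidence"],
            "x_min": pred["x"] - half_w,
            "y_min": pred["y"] - half_h,
            "x_max": pred["x"] + half_w,
            "y_max": pred["y"] + half_h,
        })
    return boxes


corner_boxes = to_corner_boxes(raw_response)
```

Corner-format boxes are often more convenient for drawing overlays or computing overlaps, which is why the conversion is shown here.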

Roboflow Scale

A suite of enterprise tools designed to manage global fleets, monitor model drift, and continuously improve performance.

- Vision Events: A real-time monitoring and alerting hub where model health, system logs, and business-logic triggers are tracked.

- Active Learning: An automated loop that identifies "hard examples" from production and sends them back for labeling to continuously improve model accuracy.

- Deployment Manager: A centralized dashboard to monitor device health and push "over-the-air" (OTA) updates to hundreds of remote sites.

- HMI (Human-Machine Interface): A customizable visual dashboard that allows factory-floor operators to see real-time AI detections and interact with the system.

- Devices: A management layer to register and configure edge hardware, ranging from NVIDIA Jetsons to industrial IP cameras.
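The Active Learning loop described above depends on deciding which production predictions count as "hard examples." One common heuristic is to flag predictions whose confidence falls in an uncertain middle band. The following is a minimal sketch of that idea, assuming a flat list of prediction dictionaries and an arbitrary confidence band; the field names, thresholds, and `select_hard_examples` function are hypothetical and are not Roboflow's actual sampling logic.

```python
from typing import Dict, List


def select_hard_examples(
    predictions: List[Dict],
    low: float = 0.3,
    high: float = 0.6,
) -> List[str]:
    """Return IDs of images with at least one prediction in the uncertain band.

    The [low, high) band is an illustrative assumption; tune it per project.
    """
    hard_ids: List[str] = []
    for pred in predictions:
        uncertain = low <= pred["confidence"] < high
        if uncertain and pred["image_id"] not in hard_ids:
            hard_ids.append(pred["image_id"])
    return hard_ids


sample_predictions = [
    {"image_id": "img_001", "confidence": 0.95},  # confident: skip
    {"image_id": "img_002", "confidence": 0.42},  # uncertain: queue
    {"image_id": "img_003", "confidence": 0.12},  # likely noise: skip
    {"image_id": "img_002", "confidence": 0.55},  # same image, already queued
]
queue_for_labeling = select_hard_examples(sample_predictions)
```

Images returned by such a filter would then be sent back for labeling and included in the next training run.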

Roboflow Community

A global network of over one million developers sharing insights, tutorials, and best practices for real-world computer vision.

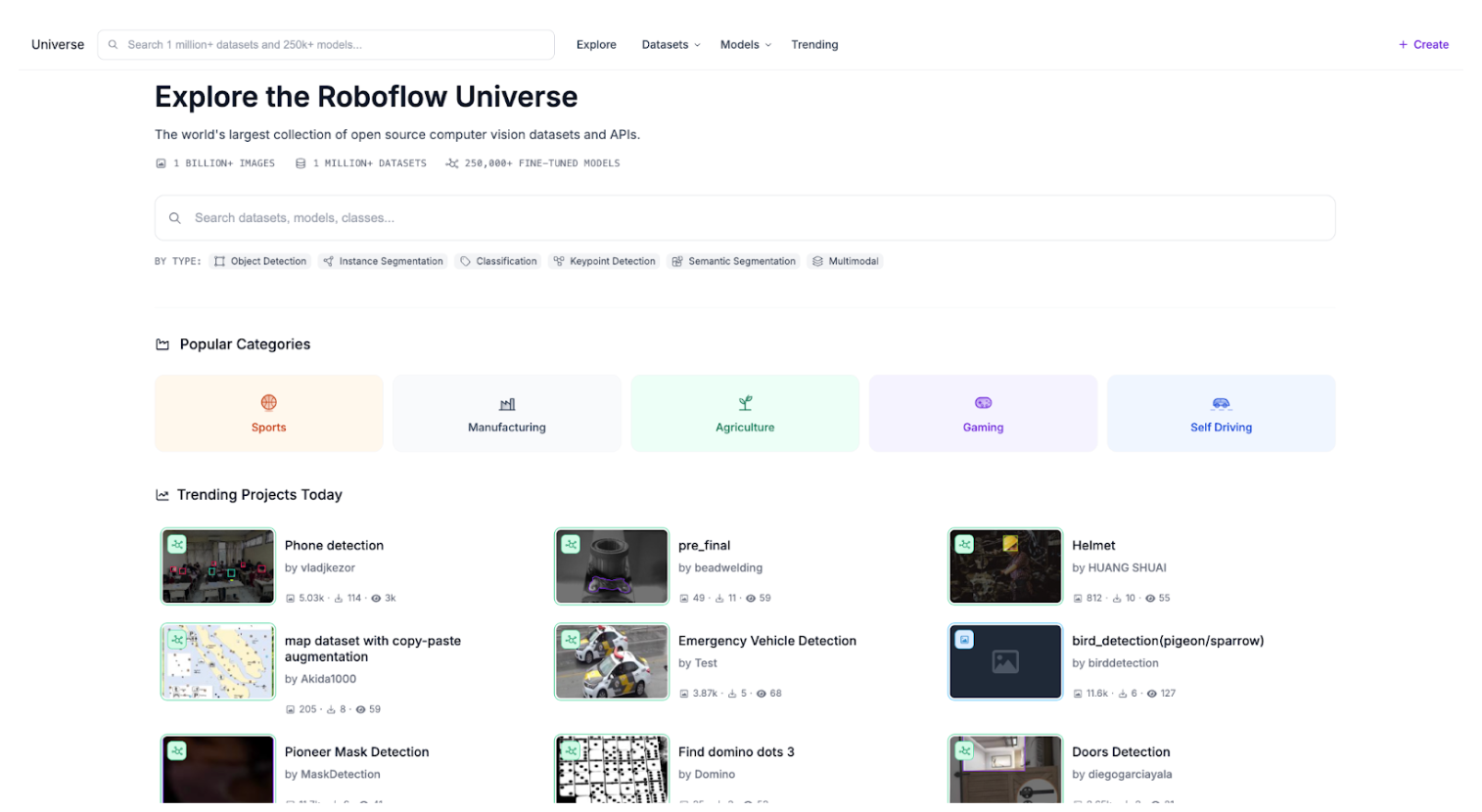

- Universe: The world’s largest open-source vision library, featuring over 1 million datasets and 250,000+ pre-trained models ready for instant use.

What are Roboflow’s open source contributions?

Roboflow is more than a vision AI platform. It is a major contributor to the global computer vision ecosystem, releasing the same tools it uses internally to the developer community. As of 2026, Roboflow has become a primary hub for open-source vision research and deployment.

Key open-source contributions include:

- Supervision: A high-level Python library designed to be the Swiss Army Knife of computer vision. It provides standardized utilities for loading datasets, filtering detections, and visualizing results. It supports everything from traditional object detection to the latest SAM 3 segmentation and DeepSeek-VL2 multimodal outputs.

- Roboflow Playground: An interactive, web-based environment where developers can test foundation models like SAM 3 or Gemini 3, as well as custom-trained models, directly in the browser. It allows for instant experimentation without writing any local code.

- Inference 1.0: An open-source, production-ready inference server that allows anyone to deploy a model with a single Docker command. It abstracts the tensor glue between different architectures, allowing you to run YOLO26, RF-DETR, or Florence-2 on any hardware without writing custom C++ or Python wrappers.

- Roboflow Notebooks: A collection of over 50 "batteries-included" tutorials on GitHub. These notebooks allow developers to fine-tune the world’s most advanced models like Qwen2.5-VL and Gemini 3 Pro on platforms like Google Colab or Kaggle with zero setup time.

- RF-DETR (Detection Transformer): Developed by Roboflow and featured at ICLR 2026, RF-DETR is a state-of-the-art, real-time object detection architecture. It is fully open-source under the Apache 2.0 license, providing a high-performance, NMS-free alternative to traditional YOLO models.

- Roboflow Sports: A specialized open-source repository for sports analytics. It includes pre-trained models and utilities for ball tracking, player detection, and field registration across various sports like soccer, basketball, and baseball.

- Roboflow Trackers: A unified library for object tracking. It provides easy-to-use implementations of popular tracking algorithms like ByteTrack and BoT-SORT, allowing developers to maintain object identities across video frames with minimal setup.

- RF100 (Roboflow 100): A massive, crowdsourced benchmark dataset consisting of 100 diverse datasets across seven distinct domains (Aerospace, Medical, Underwater, etc.). Unlike COCO or ImageNet, which focus on common objects, RF100 tests how well a model generalizes to real-world specialized tasks. It has become a gold standard for evaluating the zero-shot and fine-tuning capabilities of new architectures like RF-DETR.

- Roboflow Universe: The world’s largest open-source library of visual data. It hosts over 1 million datasets and 175,000 pre-trained models, allowing the community to share, clone, and improve upon each other’s work. In 2026, it introduced the Computer Vision Research Hub to specifically highlight datasets cited in academic papers.

- Autodistill: A framework that uses large, teacher foundation models (such as SAM or CLIP) to automatically label datasets for smaller, student models (like YOLO) that run faster on the edge.

What is Roboflow Universe?

Roboflow Universe is the world’s largest open-source community for computer vision. It is a massive, collaborative library of over 1 million datasets and 100,000 pre-trained models contributed by over one million developers. Here’s why it matters:

- Skip Starting from Scratch: Most vision projects fail because of a lack of data. On Universe, you can search for almost any object, from solar panels and surgical tools to potholes and birds, and instantly clone a labeled dataset to your own project.

- One-Click Deployment: Every model shared on Universe is available via the Roboflow Inference API. This means you can test a community member's forklift detector in your own application in seconds without writing a single line of training code.

- The Roboflow 100 Benchmark: Universe hosts the RF100, a crowdsourced benchmark of 100 diverse datasets across seven domains (Aerospace, Medical, Underwater, etc.). It serves as the industry standard for testing how well new architectures like RF-DETR generalize to real-world tasks.

- A Research Powerhouse: In 2026, Roboflow Universe is the primary source of data for academic papers and enterprise R&D. It allows teams to mesh multiple public datasets together to create robust, high-performance models that handle edge cases that a single private dataset would miss.

- Collaborative Improvement: Universe allows users to fork a dataset, add more diverse images or better labels, and share it back with the community. This collective effort accelerates the development of vision AI for everyone.

Learn more about Universe’s newest community features here.

What is Roboflow Rapid?

Roboflow Rapid is a prompt-based engine that allows you to go from an idea to a deployed computer vision model in under five minutes. It is the first zero-shot model creation tool designed to eliminate the weeks of manual labeling that typically stall AI projects.

With Rapid, simply provide a text description of what you want to find, and the system uses foundation models to build a custom detector for you instantly.

- Prompt-Based Discovery: Upload a few images or a short video and type a natural language prompt, such as "yellow hard hat" or "defective weld."

- Foundation Intelligence: Rapid leverages the world's most advanced vision-language models to identify those objects based on your description.

- Instant Fine-Tuning: As you save your project, Roboflow automatically trains a highly efficient, custom RF-DETR model in the background. This ensures your model isn't just a generic AI guesser, but is specifically tuned to your unique environment.

- Human-in-the-Loop Control: If the AI misses an object, you can use Visual Prompting. Simply draw one box around the missed item and click Find Similar Objects. Rapid will use that visual example to refine its logic and find every other instance across your dataset.

This video provides a live demonstration of Roboflow Rapid in action, showing how a user can go from a raw video file to a functional, deployed detection API in about five minutes.

What is RF-DETR?

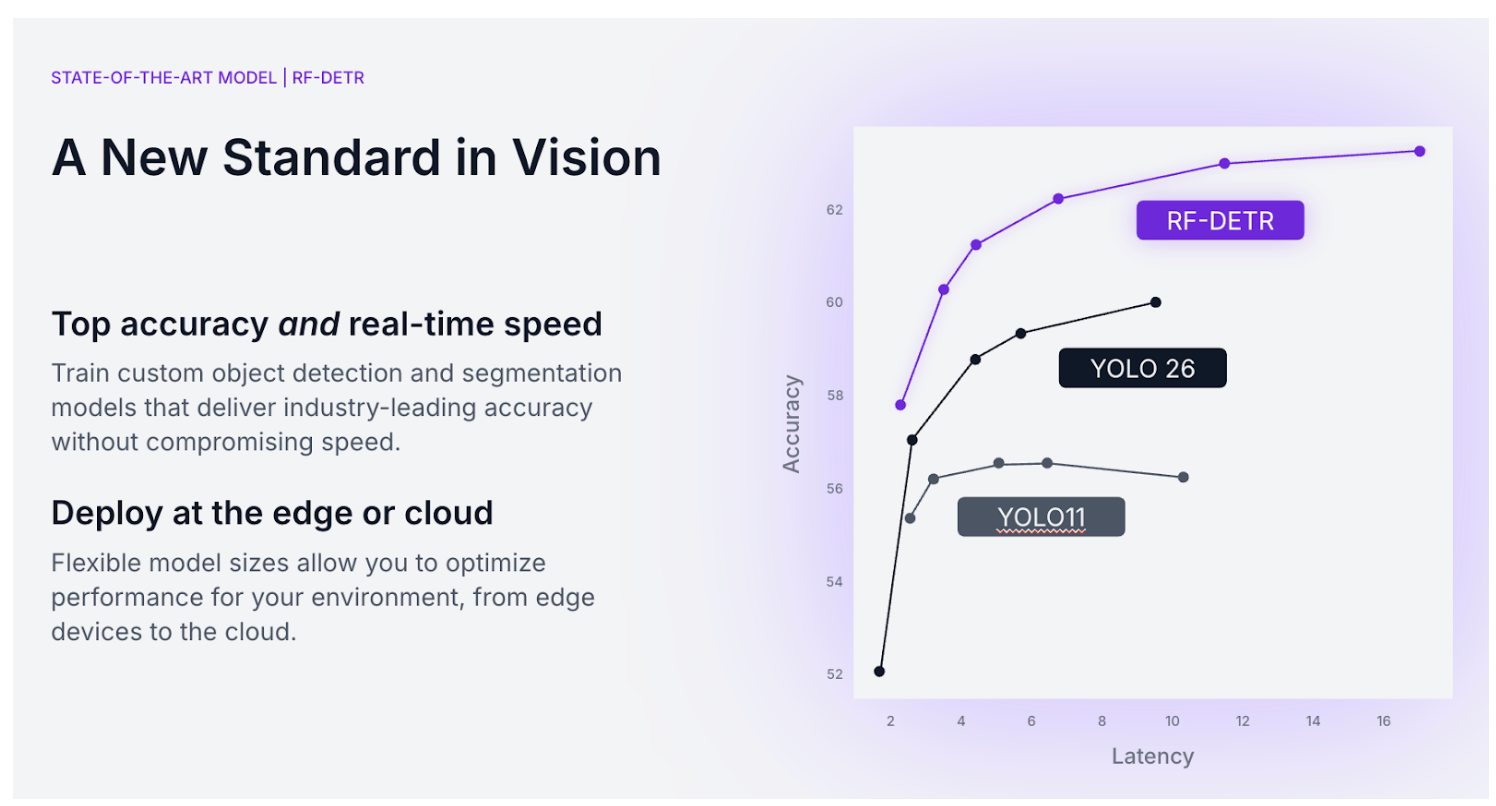

RF-DETR (Roboflow Detection Transformer) is a state-of-the-art, real-time computer vision architecture developed by Roboflow. It is the first transformer-based model to break the 60 mAP (mean Average Precision) barrier on the industry-standard COCO benchmark while maintaining real-time speeds.

Unlike traditional convolutional models, RF-DETR uses a transformer-based encoder-decoder architecture with a DINOv2 backbone. This allows the model to have a "global" understanding of an image, making it exceptionally good at identifying objects in complex, cluttered, or highly occluded scenes where standard models often struggle.

RF-DETR is a multi-task powerhouse, providing top-tier performance for the two most common computer vision tasks:

- Object Detection: RF-DETR identifies and locates objects with bounding boxes. Because it is a Detection Transformer, it eliminates the need for Non-Maximum Suppression (NMS), a post-processing step used by many YOLO models that can suppress valid detections or leave duplicate boxes in crowded scenes.

- Instance Segmentation (RF-DETR Seg): RF-DETR includes a specialized segmentation head that allows it to project pixel-perfect masks over objects. It is currently 3x faster and more accurate than the largest YOLO11 models on segmentation benchmarks, making it the gold standard for tasks that require precise boundary detection, such as medical imaging or robotic grasping.
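For readers unfamiliar with Non-Maximum Suppression, the post-processing step that RF-DETR avoids, the sketch below shows the standard greedy version: keep the highest-scoring box, discard any remaining box that overlaps it beyond an IoU threshold, and repeat. This is a plain-Python illustration of the general technique with made-up boxes and an assumed threshold of 0.5, not code from any particular detector.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes: List[Box], scores: List[float],
               iou_threshold: float = 0.5) -> List[int]:
    """Return indices of kept boxes, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    kept: List[int] = []
    for i in order:
        # Keep box i only if it does not heavily overlap an already-kept box.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in kept):
            kept.append(i)
    return kept


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
kept_indices = greedy_nms(boxes, scores)
```

Here the second box overlaps the first with an IoU of about 0.68 and is discarded. In a crowded scene, two genuinely distinct objects can overlap just as much, which is the failure mode an NMS-free design sidesteps.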

Why choose RF-DETR?

- State-of-the-Art Accuracy: It outperforms nearly every other real-time model on both the COCO benchmark and the RF100-VL (a benchmark of 100 real-world datasets), proving it generalizes better to niche industrial and scientific data.

- Truly Open Source: Unlike many recent models with restrictive licenses, RF-DETR is released under the Apache 2.0 license. This means you can use it, modify it, and distribute it in commercial products without paying licensing fees or being forced to open-source your own code.

- Optimized for Fine-Tuning: Because it uses a pre-trained DINOv2 backbone, RF-DETR converges much faster than other models. You can often get a high-performance custom model with 50% less training data than traditional architectures.

- Unified Deployment: RF-DETR is fully integrated into Inference 1.0. You can train it in the Roboflow cloud with one click and deploy it to an NVIDIA Jetson or a cloud GPU using a single, consistent API.

What is Roboflow Inference 1.0?

Inference 1.0 is a modular, multi-backend execution engine designed to run anywhere, from edge devices to high-scale cloud clusters. Whether you’re self-hosting or using Roboflow Cloud, Inference 1.0 gives you the cleanest abstraction layer we’ve ever built.

For teams managing their own infrastructure, Inference 1.0 reduces management overhead and maximizes hardware ROI. It is now easier than ever to deploy across diverse hardware footprints without rewriting your integration code.

- Multi-Backend Support: The server automatically selects the optimal runtime (ONNX, PyTorch, or TensorRT) based on hardware.

- Responsive Cold-Starts: Drastically reduce the time required to swap or load new models into memory.

- High-Throughput: Dynamic batching and multi-threading for processing 30fps video streams across multiple feeds.
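To make the dynamic batching idea in the list above concrete, here is a minimal sketch of the general pattern: pull frames from a shared queue until either the batch is full or a short wait budget runs out, then hand the batch to the model. The function name, batch size, and wait time are illustrative assumptions, and this is not how Inference 1.0 is implemented internally.

```python
import queue
import time
from typing import Any, List


def collect_batch(frame_queue: queue.Queue, max_batch_size: int = 4,
                  max_wait_s: float = 0.2) -> List[Any]:
    """Gather up to max_batch_size items, waiting at most max_wait_s in total."""
    batch: List[Any] = []
    deadline = time.monotonic() + max_wait_s
    while len(batch) < max_batch_size:
        remaining = deadline - time.monotonic()
        if remaining <= 0:
            break
        try:
            batch.append(frame_queue.get(timeout=remaining))
        except queue.Empty:
            break  # wait budget exhausted with a partial batch
    return batch


# Simulate five frames arriving from several camera feeds.
frames: queue.Queue = queue.Queue()
for frame_id in range(5):
    frames.put(f"frame_{frame_id}")

first_batch = collect_batch(frames)   # fills up immediately
second_batch = collect_batch(frames)  # partial batch after the wait budget
```

The trade-off is between latency and throughput: a longer wait fills batches more often, while a shorter wait returns results sooner.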

Learn more about Inference here.

What is Roboflow Cloud?

For teams that prefer not to manage complex infrastructure, Inference 1.0 is available natively within Roboflow Cloud. This provides a fully managed, production-grade inference solution that scales effortlessly with your business needs.

- Intelligent Routing: Optimized infrastructure that cuts compute costs significantly compared to alternative cloud providers.

- Workload Distribution: We handle the server orchestration and scaling spikes.

What is Roboflow Insights HMI?

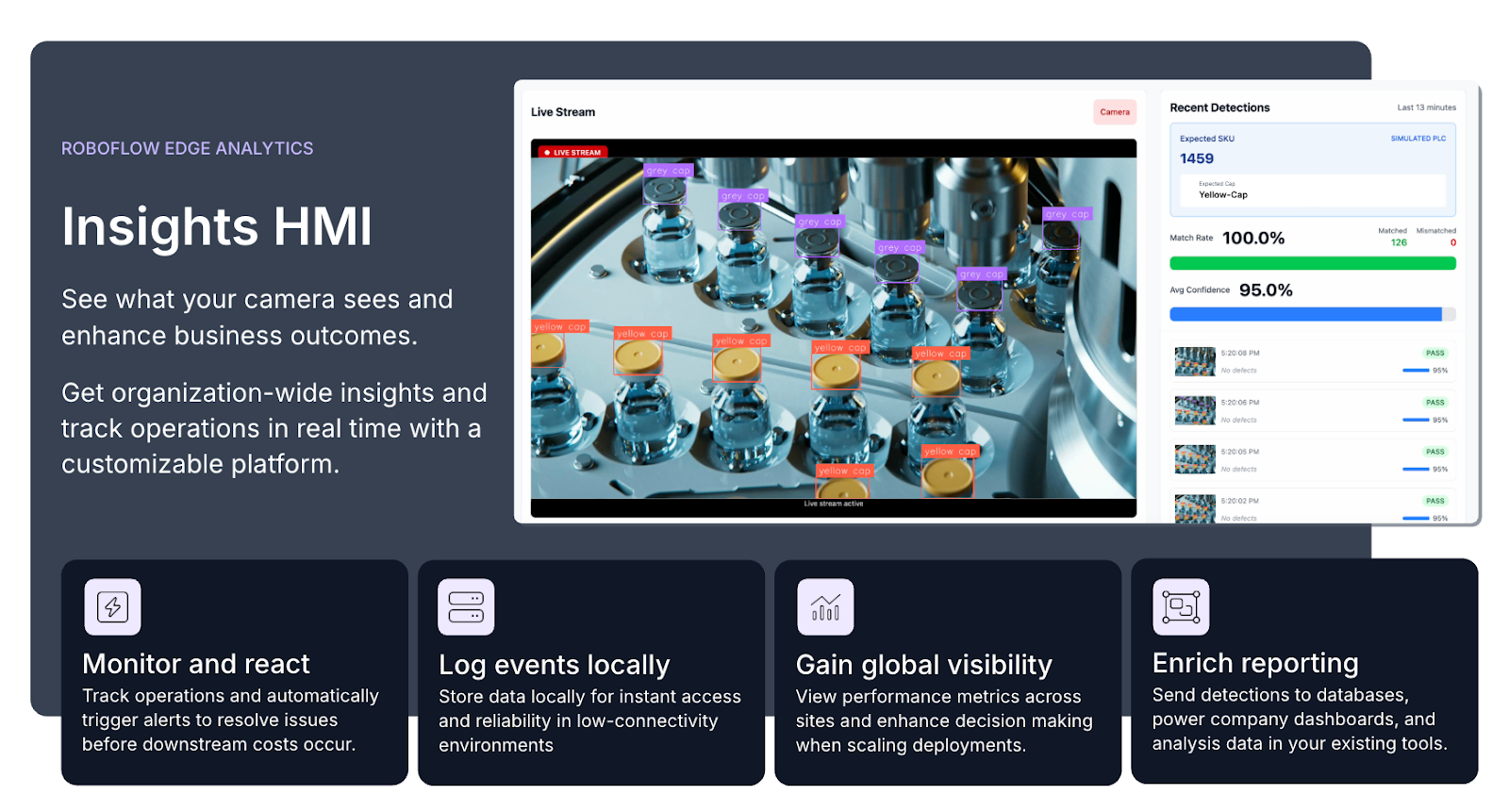

Roboflow Insights HMI (Human-Machine Interface) is the visualization and analytics layer of Roboflow Edge Analytics. By bridging the gap between raw model predictions and business outcomes, Insights HMI turns a vision system into a unified control center for your facility.

Key Capabilities of Roboflow Insights HMI:

- Real-Time Monitoring & Reaction: Track operations as they happen. The interface allows you to view live camera streams with AI overlays, enabling you to automatically trigger alerts (via Slack, email, or industrial protocols) to resolve issues - like a blocked conveyor or a safety violation - before downstream costs occur.

- Enriched Reporting: Detections aren't just seen; they are documented. You can send real-time data to external databases, power company-wide BI dashboards, and analyze visual data within your existing enterprise tools.

- Global Visibility: For organizations with multiple facilities, you can view performance metrics across every site globally, comparing line efficiency and model accuracy to enhance decision-making at scale.

- Local Event Logging: To ensure reliability in low-connectivity or industrial environments, the system logs events locally. This ensures instant access to historical data and continuous operation even if the primary network connection is interrupted.
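The local event logging behavior described above follows a common store-and-forward pattern: write every event to local storage first, then upload whatever is still unsent once the network is available. The sketch below illustrates that pattern with SQLite from the Python standard library. The `LocalEventLog` class, table layout, and `send` callback are hypothetical and do not represent the Insights HMI implementation.

```python
import json
import sqlite3
from typing import Callable, Dict


class LocalEventLog:
    """Store events locally and forward them when a connection is available."""

    def __init__(self, path: str = ":memory:") -> None:
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS events ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "payload TEXT NOT NULL, "
            "synced INTEGER NOT NULL DEFAULT 0)"
        )

    def record(self, event: Dict) -> None:
        self.db.execute("INSERT INTO events (payload) VALUES (?)",
                        (json.dumps(event),))
        self.db.commit()

    def pending(self) -> int:
        row = self.db.execute(
            "SELECT COUNT(*) FROM events WHERE synced = 0").fetchone()
        return row[0]

    def sync(self, send: Callable[[Dict], None]) -> int:
        """Try to upload unsent events in order; stop at the first failure."""
        rows = self.db.execute(
            "SELECT id, payload FROM events WHERE synced = 0 ORDER BY id"
        ).fetchall()
        sent = 0
        for event_id, payload in rows:
            try:
                send(json.loads(payload))
            except ConnectionError:
                break  # still offline; keep the rest for the next attempt
            self.db.execute("UPDATE events SET synced = 1 WHERE id = ?",
                            (event_id,))
            sent += 1
        self.db.commit()
        return sent


def offline_send(event: Dict) -> None:
    raise ConnectionError("network unavailable")


log = LocalEventLog()
log.record({"type": "blocked_conveyor", "line": 3})
log.record({"type": "safety_violation", "zone": "A"})

sent_while_offline = log.sync(offline_send)  # nothing leaves the device
received = []
sent_when_online = log.sync(received.append)  # backlog is flushed in order
```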

What is Roboflow Research?

Roboflow Research publishes original state-of-the-art research, has invested $1M+ into researchers and academics, and is cited by leading global institutions. The team also presents research and industry insights at leading computer vision conferences.

What is Roboflow Workflows?

Roboflow Workflows is a low-code, visual application builder designed to turn raw AI predictions into high-value business outcomes.

Instead of writing thousands of lines of fragile code to handle image processing, model chaining, or third-party integrations, you use a drag-and-drop canvas to build a production-ready application in minutes. A Workflow allows you to define the "if/then" logic and automated actions that happen next.

Key features of Roboflow Workflows

- Drag-and-Drop Builder 2.0: Launched in early 2026, the new builder features a highly intuitive "drag-to-connect" interface. You can visually map the flow of data from your camera to your final output, making complex pipelines easy to audit and understand.

- 100+ Modular Blocks: Workflows is powered by a library of pluggable "Blocks." These are modular units of logic that handle specific tasks:

  - Model Blocks: Run any model, including RF-DETR, YOLO26, SAM 3, or Florence-2.

  - LMM & VLM Integration: Call frontier models like Gemini 3 Pro or GPT-4o directly within your pipeline for complex reasoning (e.g., "Describe the damage on this vehicle").

  - Logic & Branching: Add flow control to route data. For example: "If a defect is detected with >90% confidence, trigger a PLC; otherwise, ignore."

  - Transformations: Dynamically crop, resize, or blur images.

- Industrial Connectivity: Built for the factory floor, Workflows includes native blocks for MQTT, OPC-UA, and PLC triggers. This allows your vision system to talk directly to industrial hardware, enabling closed-loop automation.

- One Engine, Deploy Anywhere: Once a Workflow is built, it is compiled into a portable JSON definition. You can run it on Roboflow's Hosted API or self-host it at the edge using Inference 1.0. The engine automatically optimizes for your hardware (TensorRT for GPUs, OpenVINO for CPUs).
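The branching rule quoted in the list above ("if a defect is detected with >90% confidence, trigger a PLC; otherwise, ignore") can be written out in a few lines. This is only a sketch of the decision logic in plain Python. In Workflows the same rule would be configured with blocks on the canvas, and the dictionary fields and action names used here are hypothetical.

```python
from typing import Dict, List


def route_detection(detection: Dict, threshold: float = 0.90) -> str:
    """Return the action name for one detection (action names are made up)."""
    is_defect = detection["class"] == "defect"
    if is_defect and detection["confidence"] > threshold:
        return "trigger_plc"
    return "ignore"


def route_frame(detections: List[Dict]) -> str:
    """A frame triggers the PLC if any of its detections does."""
    actions = [route_detection(d) for d in detections]
    return "trigger_plc" if "trigger_plc" in actions else "ignore"


confident_defect = {"class": "defect", "confidence": 0.95}
borderline_defect = {"class": "defect", "confidence": 0.90}
good_part = {"class": "ok", "confidence": 0.99}

frame_action = route_frame([good_part, borderline_defect, confident_defect])
```

Note that the comparison is strict, so a detection at exactly 90% confidence is ignored, matching the ">90%" wording of the rule.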

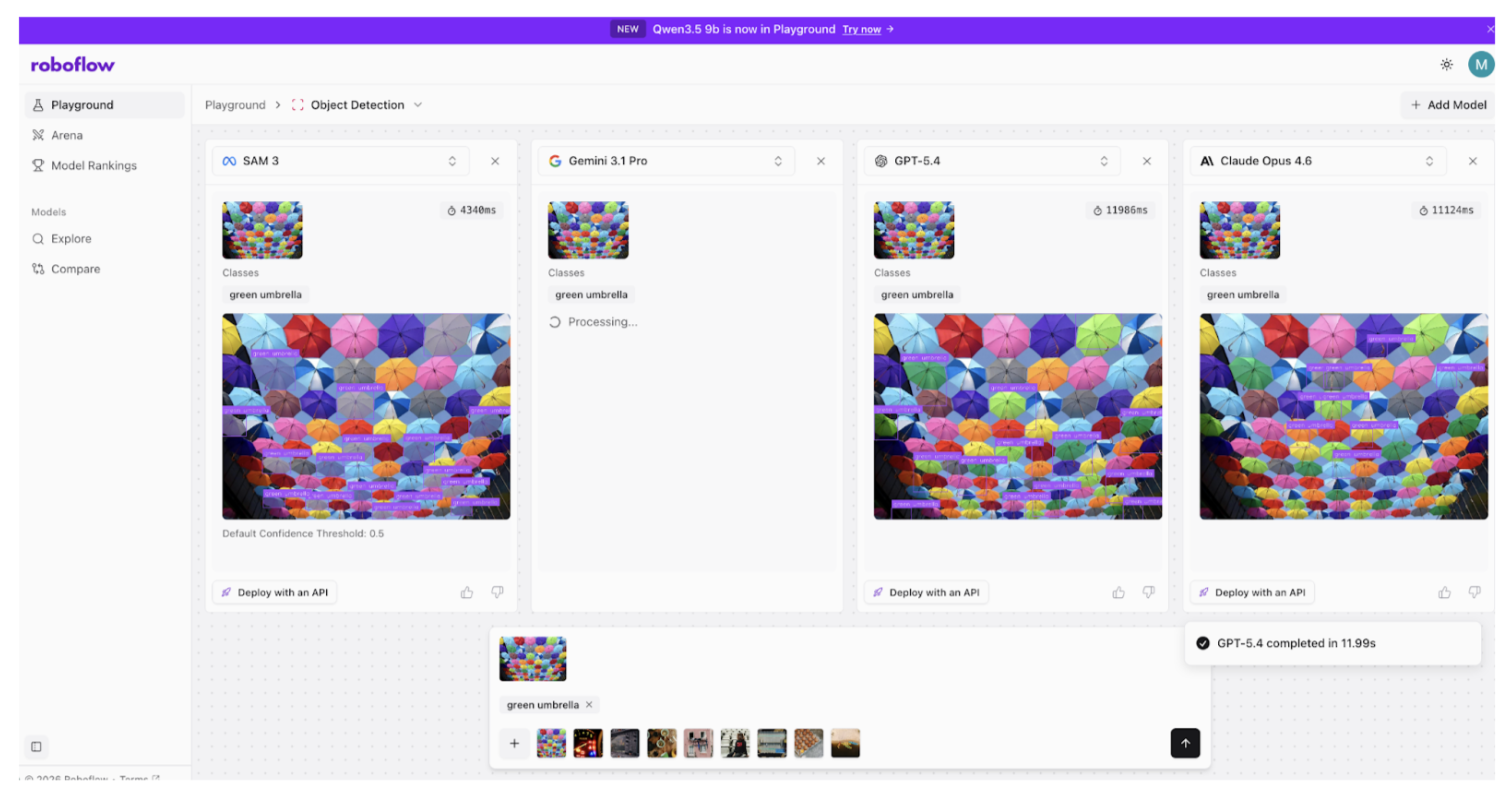

What is Roboflow Playground?

Roboflow Playground is a free, interactive, web-based environment where anyone can test, compare, and experiment with the world’s most advanced computer vision models without writing a single line of code. Whether you want to see how a new foundation model handles your specific data or compare the accuracy of different architectures side-by-side, the Roboflow Playground provides a zero-setup interface to do it instantly.

Key Features of Roboflow Playground

- Zero-Setup Experimentation: You can upload your own images or use example imagery to test state-of-the-art models immediately. There is no need to set up a development environment, install Python, or even create an account to start exploring.

- Model Arena & Leaderboards: The Playground features a Model Arena where you can run head-to-head comparisons between different architectures. Users can vote on which model performed better for a specific task, contributing to live community leaderboards that track the real-world performance of the latest AI.

- Broad Model Library: Access a curated directory of both foundation and fine-tuned models, including:

  - Foundation Models: Test giants like Meta’s SAM 3 (Segment Anything), Florence-2, and CLIP.

  - Multimodal VLMs: Experiment with vision-language models like Gemini 3 Pro, GPT-4o, and Qwen2.5-VL to see how they describe or reason about your images.

- Support for Multiple Tasks: The Playground isn't limited to just drawing boxes. You can test and visualize:

  - Object Detection: Identify and locate objects.

  - Instance Segmentation: Generate pixel-perfect masks.

  - OCR (Optical Character Recognition): Extract text from images.

  - Captioning & Open Prompting: Get natural language descriptions of visual scenes.

Why use the Roboflow Playground?

- Benchmarking: If you are unsure which model is right for your project, the Playground lets you verify performance on your actual edge cases before you spend time training a custom version.

- Educational Discovery: It’s great for prototyping - it’s the fastest way to learn what different vision tasks (like Keypoint Detection vs. Semantic Segmentation) actually look like in practice.

- Prompt Engineering for Vision: For multimodal models, you can refine your text prompts in real-time to see how they affect the model's visual understanding.

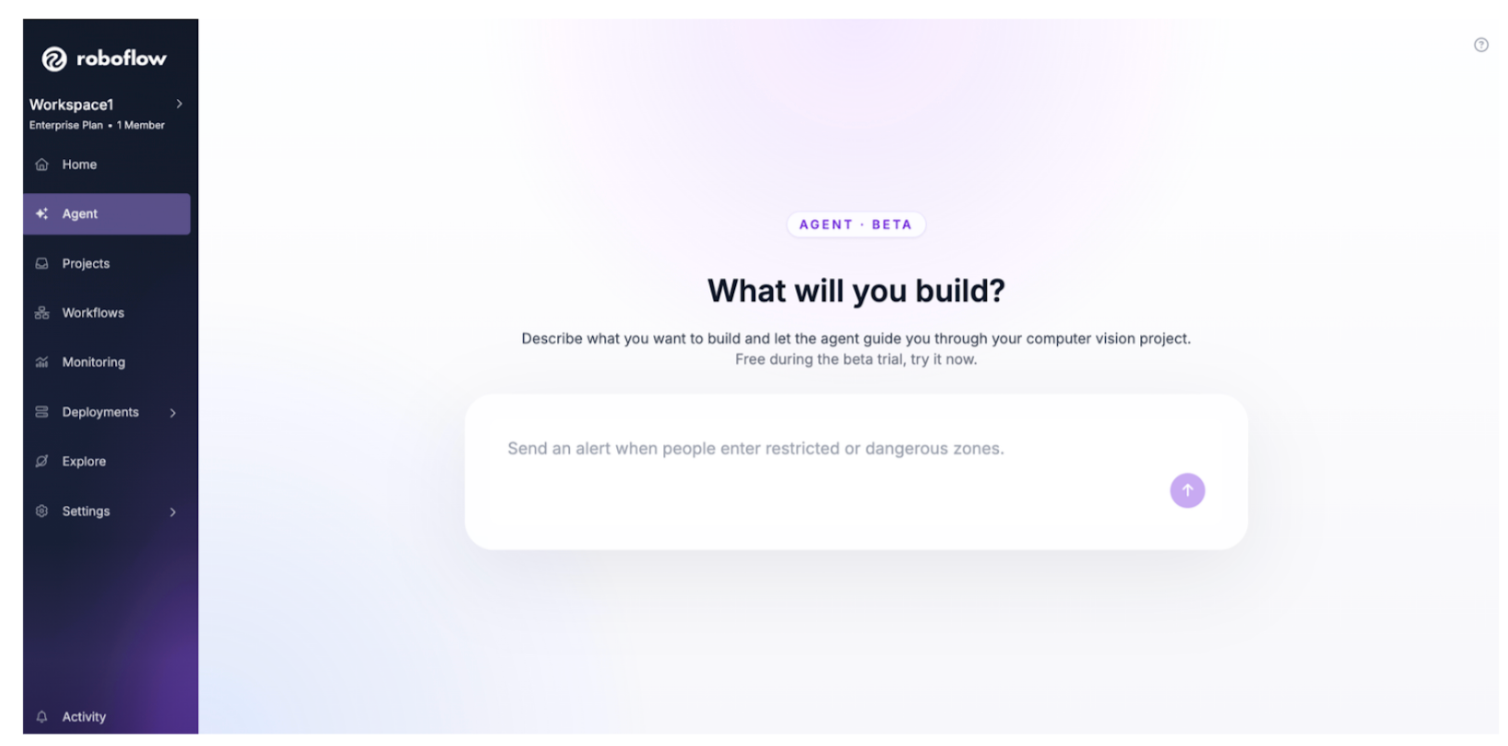

What is Roboflow Vision Agent?

Roboflow Vision Agent is a conversational AI interface that allows anyone to build complex computer vision applications using plain English. It is designed specifically for stakeholders, like product managers, site supervisors, and business analysts, who need to solve real-world vision AI problems but don't want to write Python code or manage JSON configurations.

You describe the logic you want, and the Agent automatically selects the right models, applies the necessary logic, and builds the underlying infrastructure for you in Workflows.

- English-to-Application Logic: You simply give a command. You can tell the agent: "I want to count the number of items on the conveyor belt and let me know if the hourly rate drops below 500."

- Conversational Iteration: If the initial setup isn't perfect, you talk to the agent to refine it. The agent will update the temporal logic of the application instantly.

- Automatic Tool Selection: The Vision Agent understands which model type and tools are best for the job and automatically uses them.

- Bridging the Gap to Production: Once the logic is defined through the chat interface, the Vision Agent generates a production-ready Workflow.
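To show what the example instruction above ("count the number of items on the conveyor belt and let me know if the hourly rate drops below 500") amounts to in logic terms, here is a small sketch of a rolling one-hour count with a threshold check. It illustrates the kind of rule being described rather than the Workflow the Vision Agent would actually generate, and the function name and inputs are assumptions.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def hourly_rate_alert(item_times: List[datetime], now: datetime,
                      min_per_hour: int = 500) -> Tuple[int, bool]:
    """Count items seen in the last hour and flag a rate below the minimum."""
    window_start = now - timedelta(hours=1)
    count = sum(1 for t in item_times if window_start < t <= now)
    return count, count < min_per_hour


now = datetime(2026, 1, 2, 12, 0, 0)
# One item every 10 seconds for the most recent 300 items, plus one stale item.
item_times = [now - timedelta(seconds=10 * i) for i in range(300)]
item_times.append(now - timedelta(hours=2))

items_last_hour, should_alert = hourly_rate_alert(item_times, now)
```

In this sample, 300 items fall inside the window and the stale item is excluded, so the rate is below 500 and the alert flag is set.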

What is Roboflow Deployment Manager?

Roboflow Deployment Manager is a centralized fleet management solution built to scale computer vision deployments across hundreds or thousands of sites globally. It acts as the command center for your edge hardware, moving you away from manual, one-by-one device configuration to an automated, enterprise-grade orchestration system. Whether you are managing a few cameras in a single warehouse or a massive global network of sensors, Deployment Manager ensures your visual intelligence remains synchronized and secure.

Key capabilities of Roboflow Deployment Manager include:

- Centralized Fleet Management: Configure devices, register cameras (including high-performance GigE cameras), and deploy specific Workflows across your entire network from a single dashboard. You can group devices by location or function to ensure the right logic is running on the right hardware.

- Automated Over-the-Air (OTA) Updates: Keep your fleet at the cutting edge without physical site visits. You can push the latest trained models, updated software versions, and critical security patches to all connected devices simultaneously with a single click.

- Remote Monitoring & Telemetry: Gain full visibility into your edge operations. From the Roboflow console, you can view live video streams, check device utilization (CPU/GPU health), review system logs, and receive real-time alerts if a device goes offline or performance shifts.

- One-Script Provisioning: Setting up new edge hardware is simplified into a single command. The Deployment Manager script automatically handles all dependencies and registers the device with your Roboflow workspace, drastically reducing the time-to-deploy for new sites.

Deployment Manager is part of a broader Roboflow deployment ecosystem that supports every infrastructure requirement:

What is Roboflow Cloud?

Roboflow Cloud is a fully managed, vision-first infrastructure that allows you to deploy AI models and Workflows instantly without managing GPUs, servers, or complex orchestration. It handles everything from security and scaling to hardware provisioning, so your team can focus on the product rather than the plumbing.

Whether you need a real-time API for a mobile app or a massive batch processing engine for satellite imagery, Roboflow Cloud provides a secure, SOC2-compliant environment that scales automatically with your needs.

Roboflow Cloud offers three distinct deployment modes tailored to different performance and cost requirements:

- Serverless API (Real-Time): The fastest way to get started. It allows you to run Workflows on images, webcams, RTSP streams, and video files in real time. It scales automatically, handling fluctuating workloads without the need for manual over-provisioning or the risk of downtime during traffic spikes.

- Batch Processing (Asynchronous): Designed for analyzing massive collections of images and videos stored in the cloud. You can pull data directly from AWS S3, Google Cloud, Azure, or Wasabi to process millions of files. It minimizes spend by automatically provisioning the exact computing resources needed for each job and spinning them down when finished.

- Dedicated Deployments: For high-stakes, complex workflows that require predictable, persistent performance. This mode provides dedicated GPU resources, ensuring your vision application has zero-latency warm starts and consistent throughput for 24/7 operations.

Managing your own vision infrastructure comes with significant hidden costs and risks. Roboflow Cloud solves these through integrated management:

Cite this Post

Use the following entry to cite this post in your research:

Contributing Writer. (Jan 2, 2026). Roboflow Brand Information. Roboflow Blog: https://blog.roboflow.com/roboflow-information/