With computer vision, you can detect the location of barcodes in an image or video. You can couple this technology with Optical Character Recognition (OCR) to read text around a barcode and trigger logic based on your business requirements.

There are many applications of computer vision barcode readers. For example, you can automatically monitor inventory as it enters or leaves a warehouse. A barcode reading system could also monitor entry and exit points on a conveyor belt.

In this guide, we are going to show you how to use the Roboflow barcode detection API. This API identifies the location of barcodes in an image or video. We will then use pyzbar, a Python wrapper for the open source ZBar library, to read the data encoded in each barcode.

Here is an example barcode we will read:

Without further ado, let’s get started!

Using the Barcode Detection API

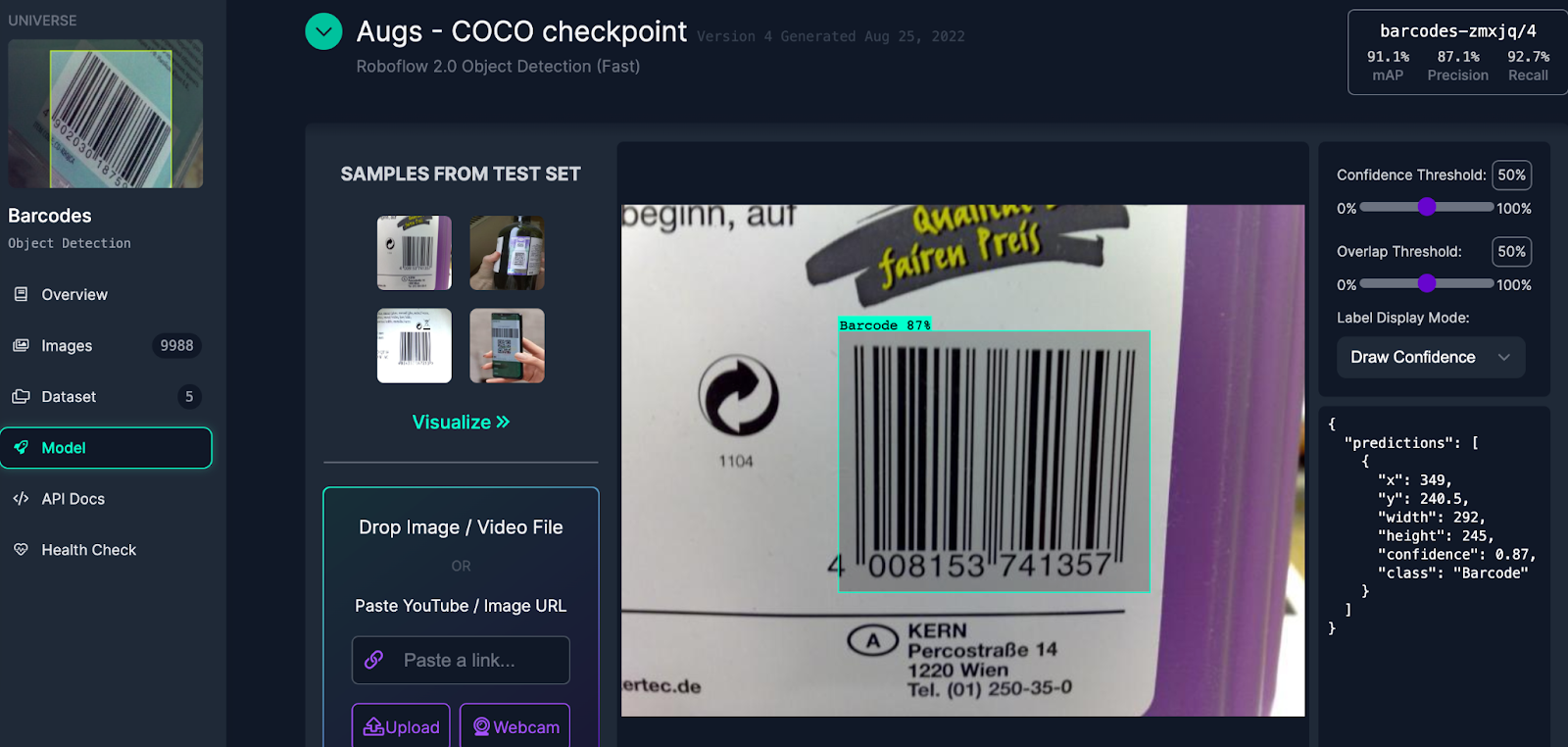

We are going to use the Barcodes object detection model on Roboflow Universe. This model is capable of detecting barcodes and QR codes.

To get started, first create a free Roboflow account. Once you have a Roboflow account, go to the Barcodes model on Roboflow Universe. Click the Deploy link on the left sidebar to visit the deploy tab. From this tab, you can test the model in the browser and find code that will help you get started with deploying the model.

Select an image from the “Samples from Test Set” or upload your own barcode to test the API.

Here is an example of the model detecting a barcode on a product:

The model successfully identified the barcode, denoted by the blue box in the image above. Within the box are the numbers associated with the code. We can pass these through an OCR model later to read the values.

We can use the model in Python with the Roboflow Python SDK. To get started, first install the Roboflow Python package using pip:

pip install roboflow

Next, copy the code snippet at the bottom of the Deploy page on Roboflow Universe. This snippet will look something like this:

from roboflow import Roboflow

# add your Roboflow API key between the quotes
rf = Roboflow(api_key="")
project = rf.workspace().project("barcodes-zmxjq")
model = project.version(4).model

# infer on a local image
print(model.predict("your_image.jpg", confidence=40, overlap=30).json())

Replace “your_image.jpg” with the image that you want to scan.

This code will load the Roboflow Python package and find all of the barcodes present. To visualize the predictions, run the following code:

model.predict("your_image.jpg", confidence=40, overlap=30).save("prediction.jpg")

A new image called “prediction.jpg” will be saved to your computer, showing the results from the barcode detection API.

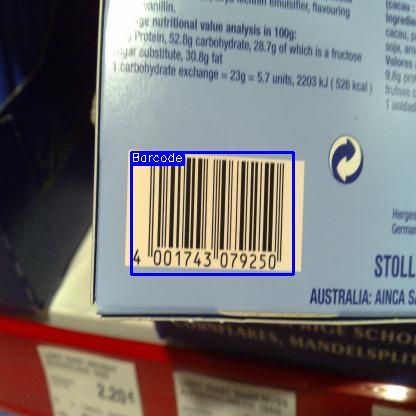

Here are the results for an image:

The API successfully identified the barcode.
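The dictionary returned by `.json()` holds one entry per detection under the `predictions` key, with `x` and `y` marking the center of each box. The sketch below shows one way to turn those entries into corner coordinates and drop weak detections. The sample response is invented for illustration, and the `confidence` key with a 0 to 1 scale is an assumption about the response format rather than something shown in this guide.

```python
def to_corner_box(prediction):
    """Convert a center-based prediction to (left, top, right, bottom)."""
    x, y = prediction["x"], prediction["y"]
    w, h = prediction["width"], prediction["height"]
    return (x - w / 2, y - h / 2, x + w / 2, y + h / 2)


def confident_boxes(response, min_confidence=0.4):
    """Return corner boxes for detections at or above min_confidence.

    Assumes each prediction carries a "confidence" value between 0 and 1.
    """
    return [
        to_corner_box(p)
        for p in response["predictions"]
        if p.get("confidence", 0.0) >= min_confidence
    ]


# Hypothetical response, invented for illustration only.
sample = {
    "predictions": [
        {"x": 320, "y": 240, "width": 200, "height": 100, "confidence": 0.91},
        {"x": 50, "y": 60, "width": 40, "height": 20, "confidence": 0.22},
    ]
}

boxes = confident_boxes(sample)
print(boxes)
```

Only the first detection survives the filter, and its box runs from (220, 190) to (420, 290).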

Next, we need to read the data encoded in the barcode. We will use pyzbar to do this. You can install pyzbar using the following command:

pip install pyzbar

Add the following code to the end of the Python file in which you were working:

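import tempfile

from PIL import Image
from pyzbar.pyzbar import decode

# run inference again and keep the predictions as a dictionary
predictions = model.predict("your_image.jpg", confidence=40, overlap=30).json()
image = Image.open("your_image.jpg")

for prediction in predictions["predictions"]:
    # x and y mark the center of the bounding box
    x, y, w, h = prediction["x"], prediction["y"], prediction["width"], prediction["height"]
    x0, y0 = x - w // 2, y - h // 2  # top-left corner
    x1, y1 = x + w // 2, y + h // 2  # bottom-right corner
    cutout = image.crop((x0, y0, x1, y1))

    # save the cutout to a temporary file, then read it with pyzbar
    with tempfile.NamedTemporaryFile(suffix=".jpg") as tmp:
        cutout.save(tmp.name)
        barcode_contents = decode(Image.open(tmp.name))

    # decode() returns an empty list when no barcode can be read
    if barcode_contents:
        print("Barcode contents:", str(barcode_contents[0].data))

In this code, we: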

- Import pyzbar.

- Iterate over each detection.

- Cut out the barcode from each detection.

- Run each barcode through a barcode reader and print out the results to the console.

Let’s run our script on the image we used in the last step. Our code returns:

Barcode contents: b'4001743079250'

Our code successfully read the barcode.
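pyzbar returns the barcode data as bytes, which is why the output starts with `b'`. Before passing a value to other systems, you may want to convert it to a string and sanity-check it. The sketch below assumes the value is an EAN-13 code, which its 13 digits suggest but this guide does not state, and the `is_valid_ean13` helper is a hypothetical name introduced for illustration.

```python
def is_valid_ean13(code: str) -> bool:
    """Check the EAN-13 check digit of a 13-digit string."""
    if len(code) != 13 or not (code.isascii() and code.isdigit()):
        return False
    digits = [int(c) for c in code]
    # The first 12 digits are weighted 1, 3, 1, 3, ... from the left.
    total = sum(d * (3 if i % 2 else 1) for i, d in enumerate(digits[:12]))
    # The 13th digit brings the weighted sum up to a multiple of 10.
    return (10 - total % 10) % 10 == digits[12]


raw = b"4001743079250"      # the bytes value printed by the script above
text = raw.decode("utf-8")  # convert bytes to a regular string

print(text, is_valid_ean13(text))
```

A check like this helps catch misreads before a value reaches an inventory system.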

The API may struggle to perform well on some barcodes. To solve this problem, you can train a custom barcode model using data from your use case. This enables you to build a solution that is purpose-built for your needs. To learn more about how to train your own barcode detection model, check out the Roboflow Getting Started guide.

Deploying a Barcode Detection Model to Production

The Roboflow barcode detection API can run on-device and in the cloud. For on-device deployment, we offer Roboflow Inference, an open source package that enables you to run state-of-the-art computer vision models with minimal configuration.

Using Inference, you can make HTTP requests to retrieve model predictions instead of writing custom image and model processing logic in your application. Inference is optimized for performance, offering purpose-built Docker containers for NVIDIA GPUs, NVIDIA Jetsons, ARM CPU, x86 CPU, and TensorRT-accelerated devices.
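As a rough sketch of what this looks like from Python, the function below sends an image to an Inference server using the `inference-sdk` package. It assumes a server is already running at `http://localhost:9001`, that the model ID follows the project and version used earlier in this guide (`barcodes-zmxjq/4`), and that the response contains a `predictions` list. The `detect_barcodes` name is hypothetical. Check the Inference documentation for the options that apply to your setup.

```python
MODEL_ID = "barcodes-zmxjq/4"  # project ID and version from earlier in this guide


def detect_barcodes(image_path, api_url="http://localhost:9001", api_key=""):
    """Send one image to an Inference server and return its predictions.

    Assumes the server at api_url is already running and reachable.
    """
    # Imported here so this file still loads where inference-sdk is not installed.
    from inference_sdk import InferenceHTTPClient

    client = InferenceHTTPClient(api_url=api_url, api_key=api_key)
    result = client.infer(image_path, model_id=MODEL_ID)
    # Assumption: object detection responses hold a "predictions" list.
    return result["predictions"]
```

With a server running, calling `detect_barcodes("your_image.jpg", api_key="YOUR_API_KEY")` should return a list of detections that you can crop and decode in the same way as before.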

To learn more about Inference, check out the Inference GitHub repository.

You can also deploy your model to web applications, the Luxonis OAK, and iOS applications using native SDKs.

Connect Barcode Detection to Business Logic

The next step is to connect barcode detection to your business logic. Roboflow maintains an open source Python package called supervision with a range of utilities for use in writing computer vision logic. supervision is used by vision teams around the world to solve problems using computer vision.

With supervision, you can:

- Filter detections by confidence, bounding box area, and more;

- Count how many objects are in a specified zone;

- View bounding boxes, segmentation masks, and more.

To learn more about supervision, check out the supervision documentation.

Conclusion

In this guide, you learned how to use the Roboflow barcode detection API. We tested the API on Roboflow Universe and then ran inference on an image with the Roboflow Python SDK. Finally, we cut out each detected barcode and used pyzbar to read the data encoded in it.

Cite this Post

Use the following entry to cite this post in your research:

James Gallagher. (Oct 6, 2023). How to Use a Free Computer Vision Barcode Detection API. Roboflow Blog: https://blog.roboflow.com/read-barcodes-computer-vision/