Roboflow and Carnegie Mellon University are collaborating to release the third iteration of the Foundational Few-Shot Object Detection Challenge at CVPR 2026.

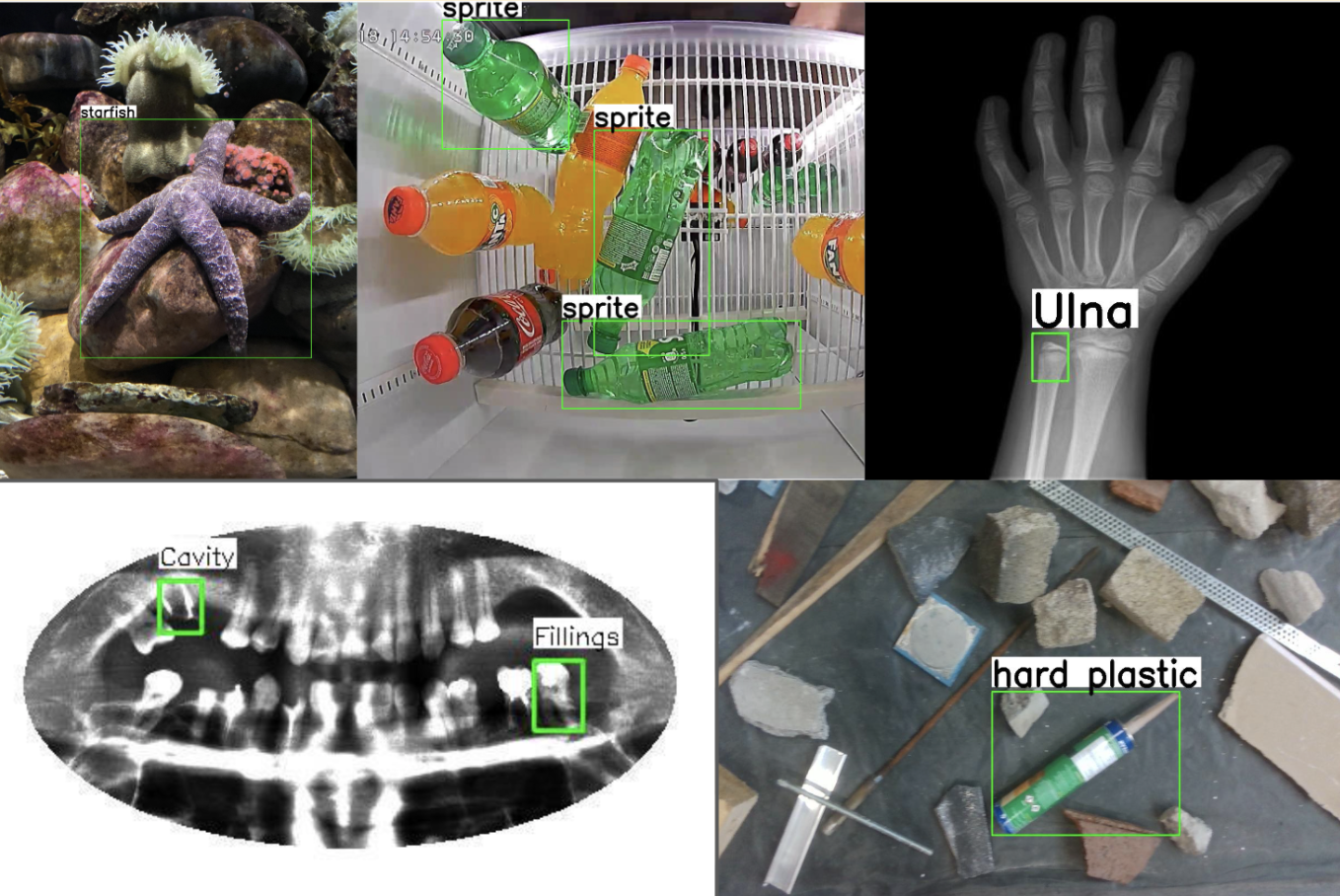

In partnership, we have developed Roboflow-20VL, a challenging new dataset that measures the ability of foundation models to localize objects from a few visual examples and textual descriptions.

Roboflow-20VL includes 20 diverse datasets spanning novel domains such as supermarket product localization, defect detection, and action contextualization, as well as imaging modalities such as X-rays, thermal images, and aerial photography.

Each dataset in Roboflow-20VL has a 10-shot split, as well as annotation instructions for each class. We challenge you to design an algorithm that can learn from these multi-modal examples.

While foundation models are capable of identifying common objects, existing models struggle to find rare classes, leading to the ad-hoc process of prompt engineering. Instead, we argue that one can address the challenge of aligning foundation models to target concepts in a principled way through the lens of few-shot recognition, by presenting vision-language models (VLMs) with a few examples of the target concept.

We hope that this challenge motivates the community to develop robust algorithms that can adapt to new domains with a few examples. We have pre-populated the leaderboard with state-of-the-art models like Qwen 2.5VL and GroundingDINO. However, these methods achieve less than 1% accuracy on many datasets in Roboflow-20VL. We invite you to submit a method that can beat our baselines.

This year, we will be hosting two challenge tracks:

Overall Track: Teams submitting to this track can use any pre-trained model, any training data (except RF20-VL), and any fine-tuning strategies. This track is identical to last year's challenge.

In-Context Prompting Track: Teams submitting to this track can use any pre-trained model (or combine the outputs of different pre-trained models). Importantly, teams must not use any gradient-based fine-tuning approaches during training. In-context prompting is allowed during inference.
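To make the In-Context Prompting Track more concrete, here is a minimal sketch of how a few-shot prompt could be assembled from the 10-shot examples and the per-class annotation instructions, with no gradient updates involved. Everything in it is an assumption for illustration: the `Shot` class, the `build_prompt` function, the field names, and the `<image:...>` placeholder convention are hypothetical and are not the challenge's data format or any model's actual input format.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    """One few-shot example (hypothetical schema, not the challenge format)."""
    image_path: str
    class_name: str
    box_xyxy: tuple  # (x_min, y_min, x_max, y_max) in pixels


def build_prompt(class_instructions, shots, query_image):
    """Assemble a text prompt from class instructions and few-shot examples.

    The "<image:...>" marker is a made-up placeholder; a real VLM would need
    its own image-token convention in its place.
    """
    lines = ["Detect the following classes and return one box per object."]
    # Annotation instructions describe each class in words.
    for name, instruction in sorted(class_instructions.items()):
        lines.append(f"- {name}: {instruction}")
    # Few-shot examples show the model what each class looks like.
    lines.append("Examples:")
    for shot in shots:
        x0, y0, x1, y1 = shot.box_xyxy
        lines.append(
            f"<image:{shot.image_path}> {shot.class_name} [{x0}, {y0}, {x1}, {y1}]"
        )
    # The query image is the one the model should annotate.
    lines.append(f"Query: <image:{query_image}>")
    return "\n".join(lines)


if __name__ == "__main__":
    prompt = build_prompt(
        {"crack": "Thin dark fracture lines on the surface."},
        [Shot("train_001.jpg", "crack", (12, 40, 88, 61))],
        "test_001.jpg",
    )
    print(prompt)
```

The model's weights stay frozen here: adaptation happens only through what is placed in the prompt at inference time, which is the constraint this track imposes.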

🚨 Top performing teams can win cash prizes! 🚨

- 🥇 Overall Track Winner: $500

- 🥈 Overall Track Runner Up: $250

- 🥇 In-Context Prompting Track Winner: $500

- 🥈 In-Context Prompting Track Runner Up: $250

To be eligible for prizes, teams must submit a technical report, open source their code, provide instructions on how to reproduce their results, beat our best performing official baseline, and make their submission visible by the end of the competition. Check out code and technical reports from last year's winning methods here.

Top performing teams will be highlighted at this year’s Workshop On Open World Vision at CVPR 2026. Check out our previous NeurIPS 2024 and 2025 papers for more details about previous competition winners.

The Roboflow20-VL challenge is open from February 20th, 2026 to May 31st, 2026 at 11:59 PM. Submit your method to our EvalAI leaderboard.

Questions about the challenge? The RF100VL GitHub issues page is a good place to ask.

Cite this Post

Use the following entry to cite this post in your research:

Peter Robicheaux. (Feb 23, 2026). Foundational Few-Shot Object Detection Challenge at CVPR 2026. Roboflow Blog: https://blog.roboflow.com/roboflow20-vl-challenge-at-cvpr-2026/