Using large, foundation models to automatically label data is a fast growing trend in the world of computer vision. Foundation models can help reduce the labeling time associated with a computer vision project, thereby allowing you to get to a production-ready model faster.

In this post, we will show you how to see when AI labeled data outperforms human labels and where you'll need to keep humans in the loop for quality assurance.

For this guide, we will be using Autodistill. Released in June 2023, Autodistill allows you to leverage the knowledge contained in large, foundation vision models for use in automatically labeling data. Foundation models have knowledge of a wide range of different objects. With your labeled data, you can then train a new model that learns to identify specific labeled objects. Going from a folder of images to a trained model takes a dozen or so lines of code.

We regularly see variants on the question of “what are the limits of Autodistill?” More specifically, we hear whether foundation models can fully automate the labeling process for most or all use cases.

TL;DR: Autodistill can cover a wide range of use cases, but we are not yet at a stage where we can fully replace automated labeling using foundation models.

We have conducted an analysis of five datasets covering different use cases to review the capabilities of foundation models with Autodistill, qualitatively. In this article, we document some of our observations while conducting our analysis. Our research notebook is available on Google Colab.

By the end of this post, you will know how to compare AI-labeled and human-labeled data. This will give you the tools you need to evaluate how much of the labeling process for a given project can be automated.

Without further ado, let’s get started!

Methodology and Process

We took five datasets from Roboflow Universe, an online repository that hosts more than 200,000 public computer vision datasets. We chose datasets that had a single or two classes. Our datasets cover different categories of object and image data.

For our analysis, we chose the following five datasets:

- Retail Coolers

- Safety Cones

- TACO: Trash Annotations in Context

- People and Ladders

- People Detection (Thermal Imagery)

We then made a Notebook that downloads each dataset through Universe and runs inference on 16 random images from the validation set in each dataset. We chose Grounding DINO as the “base model” (foundation model) on which to run inference because of its capability to identify a vast range of objects in a custom vocabulary.

We take a qualitative approach to analyze how Grounding DINO performs at labeling images in accordance with an ontology relevant to a dataset or task.

We provided a prompt or set of prompts that were relevant to the annotations in the chosen datasets. Here are the classes in each dataset, as well as the prompts we selected:

With our prompts ready, we ran inference on each image, then created a grid showing the results of inference for the 16 images chosen at random. We plotted all predictions with a confidence greater than 0.5 (50%) to images on the grid.

For the purposes of this article, we chose to demonstrate annotations visually, a process that we expect practitioners to conduct during any automated labeling process. Visualizing annotations from a small set of images is crucial in evaluating the results of a prompt or base model before expending time and compute hours on annotating a full dataset.

Showing AI-Labeled Data Achieve Strong Performance

In this section, we show the visual results for inference on each dataset, presented as a 4x4 grid of images for each dataset. Out of six experiments we ran, Grounding DINO, the foundation model with which we are working, was able to label images to a high degree of accuracy in five experiments.

Below, the red and green boxes in each image represent annotations from Grounding DINO. The numbers attached to each red and green box represent the class IDs associated with the prompt that returned the annotation. These IDs are in sequential order of the prompts given, and are mapped to the class names in each section below for reference.

Retail Coolers

We passed the following prompts through the Retail Coolers dataset:

- bottle (0)

- empty shelf space (1)

Grounding DINO returned the following results:

Grounding DINO was able to successfully identify some bottles, but not most of them. Furthermore, with a dataset that may not have been properly cleaned prior to creation, we have instances where Grounding DINO identified a human as a class in our dataset.

We also observed that shelves with a large number of bottles were left unannotated: while there were many bottles to annotate, all were missed. In other instances with close-ups of shelves with fewer bottles, Grounding DINO was able to identify some bottles, but not all.

For this particular use case, Grounding DINO could serve as a first pass for close-up images, allowing you to label those quickly. But, Grounding DINO could not fully annotate the dataset.

We also observed that the class “empty shelf space” could not be successfully identified, despite several instances of empty shelf spaces. This is likely because Grounding DINO has not encoded this abstract concept; while something may make sense to a human, Grounding DINO may not be able to interpret it.

In conclusion, abstract prompts that do not map closely to a specific object (i.e. “empty shelf space”) are difficult for foundation models to understand.

Safety Cones

Figure 2.0 shows the results of running inference on the Safety Cone dataset with one class: “safety cone”. Safety cones are placed in a range of different environments in the images on which inference was run, including on roads, next to crossing marks, in front of vehicles, on grass, and in the hand of a person. Across these environments, Grounding DINO was able to successfully identify safety cones.

TACO: Trash Annotations in Context (one class)

We ran two experiments on the TACO dataset, owing to the wide range of classes in the official TACO taxonomy (60 total classes):

- Using “trash” to detect general trash, without any specificity as to what type of trash was identified, and;

- Using all high-level classes in the TACO taxonomy.

In this section, we talk through the first experiment using “trash” as a general class for labeling our dataset.

Figure 3.0 shows the results of running inference on the TACO dataset with one class: “trash”. This class has the label “0”. Grounding DINO successfully identified many items of trash, including bottles, cups, lids, and plastic.

But, in some cases, Grounding DINO was unable to identify trash. For instance, in the fourth and eighth images, specific items of trash were missing.

This experiment shows that Grounding DINO is capable of annotating abstract classes (i.e. “trash”), but may not do so with complete accuracy.

The TACO dataset contains 60 classes, but for this example we ran inference on one class to show the variance in potential prompts. For instance, the “trash” prompt could be used for a general trash object identification model.

TACO: Trash Annotations in Context (all high-level classes)

Figure 3.0 shows TACO annotating trash with all of the high-level labels in the taxonomy. This approach yields significantly better results than giving a single prompt (“trash”). Grounding DINO has successfully identified a range of objects, and drawn tight bounding boxes around each of them.

The containers in 000062_JPG, for instance, were not identified with the prompt “trash”. Using each high-level label in the TACO taxonomy was enough to successfully identify each of these objects. This shows that taxonomy does matter: while Grounding DINO could identify the abstract class “trash”, it was able to achieve better performance as measured by a qualitative evaluation of the above images with a range of more specific prompts.

People and Ladders

Figure 4.0 shows the results of running inference on the People and Ladders dataset. The 0 class ID maps to the “person” class and the 1 class ID maps to the “ladder” class. In all images above, Grounding DINO was able to successfully identify both people and ladders, with humans on ladders in varying positions (i.e. the top of the ladder, on a step half-way down).

With that said, Grounding DINO did not identify every class successfully. In the first image in Figure 4.0, a scaffolding was identified as a ladder. In the eighth image, a ladder was missed.

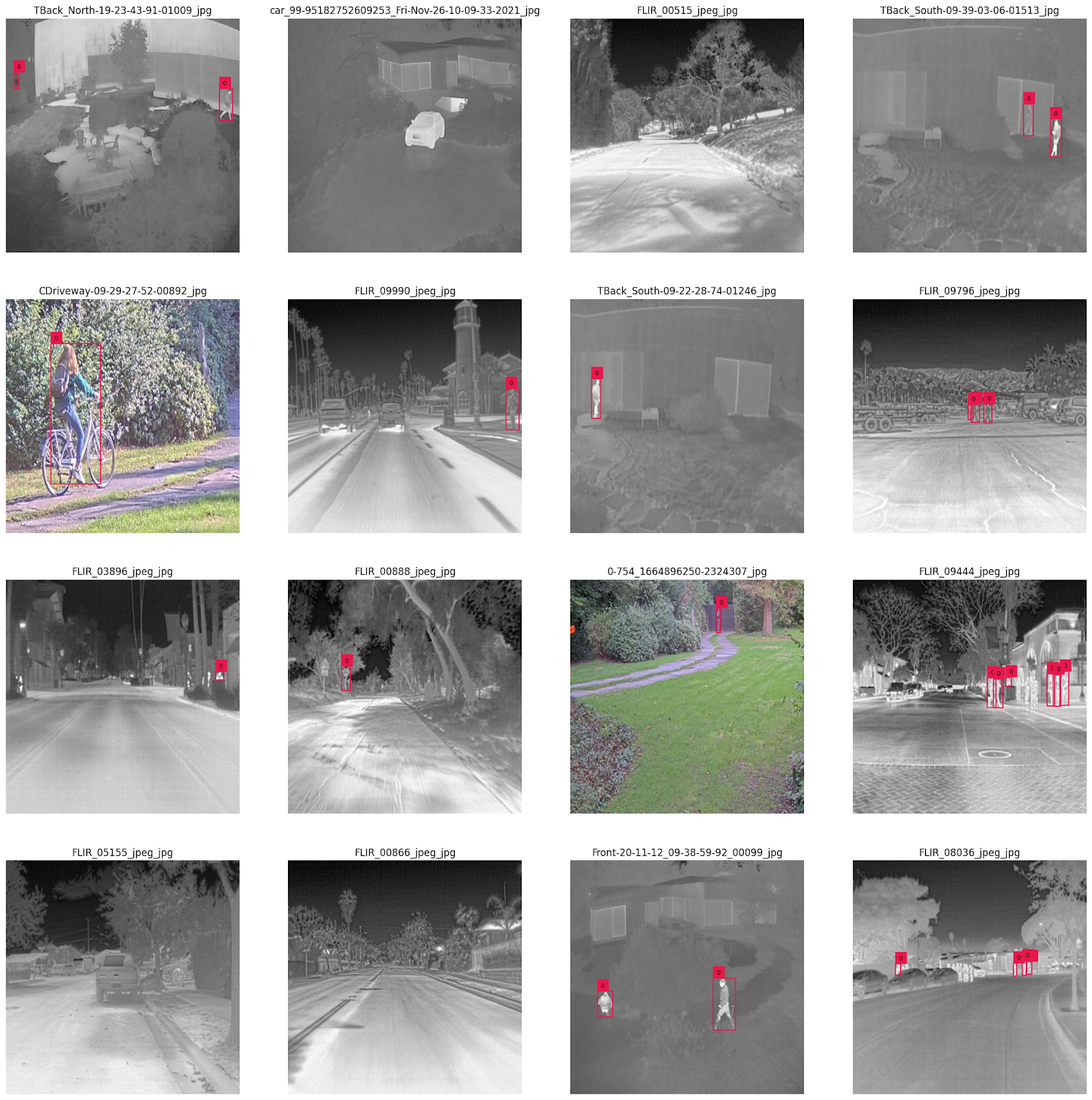

People Detection (Thermal Imagery)

Figure 5.0 shows the results of inference on the people detection model. From the 16 images annotated by Grounding DINO, we can see that the “person” prompt was sufficient to identify people across the images. Grounding DINO was able to respond well to both thermal and color images in the dataset, drawing tight bounding boxes around all the people in the dataset.

Conclusions

Foundation models are able to identify a vast range of objects in different contexts. Above, we demonstrated using Grounding DINO to identify people, bottles, empty shelf spaces in retail coolers, various items of trash, ladders, and people in thermal imagery.

Grounding DINO is one of many examples of foundation models, however. We expect more to be released that are capable of identifying a broader range of objects and understanding more semantics in the prompts given to find objects.

Foundation models can perform as well as humans in specific datasets, such as was the case in the Safety Cones and People and Ladders examples. However, there are limitations: Grounding DINO struggled to identify a few objects, such as bottles in well-stocked retail coolers.

We recommend following a similar approach that we took in this article to evaluate whether foundation models can help with your labeling process. If a foundation model can label 50% of the data in your dataset to a high degree of accuracy, you can realize notable cost and time savings.

Our analysis did not cover every realm of possibility. Rather, we chose a few examples in different domains and visual scenarios to start to scratch the surface of understanding the strengths and limitations of Grounding DINO.

To get started with Autodistill, check out the Autodistill GitHub repository and accompanying Quickstart notebook.

Cite this Post

Use the following entry to cite this post in your research:

James Gallagher. (Jun 28, 2023). Comparing AI-Labeled Data to Human-Labeled Data. Roboflow Blog: https://blog.roboflow.com/ai-vs-human-labeled-data/