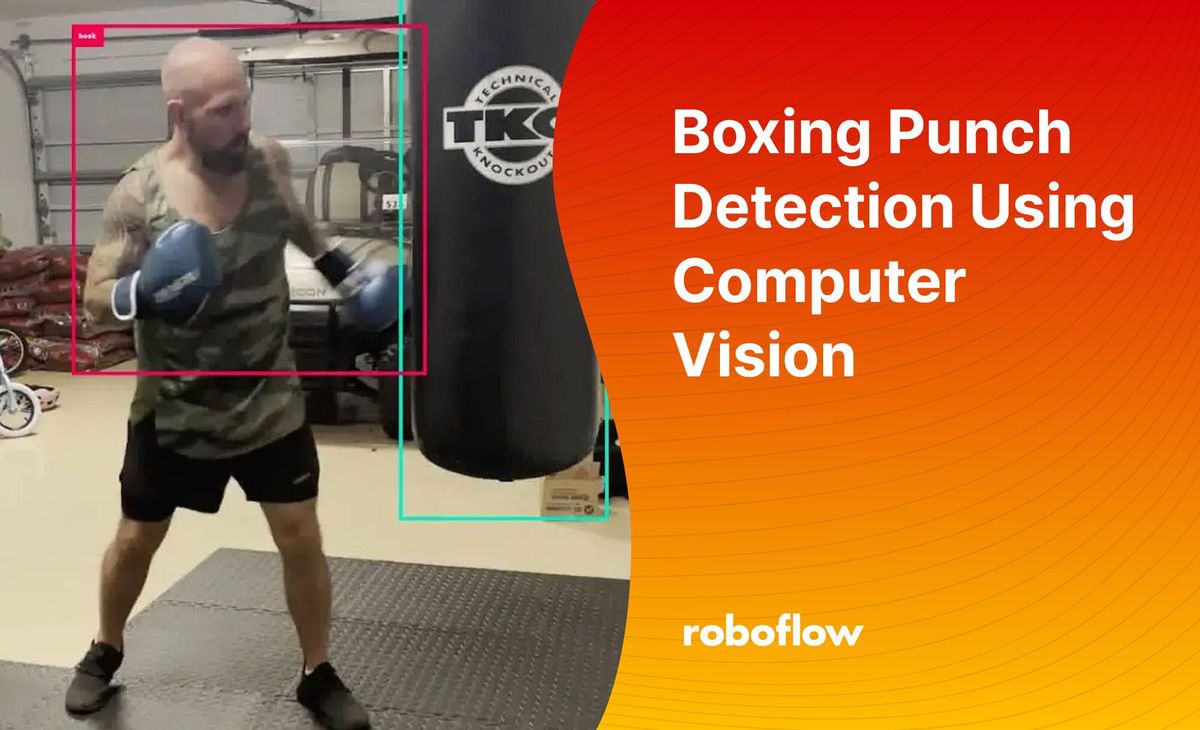

One of the best parts about joining Roboflow is doing a computer vision project in your first 2 weeks. As someone who loves to work out, I wanted to focus my project on a fitness-related use case. I often mix boxing workouts into my routine, and thought it would be a great place to start. I will start out by saying this problem is probably better suited to keypoint detection, but object detection did the job!

I thought it would be great if computer vision could capture the type of punches thrown during a boxing workout round (jab, cross, hook, uppercut) as well as the total punches in that round, so a boxer can see what they need to work on more.

For example, if you want to get a better jab and are only throwing 5 jabs in a 3-minute round, this info should tell you that you need to throw more jabs!

Also, knowing the total punches allows you to up your round intensity. If you threw 50 punches in a round, you can challenge yourself to throw 60 the next round, and in turn get a better sweat in!

Creating and Annotating the Dataset from Images and Videos

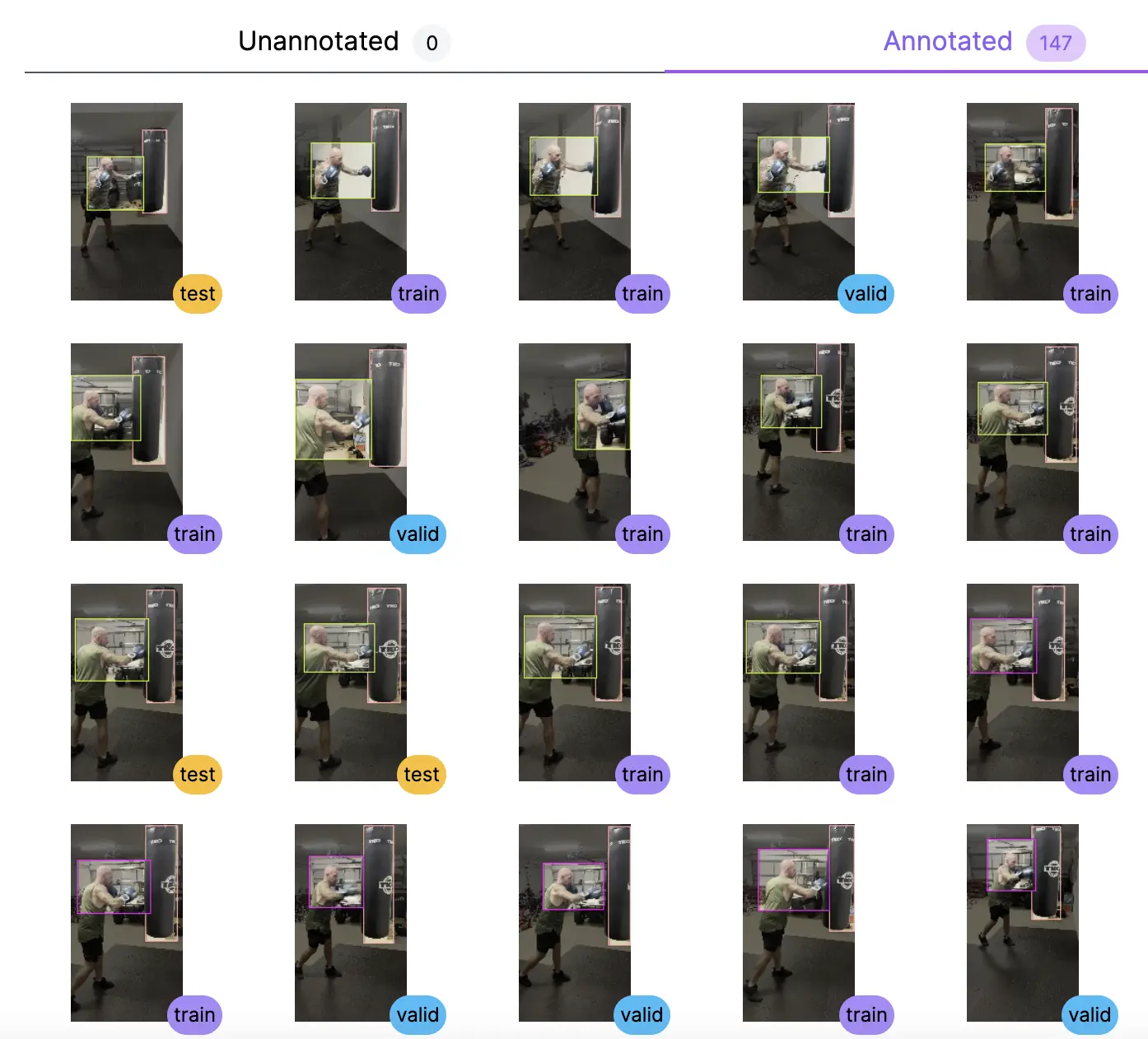

To get started, we took video from a 12-minute boxing session to gather enough data to train a model. The next step was uploading the video into Roboflow and splitting it into frames at 1 frame per second, with images of poses in a set position to better train the model.

Once the data was loaded into Roboflow, we annotated the images with bounding boxes for my 4 classes: jab, cross, hook and uppercut.
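To make the 1 frame per second sampling concrete, here is a minimal sketch of which frame indices such a split would keep. Roboflow does this for you on upload, so this is only an illustration. The function name `sample_frame_indices` and the 30 fps source frame rate are assumptions; the post does not state the camera's frame rate.

```python
from typing import List


def sample_frame_indices(total_frames: int, video_fps: float,
                         sample_fps: float = 1.0) -> List[int]:
    """Return the indices of the frames kept when sampling at sample_fps.

    Hypothetical helper for illustration; not part of Roboflow's tooling.
    """
    if video_fps <= 0 or sample_fps <= 0:
        raise ValueError("frame rates must be positive")
    duration_s = total_frames / video_fps
    n_samples = int(duration_s * sample_fps)
    step = video_fps / sample_fps  # source frames between two kept frames
    return [min(total_frames - 1, round(i * step)) for i in range(n_samples)]


# A 12-minute video, assuming 30 fps: 12 * 60 * 30 = 21600 frames.
indices = sample_frame_indices(total_frames=12 * 60 * 30, video_fps=30.0)
print(len(indices), indices[:3])
```

Under that assumption, a 12-minute session gives 720 candidate frames, of which only a subset would end up annotated.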

In total, there were 239 annotated images in the final dataset. This was extremely easy to do within the Roboflow platform.

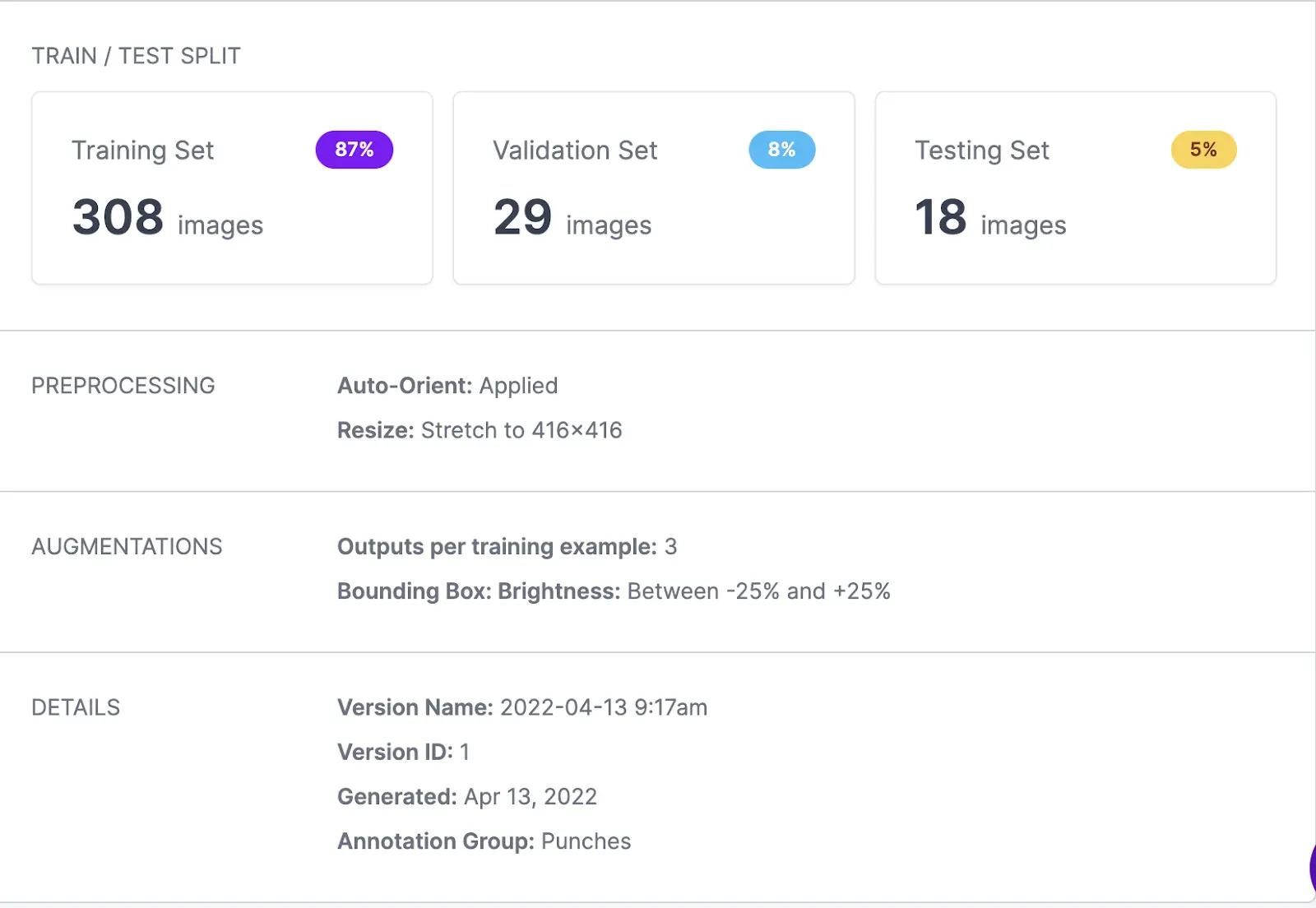

In the first model version, we applied some basic pre-processing and augmentations, including a brightness adjustment, and ended up with 355 total images for my model.

Iterating on Model Training and Performance

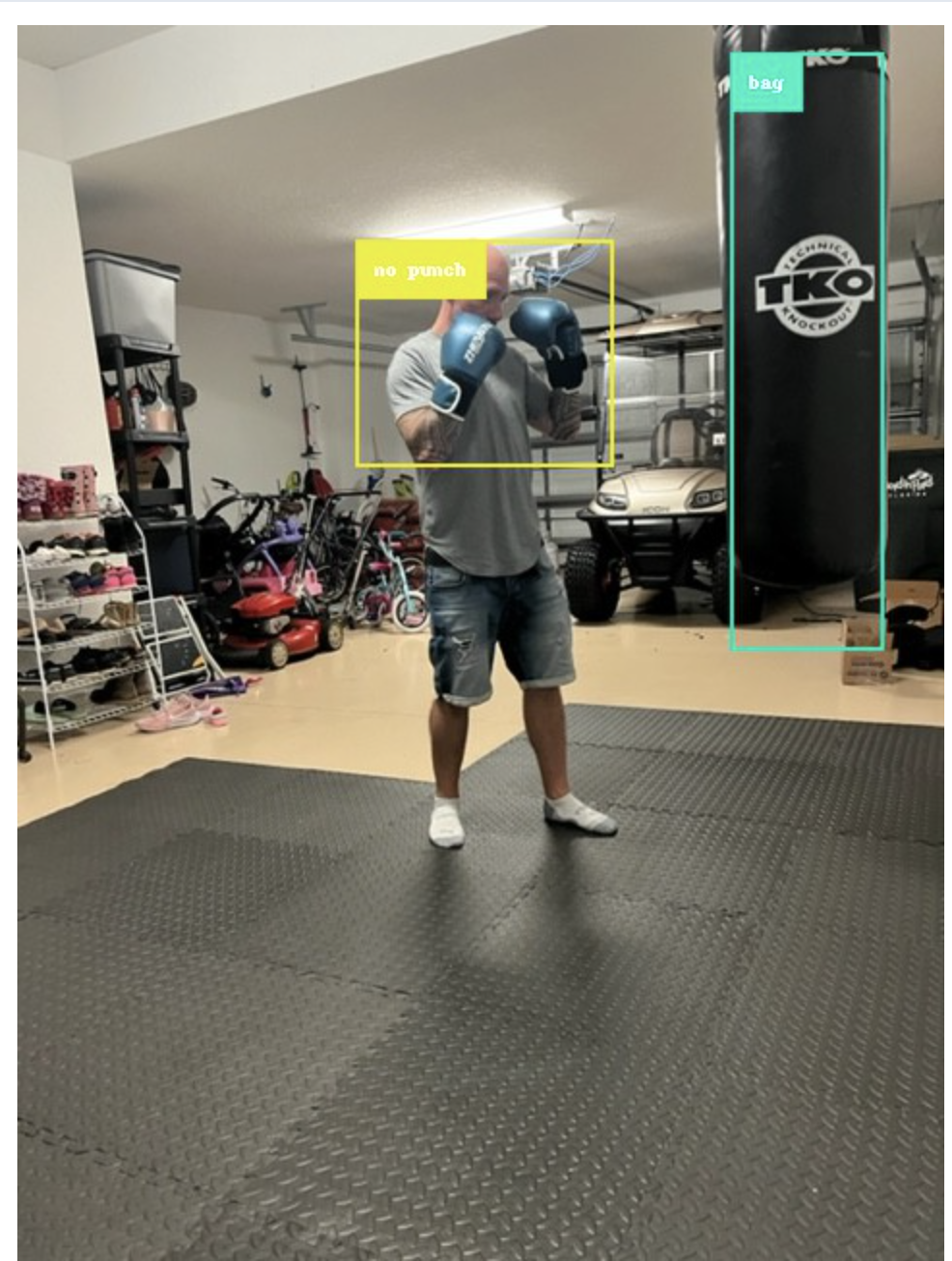

Initially, the first model was not performing as well as desired, so I went back (with our co-founder Brad's help) and decided to also add a class for ‘no punch’. This would identify when I was in a still position with no punch being thrown.

In addition to the new class, we added a bounding box around the punching bag to help further separate stances and poses. After further review, there was also room to tighten up the bounding boxes for the previously annotated punch types and to add more images overall to strengthen the dataset.

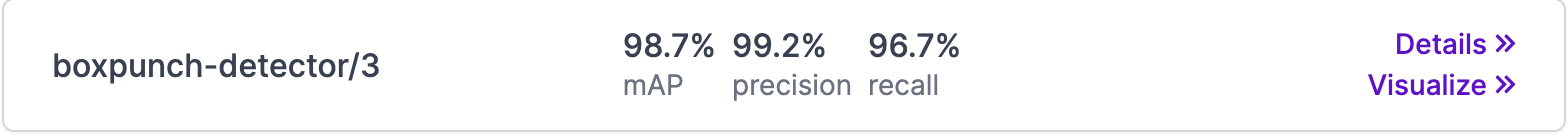

After a mix-up on the 2nd version, the 3rd version is where the model performed much better.

Correct Prediction!

Although there is lots of room for improvement, the model is doing fairly well!

Setting Up the Video Inference Feed with Roboflow

The next step was to use Roboflow video inference to feed an entire boxing round into my model and output the results into a new video. It’s easy to do by following along with this repo. I added some parameters on top of the default values:

ROBOFLOW_KEY=xxxxxx ./infer.sh boxpunch-detector/3 boxpunch_2.MOV.mp4 video_out_4.mov --fps_out 3 --parallel 16 --labels

And, voila, the end result. Below is a still capture of the input video 🎉

Ideas for Further Improvement of the Punch Detection Model

All in all, the results work pretty well. The model could be improved by adding more data, which would allow it to learn the different types of punches more accurately. In boxing, hooks can look like crosses and a cross can look like a jab, so it isn't an easy challenge to solve.

The model seems to struggle with uppercuts and hooks, even though uppercuts seem like they should be the easiest punch to classify. To further improve the model, it might make sense to do more pre-processing and augmentations.
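One way to pin down which punches get mixed up is to tally predictions against hand-labeled ground truth for a set of frames. The sketch below is a generic confusion count in plain Python, not something from the Roboflow tooling. The helper names are hypothetical, and the sample pairs are made up purely for illustration; they are not results from this model.

```python
from collections import Counter, defaultdict


def confusion_counts(pairs):
    """Tally (true_label, predicted_label) pairs into a nested count table."""
    table = defaultdict(Counter)
    for true_label, predicted_label in pairs:
        table[true_label][predicted_label] += 1
    return table


def per_class_recall(table):
    """Fraction of each true class that the model labeled correctly."""
    return {
        true_label: row[true_label] / sum(row.values())
        for true_label, row in table.items()
    }


# Made-up example data, NOT measurements from the post.
example_pairs = [
    ("hook", "hook"), ("hook", "cross"),
    ("uppercut", "uppercut"), ("uppercut", "hook"), ("uppercut", "hook"),
    ("jab", "jab"),
]
table = confusion_counts(example_pairs)
print(per_class_recall(table))
```

Classes with low recall, and the labels they get confused with, would be the first place to add images or tighten boxes.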

The next step is to write a script that calculates the results: number of total punches, number of jabs thrown per round, etc. After all, this is the true value of a use case like this.
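As a rough idea of what that script could look like, here is a minimal sketch that counts punches from a sequence of per-frame predicted labels. It assumes you already have one label per frame for a round, and that the class names are the strings shown; the real class names in the project may differ. The function `count_punches` is hypothetical. A punch is counted once each time the label changes to a punch class, so consecutive frames of the same punch are not double counted.

```python
from collections import Counter
from typing import Dict, Iterable, Optional

# Assumed class names; anything else (e.g. "no punch", None) is not a punch.
PUNCH_CLASSES = {"jab", "cross", "hook", "uppercut"}


def count_punches(frame_labels: Iterable[Optional[str]]) -> Dict[str, int]:
    """Count punches per type, collapsing runs of the same label into one."""
    counts = Counter()
    previous = None  # punch label of the previous frame, if any
    for label in frame_labels:
        is_punch = label in PUNCH_CLASSES
        if is_punch and label != previous:
            counts[label] += 1  # a new punch starts on this frame
        previous = label if is_punch else None
    result = {name: counts[name] for name in sorted(PUNCH_CLASSES)}
    result["total"] = sum(counts.values())
    return result


# Made-up labels for a few frames of a round.
labels = ["no punch", "jab", "jab", "no punch", "cross",
          "hook", "hook", None, "jab"]
print(count_punches(labels))
```

One limitation of this approach: two punches of the same type thrown back to back with no other label in between would be counted as one, so the sampling rate of the predictions matters.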

This was a lot of fun and I hope it's of interest to you 😄 Thanks to everyone who helped me along the way!

Cite this Post

Use the following entry to cite this post in your research:

Mark McQuade. (May 17, 2022). Boxing Punch Detection Using Computer Vision. Roboflow Blog: https://blog.roboflow.com/boxing-punch-detection-computer-vision/