This post was contributed to the Roboflow blog by Shai Nisan, Ph.D.

It all started with a small request from my 8-year-old son, a chess player, to make an app that converts images of real-life chess games into a digital format. He could then save a game to continue later on his own device, share it with friends, or get move suggestions from chess engines such as Stockfish. I decided to make this application a reality.

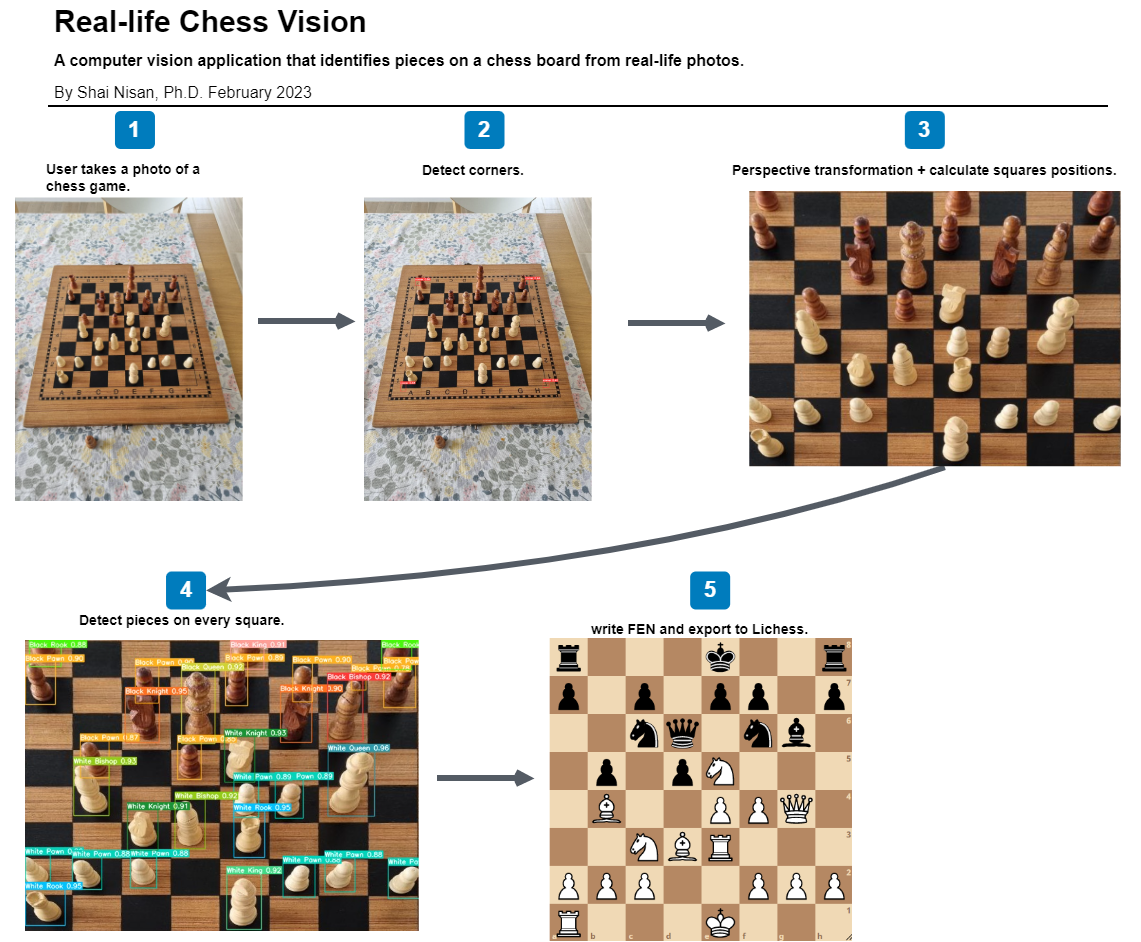

In this post, I’ll go through the journey of building an application that uses computer vision to identify pieces on a chess board. I then create a digital representation of the chess board for which Forsyth–Edwards Notation (FEN) data can be written. This data can then be exported into tools like Lichess to digitally represent the physical chess board in the photo taken.

The full code associated with this project is published on my GitHub account.

Understanding the Problem

Let's begin with a clear problem statement:

As I quickly found out, chessboard recognition is a popular problem in computer vision, machine learning, and pattern recognition. Many works have addressed this topic over the years; the earliest one I found was published in 1997. It was fascinating to see how researchers have since used the technologies and algorithms of their time to address this problem.

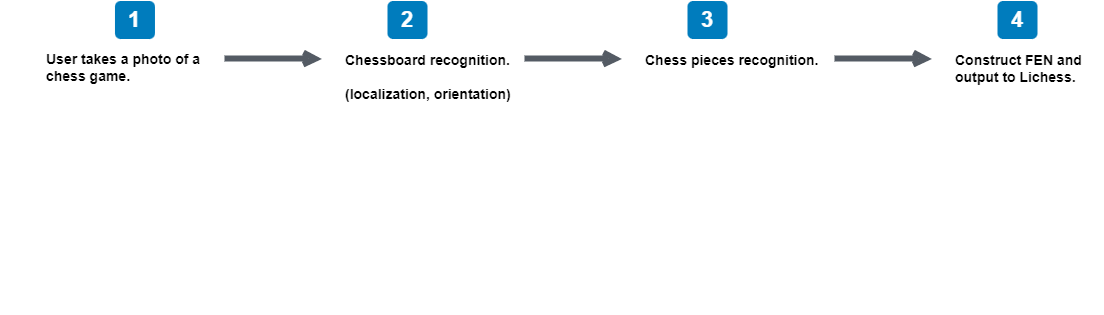

Reading previous work helped me realize that the chess vision problem can be broken down into two main sub-problems:

- Chessboard recognition: Identify a chessboard within an image and identify its characteristics: position, orientation, and the location of the squares.

- Chess piece recognition: Detect the pieces on the board, classify them, and localize them on the squares.

Here's a sketch of the work process that I needed to figure out:

FEN is the standard notation to describe the positions of a chess game. FEN data is easy for computers to interpret.
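To make the notation concrete, here is a minimal sketch of how the piece-placement field of a FEN string is built. It lists the ranks from 8 down to 1, separated by `/`, with uppercase letters for white pieces, lowercase for black, and a digit for each run of consecutive empty squares. The function name and the grid layout are assumptions made for this illustration and are not taken from the project's code. A full FEN string has five more fields (side to move, castling rights, and so on), which are left out here.

```python
def grid_to_fen_placement(grid):
    """Encode an 8x8 grid (rank 8 first) as the piece-placement field of FEN.

    Each cell holds a piece letter (uppercase = white, lowercase = black)
    or None for an empty square. Hypothetical helper for illustration only.
    """
    ranks = []
    for row in grid:
        encoded, empty_run = "", 0
        for cell in row:
            if cell is None:
                empty_run += 1  # count consecutive empty squares
            else:
                if empty_run:
                    encoded += str(empty_run)
                    empty_run = 0
                encoded += cell
        if empty_run:
            encoded += str(empty_run)
        ranks.append(encoded)
    return "/".join(ranks)


# The standard starting position, listed from rank 8 down to rank 1.
start = (
    [list("rnbqkbnr"), list("pppppppp")]
    + [[None] * 8 for _ in range(4)]
    + [list("PPPPPPPP"), list("RNBQKBNR")]
)
placement = grid_to_fen_placement(start)
print(placement)  # rnbqkbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR
```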

Solving the Chessboard Recognition Problem

I found three main approaches to recognize a chess board and its characteristics:

- Corner-based approaches

- Line-based approaches

- Heatmap-based approaches

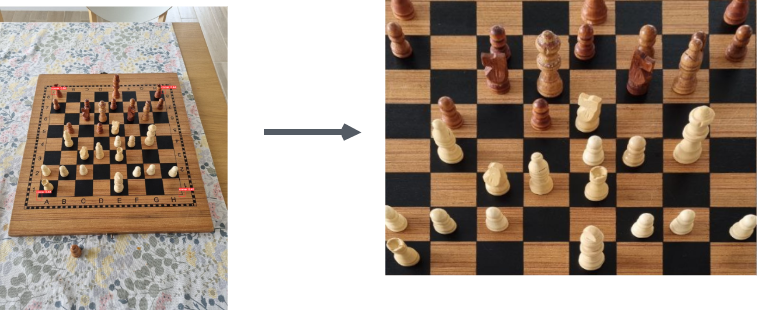

After trying these approaches, I found that my own version of the corner-based approach worked best with real-life images. I used YOLOv8 to detect the chessboard corners. Once I have the corners' coordinates, I can calculate the location of the rest of the board.

To detect the corners, I created a small dataset of around 50 images of chessboards and quickly annotated their corners using Roboflow's platform. Then I used Roboflow to quickly augment the dataset to 3x its original size. I trained a YOLOv8 nano model to detect chessboard corners:

```python
from ultralytics import YOLO

# Load the pretrained YOLOv8 nano model.
model = YOLO('yolov8n.pt')

# Train on the annotated corner dataset.
results = model.train(
    data='data.yaml',
    imgsz=640,
    epochs=100,
    batch=8,
    name='yolov8n_corners')

# Load the best weights from training and run inference on a test image.
model_trained = YOLO("runs/detect/yolov8n_corners/weights/best.pt")
results = model_trained.predict(source='1.jpgresized.jpg', line_thickness=1, conf=0.25, save_txt=True, save=True)
```

In testing, my approach was not affected by lighting conditions, the capture angle, or the type of chessboard. This is partly thanks to the augmentations, which helped create a more representative dataset.

It was amazing to see how this simple solution outperformed the more sophisticated approaches (at least in my case). I got great results in detecting corners and could continue to the next phase.
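A detector returns a bounding box for each corner, whereas the geometry that follows works on single points. The post does not say how a point is derived from each box, so the sketch below shows one plausible option: taking the center of each box. The helper name `box_centers` and the sample boxes are assumptions for illustration. With Ultralytics, an array like this could be read from `results[0].boxes.xyxy`.

```python
import numpy as np


def box_centers(xyxy):
    """Return the (x, y) center of each box given as (x1, y1, x2, y2).

    Hypothetical helper: assumes each detected box is roughly centered
    on the chessboard corner it marks.
    """
    xyxy = np.asarray(xyxy, dtype="float32")
    cx = (xyxy[:, 0] + xyxy[:, 2]) / 2
    cy = (xyxy[:, 1] + xyxy[:, 3]) / 2
    return np.column_stack([cx, cy])


# Four made-up corner detections in pixel coordinates.
boxes = [
    [110, 70, 130, 90],
    [510, 90, 530, 110],
    [550, 460, 570, 480],
    [80, 430, 100, 450],
]
corner_points = box_centers(boxes)  # shape (4, 2), one point per corner
```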

After detecting the corners, I wanted to rearrange them in a fixed order. I found a small function that applies simple logic to this problem. The top-left point will have the smallest sum of x and y, whereas the bottom-right point will have the largest sum. The top-right point will have the smallest difference (y − x), whereas the bottom-left will have the largest difference. Here is the logic I used:

```python
import numpy as np

def order_points(pts):
    # Order a list of 4 coordinates:
    # 0: top-left,
    # 1: top-right,
    # 2: bottom-right,
    # 3: bottom-left
    rect = np.zeros((4, 2), dtype="float32")

    # Top-left has the smallest x + y; bottom-right has the largest.
    s = pts.sum(axis=1)
    rect[0] = pts[np.argmin(s)]
    rect[2] = pts[np.argmax(s)]

    # Top-right has the smallest y - x; bottom-left has the largest.
    diff = np.diff(pts, axis=1)
    rect[1] = pts[np.argmin(diff)]
    rect[3] = pts[np.argmax(diff)]

    return rect
```

Now that I have the coordinates of the four corners of the chessboard in a fixed order, I can compute the perspective transform matrix using OpenCV. Applying this transform warps the image so that the board appears straight-on, as if viewed from directly above. This is useful for calculating the location of all the squares on the chessboard.

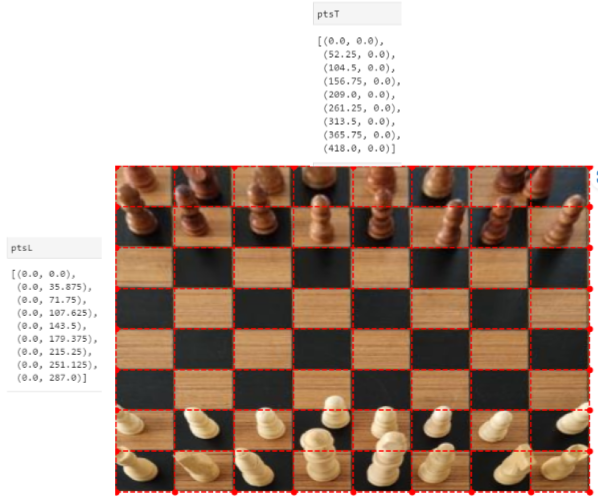
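To show what the perspective transform matrix does, here is a small NumPy-only sketch that solves for the 3x3 matrix mapping four source points onto four target points. This is the same quantity that OpenCV's `cv2.getPerspectiveTransform` computes, but it is not the author's code. The corner coordinates, the 800-pixel output size, and the function names are assumptions chosen for illustration.

```python
import numpy as np


def perspective_matrix(src, dst):
    """Solve for the 3x3 matrix H that maps each src point to its dst point."""
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u = (h11*x + h12*y + h13) / (h31*x + h32*y + 1), same form for v.
        rows.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        rows.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        rhs.extend([u, v])
    h = np.linalg.solve(np.array(rows, dtype=float), np.array(rhs, dtype=float))
    return np.append(h, 1.0).reshape(3, 3)


def apply_perspective(H, pts):
    """Map 2D points through H using homogeneous coordinates."""
    pts = np.asarray(pts, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))])
    mapped = homog @ H.T
    return mapped[:, :2] / mapped[:, 2:3]


# Made-up corners ordered top-left, top-right, bottom-right, bottom-left.
corners = np.array([[120, 80], [520, 100], [560, 470], [90, 440]], dtype=float)
side = 800  # assumed size of the straightened board image, in pixels
target = np.array([[0, 0], [side, 0], [side, side], [0, side]], dtype=float)

H = perspective_matrix(corners, target)
warped_corners = apply_perspective(H, corners)  # lands on the target square
```

With OpenCV, the same matrix could then be passed to `cv2.warpPerspective` to produce the straightened image itself.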

At this stage I can easily calculate the coordinates of all the squares on the board by dividing the top edge of the image into 8 equal parts, doing the same for the left edge, and then connecting the resulting points into lines.

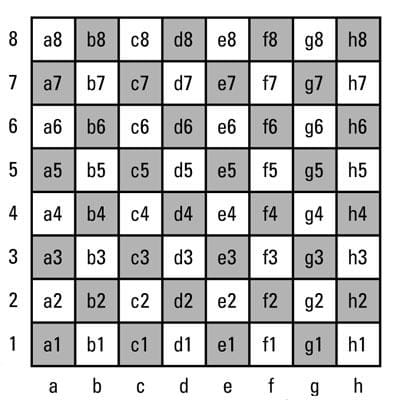
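The division into squares can be sketched in a few lines. This is a minimal illustration, assuming the straightened board fills a square image of `side` pixels with a8 in the top-left corner. The function name `board_squares` and the default size are hypothetical.

```python
def board_squares(side=800):
    """Return {square name: four (x, y) corners} for a straightened board.

    Corners are listed top-left, top-right, bottom-right, bottom-left.
    Assumes a8 is the top-left square of the image.
    """
    step = side / 8
    squares = {}
    for row in range(8):        # row 0 is the top of the image, i.e. rank 8
        for col in range(8):    # col 0 is the left of the image, i.e. file a
            name = "abcdefgh"[col] + str(8 - row)
            x0, x1 = col * step, (col + 1) * step
            y0, y1 = row * step, (row + 1) * step
            squares[name] = [(x0, y0), (x1, y0), (x1, y1), (x0, y1)]
    return squares


squares = board_squares()
print(squares["a8"])  # the top-left square of the image
```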

Then, we can map each square to its corresponding FEN data. This illustration shows how each position on a chess board is described in FEN:

Each square can then be calculated using the same approach:

```python
a8 = np.array([[xA, y9], [xB, y9], [xB, y8], [xA, y8]])
a7 = np.array([[xA, y8], [xB, y8], [xB, y7], [xA, y7]])
# …
```

Here, xA, xB, y9, y8, etc. are defined as:

```python
xA = ptsT[0][0]
xB = ptsT[1][0]
y9 = ptsL[0][1]
y8 = ptsL[1][1]
# …
```

Solving the Chess Piece Recognition Problem

Now that I have the coordinates of all the squares on the board, I can go on to classify the chess pieces. Then I can connect each detection by its coordinates to the corresponding square.

I turned again to YOLOv8 and Roboflow, this time annotating a bigger dataset of chess pieces on chessboards that were transformed using the perspective transform matrix above.

Roboflow makes this stage easy. After an hour or so I had an annotated dataset, which Roboflow also augmented automatically, and I could start training another YOLOv8 model on the chess piece detection problem.

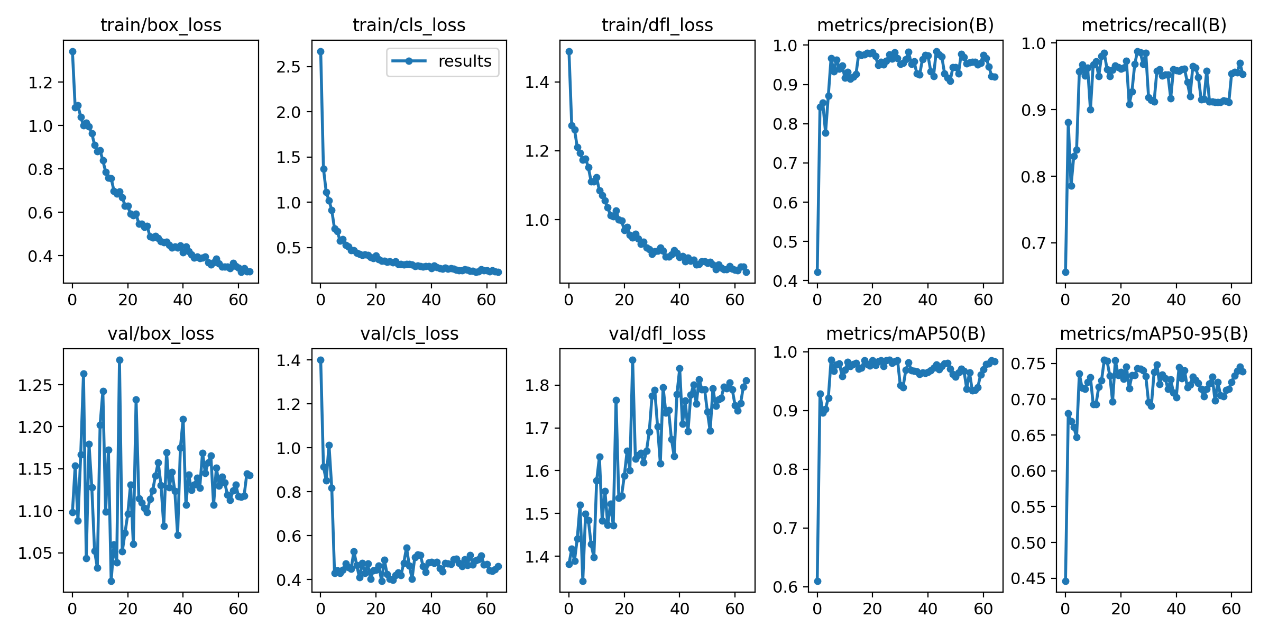

YOLOv8 does an excellent job detecting chess pieces! The following charts show performance of the model during training:

Now I can connect all the pieces together. I have the coordinates of all the squares on the board, and I have the coordinates of the detections of the pieces on the board. Using the "shapely" package, I wrote a simple function that calculates the intersection over union (IoU) between the predicted bounding box and each square on the board.

Then I wrote a function that connects each detection to the correct square – this would be the one that has the largest IoU with the detection's bounding box. For tall pieces such as the Queen or the King, I considered only the lower half of the bounding box.
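The matching rule can be illustrated with a short, dependency-free sketch. It is not the author's implementation: it uses axis-aligned boxes `(x1, y1, x2, y2)` instead of shapely polygons, which should be adequate once the board has been straightened. The function names, the label set in `TALL_PIECES`, and the coordinates are assumptions.

```python
def box_iou(a, b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


TALL_PIECES = {"K", "Q", "k", "q"}  # assumed class labels for kings and queens


def lower_half(box):
    """Keep only the bottom half of a box (image y grows downward)."""
    x1, y1, x2, y2 = box
    return (x1, (y1 + y2) / 2, x2, y2)


def assign_to_square(detection, squares):
    """Return the name of the square with the largest IoU for one detection."""
    label, box = detection
    if label in TALL_PIECES:
        box = lower_half(box)
    return max(squares, key=lambda name: box_iou(box, squares[name]))


# Two made-up squares and a tall box that stands on e1 but reaches into e2.
squares = {"e1": (400, 700, 500, 800), "e2": (400, 600, 500, 700)}
tall_box = (410, 560, 490, 790)

print(assign_to_square(("K", tall_box), squares))  # lower half only -> e1
print(assign_to_square(("P", tall_box), squares))  # full box -> e2
```

In this example the full box overlaps e2 more than e1, so the lower-half rule is what places the king on the square it actually stands on.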

```python
from shapely.geometry import Polygon

def calculate_iou(box_1, box_2):
    # Each box is a sequence of (x, y) corner points.
    poly_1 = Polygon(box_1)
    poly_2 = Polygon(box_2)
    # IoU = area of overlap / area of union.
    iou = poly_1.intersection(poly_2).area / poly_1.union(poly_2).area
    return iou
```

At this stage, I could write the FEN of the board with a simple script that orders the connected detections and squares into the correct format and appends the result to the Lichess analysis URL. With this URL, the user can enjoy all the magic that computer chess has to offer: share games, save for later, analyze, get suggestions, etc.

```python
board_FEN = []
corrected_FEN = []
complete_board_FEN = []

# Walk the board rank by rank and look up the piece on each square.
for line in FEN_annotation:
    line_to_FEN = []
    for square in line:
        piece_on_square = connect_square_to_detection(detections, square)
        line_to_FEN.append(piece_on_square)
    # Mark each empty square with '1'.
    corrected_FEN = [i.replace('empty', '1') for i in line_to_FEN]
    print(corrected_FEN)
    board_FEN.append(corrected_FEN)

# Join the squares of each rank, then join the ranks with '/'.
complete_board_FEN = [''.join(line) for line in board_FEN]
to_FEN = '/'.join(complete_board_FEN)
print("https://lichess.org/analysis/" + to_FEN)
```

The described method performs extremely well and achieves an accuracy of over 99.5% for detecting chessboard corners and 99% for chess piece recognition. This approach is simple, fast, and lightweight while avoiding a time- and resource-consuming training process. It can be easily generalized to other kinds of chessboards and chess pieces.

However, the best confirmation of this method’s usefulness is that my son actually uses it with his friends 😊

Please feel free to contact me through LinkedIn with ideas for future iterations of this project.

Cite this Post

Use the following entry to cite this post in your research:

James Gallagher. (Mar 10, 2023). Represent Chess Boards Digitally with Computer Vision. Roboflow Blog: https://blog.roboflow.com/chess-boards/