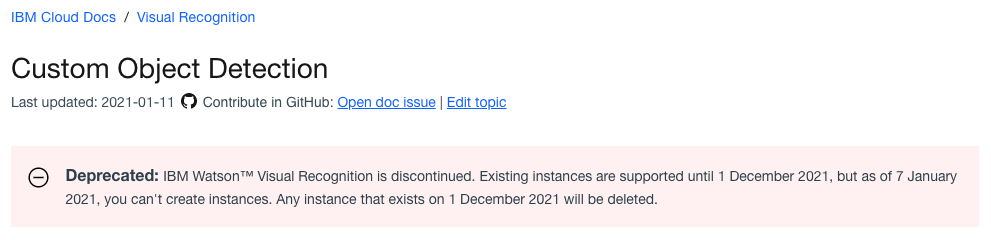

IBM recently announced they are shutting down IBM Visual Recognition, their product for creating custom computer vision models for classification and object detection. No new instances can be created, and all current instances will be fully shut down in December 2021. This has left many looking for IBM Visual Recognition alternatives.

(Note: the below post also serves as a guide to IBM Maximo Visual Inspection alternatives.)

Below, we show how to download your data from IBM Visual Recognition.

We have also included a "buying guide" on how to compare IBM Visual Recognition with other products, including Roboflow, so you can pick up right where you left off with your computer vision projects.

If you prefer video content, subscribe to our YouTube channel.

How to Migrate from IBM Visual Recognition to a New Platform

To ensure continuity in your computer vision projects, you'll want to export as much of your progress from IBM as possible. Ideally, this means getting at least your data (images and annotations) to a new platform so you can pick up where you left off.

There are a few different methods of exporting your data from IBM Watson.

Option One: How to Export Data from IBM Visual Recognition

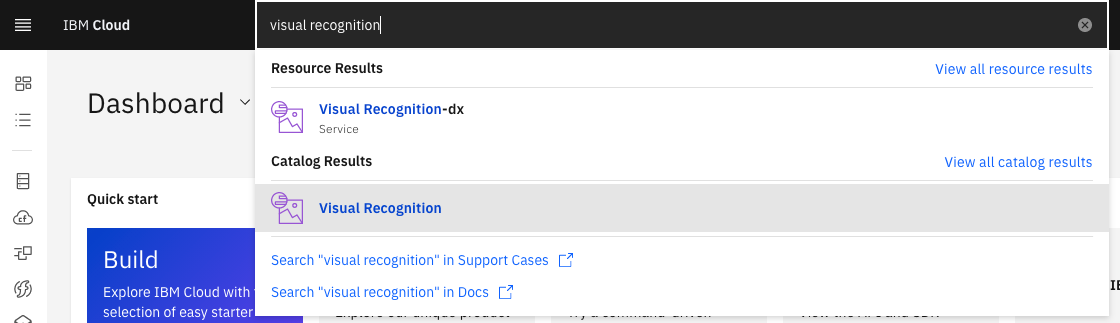

To export data from IBM Visual Recognition, first log in to the IBM Developer Console to access your project: https://cloud.ibm.com/

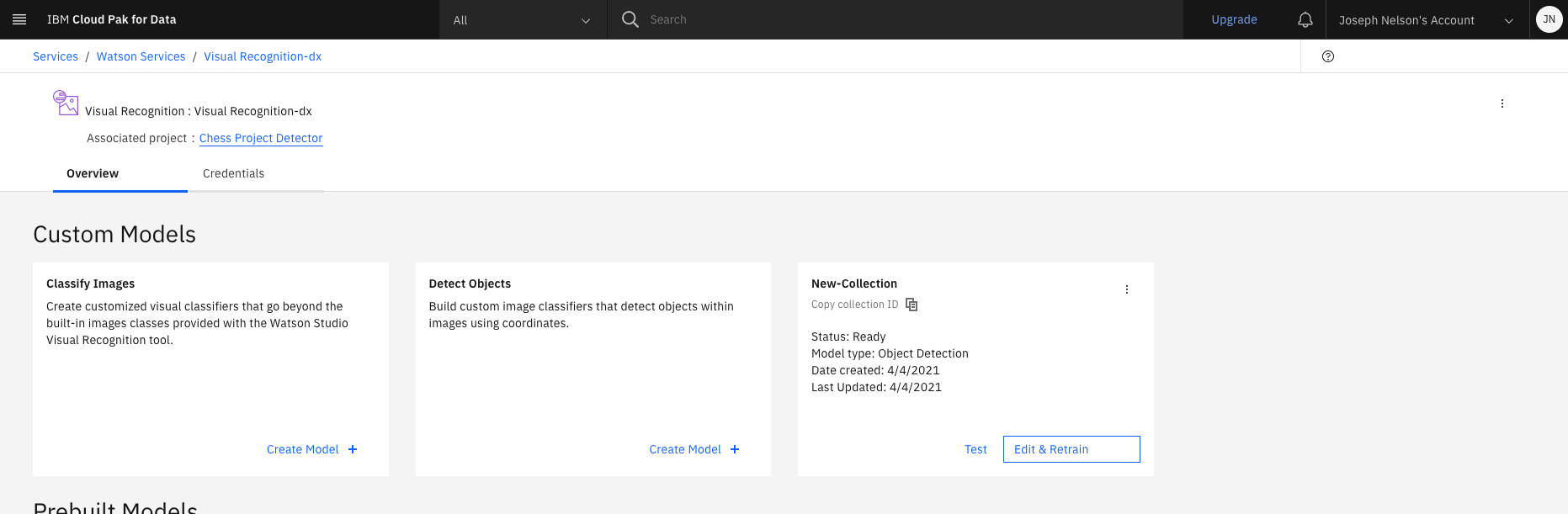

Next, select your current Visual Recognition project. For me, that would be "Visual Recognition-dx" in the screenshot below.

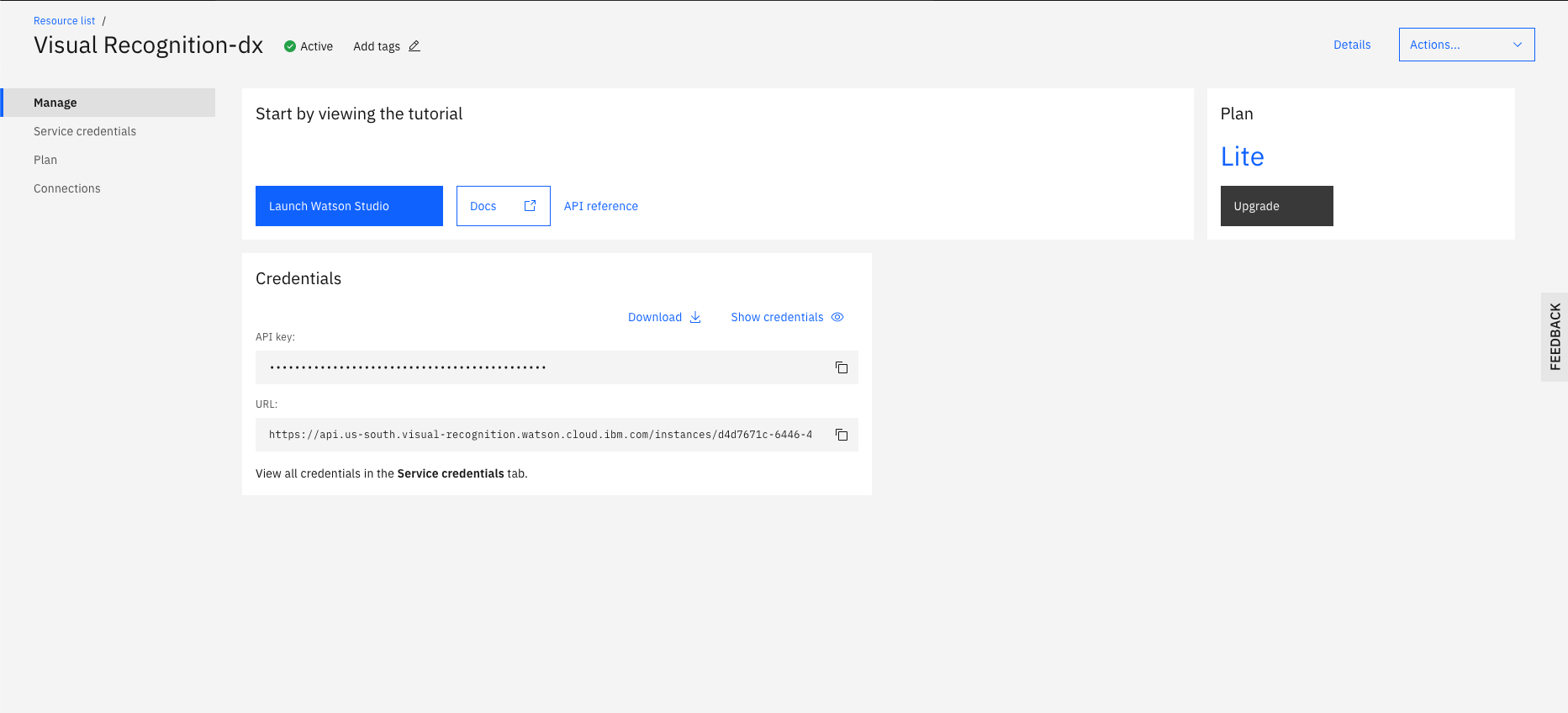

Then, select "Launch Watson Studio."

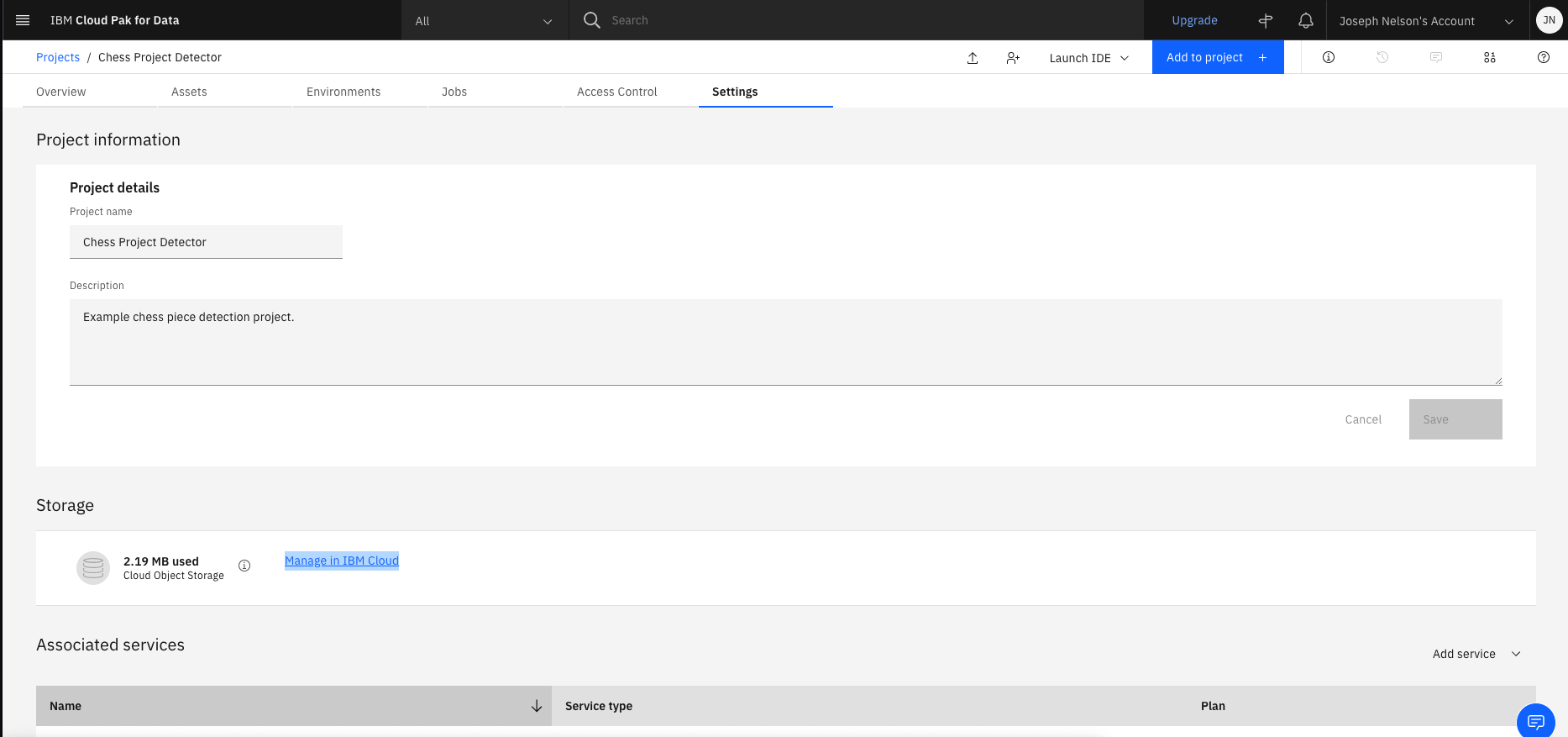

From here, you should see the name of your project. Mine is "Chess Project Detector." Click that.

This should take you to your project's settings page. Near the middle on the bottom, you should see, "Manage in IBM Cloud." Click that.

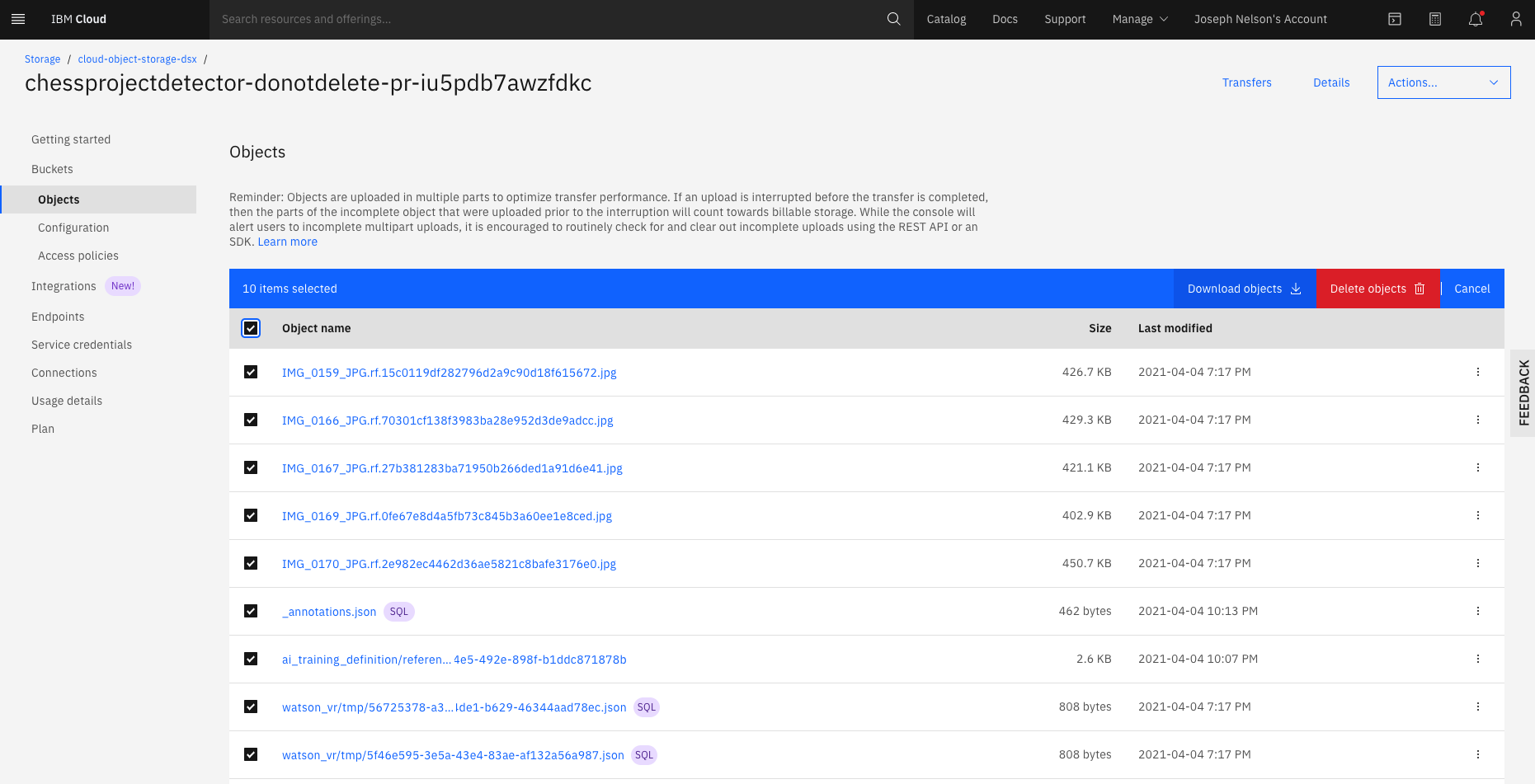

From here, you should see the name of your project. (Mine was "chessprojectdetector-donotdelete-pr-iu5pdb7awzfdkc".) Click the name of your project. Then, on the next page, select the checkbox for all files and click "Download Objects."

You can then upload your data to any other tool, like Roboflow: https://docs.roboflow.com/adding-data#uploading-data-with-the-web-user-interface
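Before uploading, it can help to check that the download actually contains what you expect. The following is a minimal sketch, not part of any IBM or Roboflow tooling: `summarize_download` is a hypothetical helper, and the list of image extensions is an assumption you may need to extend for your own data.

```python
from collections import Counter
from pathlib import Path

# Extensions treated as images. This set is an assumption; extend as needed.
IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".bmp"}


def summarize_download(folder):
    """Count image files, JSON files, and everything else under `folder`."""
    counts = Counter()
    for path in Path(folder).rglob("*"):
        if not path.is_file():
            continue
        suffix = path.suffix.lower()  # compare extensions case-insensitively
        if suffix in IMAGE_EXTENSIONS:
            counts["images"] += 1
        elif suffix == ".json":
            counts["json"] += 1
        else:
            counts["other"] += 1
    return dict(counts)
```

Calling `summarize_download("path/to/downloaded/objects")` returns a dictionary of counts, which you can compare against the number of images you expect before moving to a new tool.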

Option Two: Export from IBM Cloud Annotations

Alternatively, visit https://cloud.annotations.ai/ and select your project. From here, you can download your annotations in any of the selected formats.

Roboflow accepts any of these formats, so you can continue your progress. See: https://roboflow.com/formats/cloud-annotations-json. This enables you to convert IBM annotations to YOLO, YOLOv5, TFRecords, or any other format.
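Roboflow handles this conversion for you, but to illustrate what converting to a YOLO-style format involves, here is a minimal sketch. It assumes a Cloud Annotations-style JSON layout with a `labels` list and an `annotations` mapping from image name to boxes, each box carrying normalized corner coordinates `x`, `y`, `x2`, `y2` and a `label`. That schema is an assumption, as are the function name and the example file name; check your own export before relying on it.

```python
import json


def cloud_annotations_to_yolo(doc):
    """Convert a Cloud Annotations-style dict into YOLO label lines per image.

    Assumed schema (not confirmed by this post): doc["labels"] is a list of
    class names, and doc["annotations"] maps image name -> list of boxes with
    normalized corners x, y, x2, y2 and a "label".
    """
    class_ids = {name: i for i, name in enumerate(doc["labels"])}
    result = {}
    for image_name, boxes in doc["annotations"].items():
        lines = []
        for box in boxes:
            # YOLO stores the box center plus width and height, all normalized.
            width = box["x2"] - box["x"]
            height = box["y2"] - box["y"]
            x_center = box["x"] + width / 2
            y_center = box["y"] + height / 2
            class_id = class_ids[box["label"]]
            lines.append(
                f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"
            )
        result[image_name] = lines
    return result


# Hypothetical example input, for illustration only.
example = json.loads("""
{
  "labels": ["king", "pawn"],
  "annotations": {
    "board1.jpg": [
      {"x": 0.25, "y": 0.5, "x2": 0.75, "y2": 1.0, "label": "pawn"}
    ]
  }
}
""")

yolo_lines = cloud_annotations_to_yolo(example)
```

Each image's list of lines would then be written to a `.txt` file with the same base name as the image, which is the usual YOLO convention.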

IBM Watson vs Roboflow

Before discussing how to migrate from IBM to Roboflow, we lay out criteria that may be helpful for you and your team. We've included comparisons with IBM Watson for computer vision, which apply both to IBM Visual Recognition (deprecated) and IBM Maximo Visual Inspection.

The Importance of an End-to-End Platform and Active Learning

Active learning is a machine learning strategy that incorporates a deliberate (and often model-assisted) approach in the curation of training data. Think about active learning like taking a data-driven (and, well, active) approach in selecting which images will best improve your model's performance. Active learning enables computer vision models to improve with 10x fewer data points – resulting in higher model performance in substantially less time (and less labeling).

Tools that support active learning deliver results substantially faster. As a result, consider using a platform that uses the model you have in production as an active part of your dataset curation and annotation strategy. Active learning requires an end-to-end platform, one that covers dataset curation, organization, annotation, training, and deployment.

While IBM Maximo Visual Inspection and IBM Visual Recognition enabled users to make use of models in labeling data, they did not prioritize active learning in dataset curation. Active learning platforms instead use the same model you have in production as part of dataset curation. For example, imagine your production model sends back to your training dataset only those images it scored below 50 percent confidence, so they can be re-labeled. This, by default, enables rapid improvement in your dataset, and it is how end-to-end tools like Roboflow incorporate active learning.
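The confidence-threshold idea above can be sketched in a few lines. This is an illustration of the general technique only, not Roboflow's implementation: the function name, the shape of `production_predictions`, and the file names are all hypothetical.

```python
def select_for_relabeling(predictions, threshold=0.5):
    """Return image names with at least one detection below `threshold`.

    `predictions` maps image name -> list of detection confidences (0 to 1).
    """
    return [
        image_name
        for image_name, confidences in predictions.items()
        if any(confidence < threshold for confidence in confidences)
    ]


# Hypothetical production output, for illustration only.
production_predictions = {
    "frame_001.jpg": [0.97, 0.91],
    "frame_002.jpg": [0.88, 0.42],
    "frame_003.jpg": [0.75],
}

to_relabel = select_for_relabeling(production_predictions)
```

Here only `frame_002.jpg` is selected, because it is the only image with a detection below 50 percent confidence. Images where the model is already confident stay out of the labeling queue, which is where the savings in labeling effort come from.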

Fast (and Automated) Image Annotation is Key

Image annotation is one of the most time-consuming – yet critical – parts of creating a highly performant computer vision model. You should prioritize platforms that create a seamless annotation experience, including leveraging machine learning in the annotation process itself.

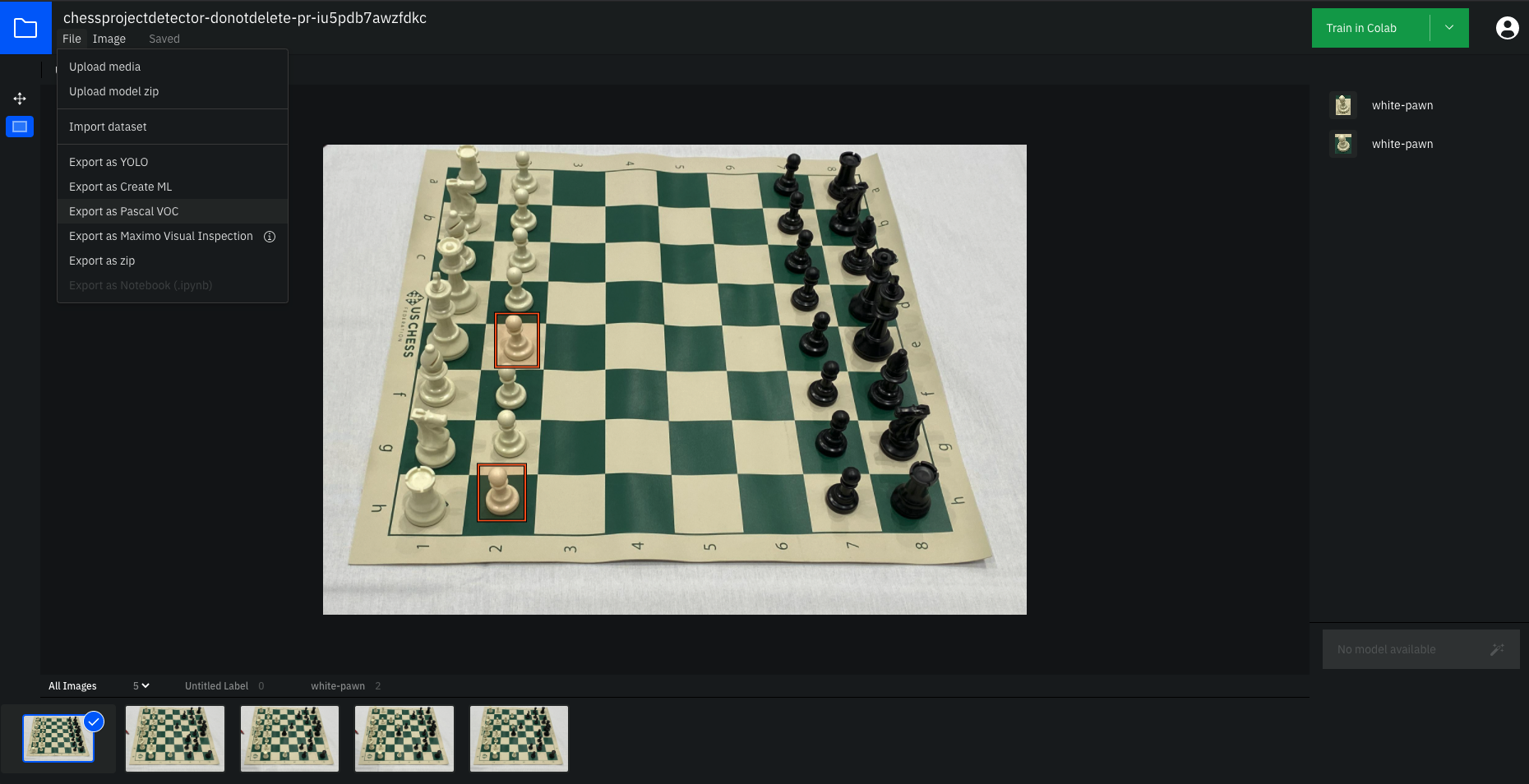

To annotate with IBM Cloud Annotations and IBM Visual Recognition, users make many clicks, scroll across the screen, and endure save wait times. For example, here's using IBM to annotate two chess pieces:

By way of comparison, here's adding the same two annotations in Roboflow, where the user has to make two clicks (total), smart-defaults suggest the annotation name, and there's no wait time:

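In this example, adding two annotations in IBM took ~20 seconds and in Roboflow took ~7 seconds. At those rates (~10 and ~3.5 seconds per annotation), a modest dataset of 1,000 annotations takes roughly 2.8 hours in IBM versus about 1 hour in a tool like Roboflow. The gap grows linearly with dataset size: at 100,000 annotations, it is roughly 180 hours. What would your team do with that extra time?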
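As a rough check, the sketch below scales the per-pair timings from this example (~20 seconds and ~7 seconds for two annotations) to datasets of different sizes. It assumes labeling time grows linearly with the number of annotations, which ignores fatigue, review, and image loading; `labeling_hours` is a hypothetical helper.

```python
def labeling_hours(num_annotations, seconds_per_pair):
    """Hours needed when two annotations take `seconds_per_pair` seconds."""
    seconds_per_annotation = seconds_per_pair / 2
    return num_annotations * seconds_per_annotation / 3600


for n in (1_000, 100_000):
    ibm = labeling_hours(n, 20)      # ~20 s per two annotations
    roboflow = labeling_hours(n, 7)  # ~7 s per two annotations
    print(
        f"{n:>7,} annotations: IBM {ibm:.1f} h, "
        f"Roboflow {roboflow:.1f} h, saved {ibm - roboflow:.1f} h"
    )
```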

Moreover, platforms that leverage model-assisted labeling use computer vision itself to automatically add annotations to images. This means automating annotation – sometimes up to 70-80 percent in our experience. Platforms like IBM and Roboflow incorporate this capability by default, though many open source tools do not.

Enable Model Deployment to the Cloud, Edge – Anywhere

Teams should be able to use their models wherever needed: via a cloud-hosted API, on an edge device like an NVIDIA Jetson, or even natively in the browser (like with TensorFlow.js).

IBM Visual Recognition enabled users to download their model and also hosted it via API. It did not provide easy edge deployment to mobile devices or in the browser, leaving teams to recreate this vision infrastructure in-house.

Platforms should enable developers to use their models where needed, and even to experiment seamlessly between a cloud API and an on-device strategy. (Platforms like Roboflow include a fully managed and scalable web API, a three-line deployment to edge devices like NVIDIA Jetsons, and even on-device in-browser models.)

Emphasize User (and Developer) Experience

At the core, the tools your development team uses can produce a step change in developer productivity. Thus, how much a platform emphasizes developer experience should be a key consideration.

Evaluating developer experience is inherently a subjective game, but there are a few measures to consider.

Try the tool yourself. The first test here is whether the tool even has open signup for users. Tools that don't allow users to sign up for even a free version without, e.g., talking to a sales team may not be prioritizing developers themselves as their target user. Unintuitive user experiences drain productivity. Remember, you're buying a tool to improve output quality and/or quantity.

Secondly, when evaluating the tool yourself, ensure you're able to get to a productive end state quickly. At Roboflow, we often see teams build production-ready models in days, not weeks, even without talking to our team to get started.

Ensure the company emphasizes documentation. This includes leading with documentation, making it easy to read, and keeping it up to date. (Here's the Roboflow documentation.)

Identify if the tool is building developer community. Related to documentation, cultivating a community of users demonstrates a company's commitment to being responsive and iterating on feedback. It also creates an open space for developers to help one another – thereby improving overall community productivity. (At Roboflow, we maintain a very active blog – 👋 hello – and YouTube channel to engage, educate, and respond to our community.)

At Roboflow, emphasizing developer experience has resulted in over 20,000 users trying our product within the first year of it being available. We can't wait to welcome you to the Roboflow community!

Cite this Post

Use the following entry to cite this post in your research:

Joseph Nelson. (Apr 4, 2021). How to Use Roboflow with IBM Visual Recognition (IBM Watson vs Roboflow). Roboflow Blog: https://blog.roboflow.com/ibm-watson-computer-vision-alternatives/