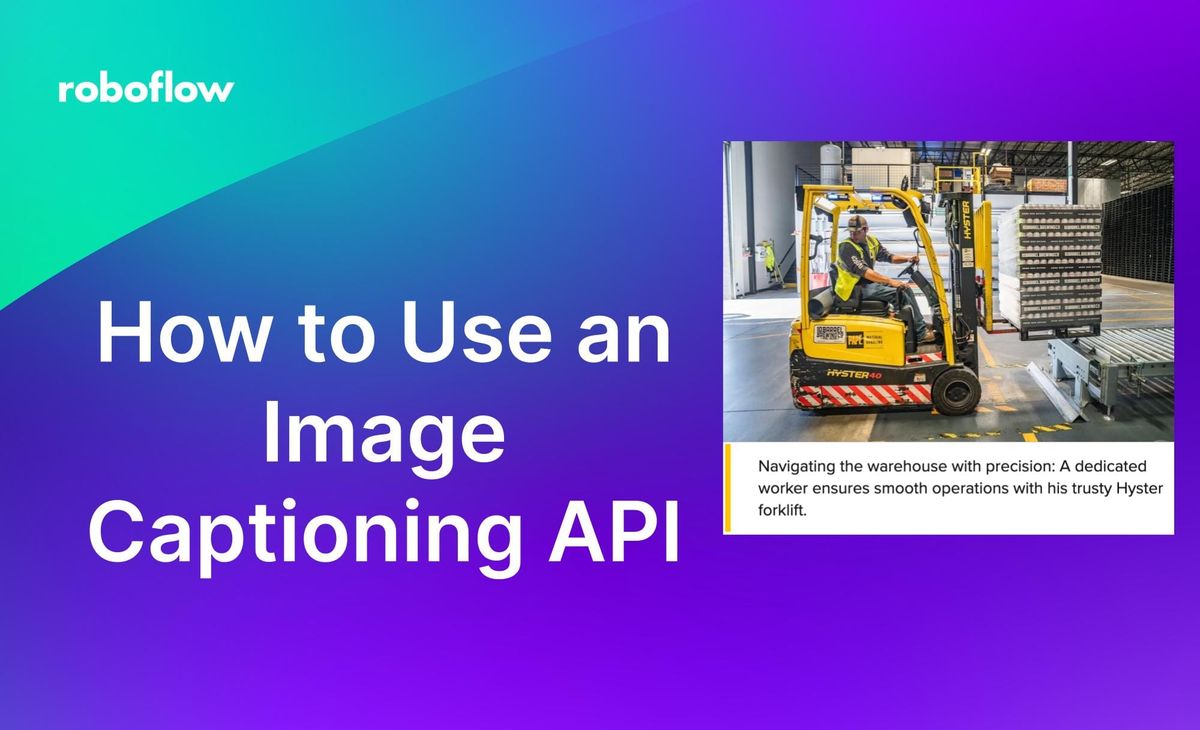

Automated image captioning is a problem you can solve with computer vision, with outputs of human-level quality. You can use CogVLM, a multimodal language model, to create captions for images. These captions can provide accessible descriptions for images and enrich image search features with more context, among other use cases.

In this guide, we are going to walk through how to deploy an image captioning API powered by CogVLM and Roboflow Inference on your own infrastructure.

Consider this image:

When prompting CogVLM with "Generate a caption for this image.", our system returns:

Navigating the warehouse with precision: A dedicated worker ensures smooth operations with his trusty Hyster forklift.

By the end of this guide, you will have an API that you can call to programmatically generate captions for images like in the example above.

Without further ado, let's get started!

Requirements

For this guide, you will need an NVIDIA GPU that is at least as powerful as a T4. This is because the model we are going to use for generating captions, CogVLM, is large. You can deploy T4s and other GPUs on cloud platforms such as Google Cloud Platform or AWS.
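Before installing anything, it can help to confirm which GPU your machine exposes. The sketch below is one way to do that, assuming the NVIDIA driver and its `nvidia-smi` tool are installed. The helper names `parse_gpu_lines` and `list_gpus` are hypothetical and not part of Inference.

```python
import shutil
import subprocess


def parse_gpu_lines(text):
    """Parse 'name, memory' CSV lines into (name, memory_mib) tuples."""
    gpus = []
    for line in text.strip().splitlines():
        try:
            name, memory = [part.strip() for part in line.rsplit(",", 1)]
            gpus.append((name, int(memory.split()[0])))
        except (ValueError, IndexError):
            # Skip lines that do not look like "<name>, <number> MiB".
            continue
    return gpus


def list_gpus():
    """Return the GPUs reported by nvidia-smi, or [] if it is unavailable."""
    if shutil.which("nvidia-smi") is None:
        return []
    completed = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader"],
        capture_output=True,
        text=True,
        check=False,
    )
    if completed.returncode != 0:
        return []
    return parse_gpu_lines(completed.stdout)


for gpu_name, memory_mib in list_gpus():
    print(f"{gpu_name}: {memory_mib} MiB")
```

If nothing is printed, either no NVIDIA GPU is visible or `nvidia-smi` is not on your PATH.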

We have a guide on how to set up CogVLM on an AWS virtual machine that you can follow if you want to deploy your captioning API on AWS. You only need to follow the AWS tutorial up to "Setup Inference Server"; we cover the rest in this guide.

You will also need a free Roboflow account.

Step #1: Install Roboflow Inference

To get started, we need to install Roboflow Inference. Inference is an open source server for running computer vision models. You can run fine-tuned models such as RF-DETR models as well as foundation models like CLIP and CogVLM with Inference.

To install Inference, run:

pip install inference inference-cli inference-sdk

Step #2: Start an Inference Server

To generate captions, we need to start an Inference server. To start an Inference server, you need Docker installed. Refer to the official Docker installation instructions to learn how to install Docker on your device.

To start an Inference server, run:

inference server start

The Inference server will start at http://localhost:9001.
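To confirm that something is listening on that port before sending requests, you can try a plain TCP connection. This is a minimal sketch that uses only the Python standard library. It does not call any Inference API, so it only tells you that the port is open, not that a model is ready. The function name `server_is_listening` is hypothetical.

```python
import socket


def server_is_listening(host="localhost", port=9001, timeout=2.0):
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts, and unresolvable hosts.
        return False


print("Inference server reachable:", server_is_listening())
```

If this prints False, check that Docker is running and that the server container started.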

Step #3: Generate an Image Caption

With an Inference server ready, we can start to generate image captions.

Create a new Python file and add the following code:

import os

from inference_sdk import InferenceHTTPClient

CLIENT = InferenceHTTPClient(
    api_url="http://localhost:9001",  # only local hosting supported
    api_key=os.environ["ROBOFLOW_API_KEY"],
)

result = CLIENT.prompt_cogvlm(
    visual_prompt="./image.jpg",
    text_prompt="Generate a caption for this image.",
)

print(result)

In this code, we use the Inference SDK to connect to our Inference server. We then send an image called "image.jpg" to our server. Replace "image.jpg" with the name of the image you want to send to the server.

We send our image with the prompt "Generate a caption for this image." If there is a specific attribute of the image you want to ask about – for example, whether a certain object is present – you can adjust the prompt to be a different question.
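To try several prompts against the same image, you could wrap the `prompt_cogvlm` call from the script above in a small helper. This is a sketch, not part of the SDK: the function name `ask_about_image` and the second example prompt are illustrations, and `client` is expected to be the `CLIENT` object created earlier.

```python
def ask_about_image(client, image_path, prompts):
    """Send each prompt with the same image and collect the raw results."""
    answers = {}
    for prompt in prompts:
        answers[prompt] = client.prompt_cogvlm(
            visual_prompt=image_path,
            text_prompt=prompt,
        )
    return answers


# Example prompts. The second one is an illustration, not taken from the guide.
EXAMPLE_PROMPTS = [
    "Generate a caption for this image.",
    "Is there a forklift in this image?",
]
```

You would call it as `ask_about_image(CLIENT, "./image.jpg", EXAMPLE_PROMPTS)`. Each prompt is a separate request, so the total time grows with the number of prompts.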

Behind the scenes, this code makes an HTTP request to our Inference server. The server processes the request, CogVLM generates a caption, and the server returns the caption in its response.
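The script above prints the whole result object. If you only want the caption text, a small helper can pull it out. The sketch below assumes the result is a dictionary with the caption under a "response" key. That key is an assumption, so print the raw result once and adjust the key if your version of Inference returns a different shape. The name `extract_caption` is hypothetical.

```python
def extract_caption(result, key="response"):
    """Return the caption text from a result, falling back to str(result)."""
    # Assumption: the caption is stored under `key` in a dict-like result.
    if isinstance(result, dict) and key in result:
        return str(result[key]).strip()
    return str(result).strip()
```

With the script above, you would call `extract_caption(result)` in place of printing `result` directly.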

When you first run this script, the CogVLM model will be downloaded. CogVLM is a large model – over 10 GB – so it will take a few minutes to retrieve the model. The model then needs to load into memory, which can take another few minutes. Once the model is in memory, requests will take around 10 seconds on a T4, or less on a more powerful GPU.
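Because the first request includes the model download and load time, it can be useful to measure how long each call takes. Here is a minimal timing sketch that uses only the standard library. The helper name `timed_call` is hypothetical and works with any callable.

```python
import time


def timed_call(func, *args, **kwargs):
    """Call func and return (result, elapsed_seconds)."""
    start = time.perf_counter()
    result = func(*args, **kwargs)
    elapsed = time.perf_counter() - start
    return result, elapsed
```

For example, `result, seconds = timed_call(CLIENT.prompt_cogvlm, visual_prompt="./image.jpg", text_prompt="Generate a caption for this image.")` returns the caption result along with the request duration. Expect the first call to be much slower than later ones.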

Once you have set your image and prompt, run the script.

Let's run the script on the following image:

Here is the caption generated by our model for the image:

Navigating the warehouse with precision: A dedicated worker ensures smooth operations with his trusty Hyster forklift.

Our server successfully generated a relevant caption for the provided image.
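To caption many images in one run, you could loop over a folder and save the results to a JSON file. The sketch below makes some assumptions: the helper names `caption_folder` and `save_captions`, the list of file extensions, and the output format are all illustrative choices, and `client` is the `CLIENT` object from the script above.

```python
import json
from pathlib import Path

# Assumed set of image extensions; extend it to match your data.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def caption_folder(client, folder, prompt="Generate a caption for this image."):
    """Return {file name: raw result} for every image file in folder."""
    captions = {}
    for path in sorted(Path(folder).iterdir()):
        if path.suffix.lower() not in IMAGE_SUFFIXES:
            continue
        captions[path.name] = client.prompt_cogvlm(
            visual_prompt=str(path),
            text_prompt=prompt,
        )
    return captions


def save_captions(captions, output_path):
    """Write the captions to a JSON file, stringifying unknown value types."""
    text = json.dumps(captions, indent=2, default=str)
    Path(output_path).write_text(text, encoding="utf-8")
```

You would call `save_captions(caption_folder(CLIENT, "./images"), "captions.json")`. Requests run one after another, so a large folder will take a while at roughly 10 seconds per image on a T4.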

Conclusion

You can use CogVLM with Roboflow Inference as an image captioning API that runs on your own hardware. In this guide, we walked through how to deploy CogVLM with Roboflow Inference to a GPU device. We discussed how to install Inference, how to set up an Inference server, and how to make a request to the server.

If you need assistance deploying CogVLM to solve a computer vision problem, contact the Roboflow sales team. Our sales team are experts in developing custom computer vision solutions for large enterprises.

Cite this Post

Use the following entry to cite this post in your research:

James Gallagher. (Feb 2, 2024). How to Use an Image Captioning API. Roboflow Blog: https://blog.roboflow.com/image-captioning-api/