Optical Character Recognition (OCR) is a computer vision task that aims to identify and recognize characters (e.g. numbers, letters, punctuation) in an image. OCR has many applications across industries. For example, businesses can use OCR to read serial numbers on manufacturing lines for use in inventory management systems.

In this guide, we are going to talk about what OCR is, common use cases for OCR, and how to use a free OCR API. Because even the best OCR systems do not always return exact results, we will end with a summary of how to approach error correction with OCR.

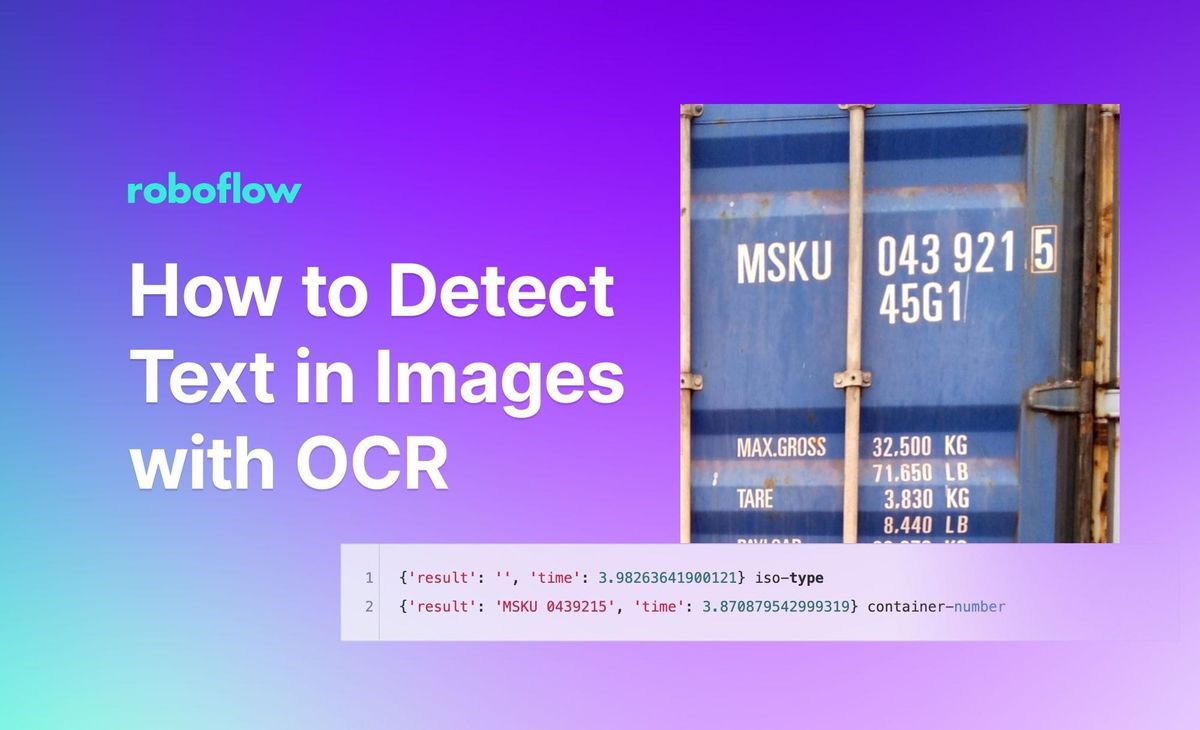

By the end of this guide, we will be able to retrieve the shipping container numbers in this image (MSKU 0439215):

Without further ado, let’s get started!

What is Optical Character Recognition (OCR)?

Optical Character Recognition (OCR) is a method of using computers to read text on an image. In recent years, deep learning methods have been used for OCR, offering superior performance in many cases.

OCR Use Cases

A good way to think about OCR use cases is “when would I need to be able to read text with a computer?” Let’s talk about a few common use cases for OCR.

- Inventory management systems: Inventory systems can use OCR to track the serial numbers of parts as they are prepared for packaging, giving a real-time view of the status of each part in the system (e.g. has the part been sent to a customer?).

- Reading documents: OCR is commonly used to read documents, either written by hand or printed. This approach is taken to allow for extraction of the words on the page, which can then be copy-pasted and edited in a new digital document. OCR is commonly used for digitizing archives so they can be made digitally accessible and searchable.

How to Recognize Characters in an Image

Roboflow maintains a free OCR endpoint you can use to recognize characters in an image or video. The API is powered by DocTR, a machine learning-powered OCR model. The API allows you to retrieve the location of text in visual data. You can then retrieve the text in each location where text was found. You do not need any experience with computer vision to use this API.

The OCR endpoint is available for use in a hosted offering as well as on your device. The latter – running the model on your device – is useful if you need to run OCR in real time, or if you do not have access to a stable internet connection where you need to use OCR.

Step #1: Create a Roboflow Account

First, create a free Roboflow account. You can use your account to make 1,000 OCR API calls.

Step #2: Install Dependencies

Next, open a terminal and install the Inference dependencies, which we will use to make our requests:

pip install inference inference-sdk

Step #3: Run OCR on an Image

You can pass a full image through DocTR for use in OCR.

Create a new Python file called image.py and add the following code:

import os

from inference_sdk import InferenceHTTPClient

# Read the API key from the ROBOFLOW_API_KEY environment variable
CLIENT = InferenceHTTPClient(
    api_url="https://infer.roboflow.com",
    api_key=os.environ["ROBOFLOW_API_KEY"]
)

# Run OCR on the whole image
result = CLIENT.ocr_image(inference_input="./container1.jpeg")

print(result)

You will need to set two values in the code above:

- ./container1.jpeg: The path of the image on which you want to run OCR, and;
- ROBOFLOW_API_KEY: An environment variable that contains your Roboflow API key. Learn how to retrieve your Roboflow API key.

In this code, we send an image to the Roboflow DocTR endpoint to run OCR.

Let's run the model on this image:

The OCR process returns:

{'result': 'MSKU 043921.5 45G1 MAX.GROSS 32,500 KG 71,650 LB 3.830 KG TARE 8.440 LB', 'time': 4.146573492999778}

DocTR successfully identified some of the text in the image. Note, however, that the container number was read as “MSKU 043921.5” rather than “MSKU 0439215”: OCR models are not fully reliable. For optimal performance, we recommend applying error correction to the output based on the structure of data you expect.
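As a rough illustration of structure-based cleanup, the sketch below pulls the container number out of the raw OCR string shown above. It assumes the number is four letters followed by seven digits and that stray punctuation may appear between the digits. The pattern and the `extract_container_number` helper are our own illustrative names, not part of the Roboflow API.

```python
import re
from typing import Optional

# Assumed layout: four letters followed by seven digits.
# A stray space, "." or "," after any digit is tolerated and removed later.
CONTAINER_PATTERN = re.compile(r"\b([A-Z]{4})\s*((?:\d[\s.,]?){7})")


def extract_container_number(ocr_text: str) -> Optional[str]:
    """Return the first container-number-like token in the OCR text, or None."""
    match = CONTAINER_PATTERN.search(ocr_text.upper())
    if match is None:
        return None
    prefix, digits = match.groups()
    digits = re.sub(r"\D", "", digits)  # drop anything that is not a digit
    return f"{prefix} {digits}"


result = {
    "result": "MSKU 043921.5 45G1 MAX.GROSS 32,500 KG 71,650 LB 3.830 KG TARE 8.440 LB",
    "time": 4.146573492999778,
}
print(extract_container_number(result["result"]))  # MSKU 0439215
```

A pattern this permissive can also match unrelated runs of digits, so it is best treated as a first pass rather than a final answer.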

Now that we have demonstrated how to run OCR on a whole image, let's talk about how to run OCR on specific regions of an image. This is ideal because you can tag the text in a region. For example, you can ascertain which of the text in a shipping container image is a container ID or which part is a chassis ID.

Step #4: Run OCR on Specific Regions of an Image

Suppose we are building an application for the logistics industry. We want to read the characters on a shipping container to take inventory of which containers are within a given facility, but not any extraneous characters (e.g. a logo). We can use a model to detect the regions of interest. Then, we can use OCR to read the attributes on the container.

To do this, we will first run a model that can identify both container numbers and ISO types, then run OCR on each region the model finds.

Create a new Python file called app.py and add the following code:

import requests
import base64
from roboflow import Roboflow
from PIL import Image
from io import BytesIO
import supervision as sv

API_KEY = ""

rf = Roboflow(api_key=API_KEY)
project = rf.workspace().project("container-shipping-number2")
model = project.version(3).model

# detect the regions of interest in the image
bounding_boxes = model.predict("container1.jpeg").json()
predictions = sv.Detections.from_inference(bounding_boxes)

image = Image.open("container1.jpeg")

classes = [i["class"] for i in bounding_boxes["predictions"]]

for i, _ in enumerate(predictions.xyxy):
    x0, y0, x1, y1 = predictions.xyxy[i]
    class_name = classes[i]

    # add rough padding by scaling each coordinate by 10%
    x0 = int(x0 * 0.9)
    y0 = int(y0 * 0.9)
    x1 = int(x1 * 1.1)
    y1 = int(y1 * 1.1)

    cropped_image = image.copy().crop((x0, y0, x1, y1))

    # change to grayscale
    cropped_image = cropped_image.convert("L")

    # convert to base64
    buffered = BytesIO()
    cropped_image.save(buffered, format="JPEG")

    data = {
        "image": {
            "type": "base64",
            "value": base64.b64encode(buffered.getvalue()).decode("utf-8")
        }
    }

    # decode and show the cropped image that will be sent to the OCR endpoint
    img = Image.open(BytesIO(base64.b64decode(data["image"]["value"])))
    img.show()

    # run OCR on the cropped region
    ocr_results = requests.post("https://infer.roboflow.com/doctr/ocr?api_key=" + API_KEY, json=data).json()

    print(ocr_results, class_name)

In this code, we:

- Use a fine-tuned model to locate the container number and ISO type in an image, then;

- Crop each region and make a web request to the OCR endpoint to retrieve the text in that region.

You will need to replace two values in the code above:

- container1.jpeg: The name of the image on which you want to run OCR, and;
- API_KEY: Your Roboflow API key. Learn how to retrieve your Roboflow API key.
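The padding step in the script scales each coordinate directly, so the amount of padding depends on where the box sits in the image. If you would rather pad relative to the size of the box itself, a minimal sketch could look like the following. `pad_box` is a hypothetical helper of our own, and the 10% default is simply carried over from the script.

```python
def pad_box(box, image_size, padding=0.10):
    """Grow (x0, y0, x1, y1) by `padding` of its width and height, clamped to the image."""
    x0, y0, x1, y1 = box
    width, height = image_size
    pad_x = (x1 - x0) * padding
    pad_y = (y1 - y0) * padding
    return (
        max(0, int(round(x0 - pad_x))),
        max(0, int(round(y0 - pad_y))),
        min(width, int(round(x1 + pad_x))),
        min(height, int(round(y1 + pad_y))),
    )


# A 200x60 box inside a 640x480 image gains 20 px horizontally and 6 px vertically.
print(pad_box((100, 200, 300, 260), image_size=(640, 480)))  # (80, 194, 320, 266)
```

The returned tuple can be passed to `image.crop(...)` in place of the manually scaled coordinates.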

Finally, run the Python script:

python app.py

Let’s run our script on the following image:

Our OCR script returns the following response:

{'result': '', 'time': 3.98263641900121} iso-type

{'result': 'MSKU 0439215', 'time': 3.870879542999319} container-number

Our model was able to successfully identify text in the image, but only for one class (the “container-number” class); the “iso-type” region returned an empty result. To mitigate this issue, you can look at applying additional preprocessing steps appropriate for your project.

If the OCR model makes a mistake, we recommend that you: (i) ensure the text in the image is as readable as possible (e.g. the lighting conditions make the text visible, the typeface is easy to read), and; (ii) use an error correction system to address mistakes in the OCR output.

We will talk about error correction later in this post.
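Before correcting anything, it helps to know which regions came back empty. The sketch below is one possible way to separate usable results from regions that need another look, using the two responses shown above. `summarize_ocr_results` is an illustrative helper of our own, not part of any library.

```python
def summarize_ocr_results(results):
    """Split (class_name, ocr_response) pairs into read text and classes needing review."""
    read, needs_review = {}, []
    for class_name, response in results:
        text = response.get("result", "").strip()
        if text:
            read[class_name] = text
        else:
            needs_review.append(class_name)
    return read, needs_review


results = [
    ("iso-type", {"result": "", "time": 3.98263641900121}),
    ("container-number", {"result": "MSKU 0439215", "time": 3.870879542999319}),
]
read, needs_review = summarize_ocr_results(results)
print(read)          # {'container-number': 'MSKU 0439215'}
print(needs_review)  # ['iso-type']
```

Classes in `needs_review` are candidates for a retry with different preprocessing, or for manual review.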

Deploy an OCR Model on Your Hardware

You can also run the OCR API on a device through Roboflow Inference. Inference enables you to deploy computer vision models. You can deploy pre-trained models that use a supported model architecture (e.g. RF-DETR) and use foundation models such as DocTR.

Inference runs on a range of devices and architectures, from x86 CPU to ARM CPU to NVIDIA GPU.

For this guide, we are going to use the inference pip package.

To install the package, run the following command:

pip install inference

Then, start an inference server:

inference server start

Create a new Python file and add the following code:

import os

from inference_sdk import InferenceHTTPClient

# Point the client at the local inference server
CLIENT = InferenceHTTPClient(
    api_url="http://localhost:9001",
    api_key=os.environ["ROBOFLOW_API_KEY"]
)

result = CLIENT.ocr_image(inference_input="./image.jpg")

print(result)

When this code runs for the first time, DocTR will be downloaded to your system. The amount of time this process will take depends on the speed of your internet connection.

Once you have the model weights ready on your system, OCR will run on the provided image. OCR runs entirely on your device without the requirement of an internet connection once you have downloaded the model weights for the first time.
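The hosted and local examples in this guide differ only in the `api_url` passed to the client, so one option is to choose the URL at runtime. The sketch below assumes a hypothetical `OCR_USE_LOCAL` environment variable of our own invention. The two URLs are the ones used earlier in this guide.

```python
import os

HOSTED_URL = "https://infer.roboflow.com"
LOCAL_URL = "http://localhost:9001"


def resolve_api_url(env=os.environ):
    """Return the local server URL when OCR_USE_LOCAL (hypothetical) is set to a truthy value."""
    use_local = env.get("OCR_USE_LOCAL", "").lower() in {"1", "true", "yes"}
    return LOCAL_URL if use_local else HOSTED_URL


print(resolve_api_url({"OCR_USE_LOCAL": "1"}))  # http://localhost:9001
print(resolve_api_url({}))                      # https://infer.roboflow.com
```

The returned value can be passed as `api_url` when constructing the client, leaving the rest of the script unchanged.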

OCR Error Correction

OCR works well when text is clearly visible and in a typeface the OCR model can understand. You can test how well an OCR system works for your use case by running the system on a few images and evaluating the results.

With that said, OCR models do make mistakes, even when text is clearly readable. That is why systems that use OCR often employ error correction, a technique whereby you read the output returned by an OCR model and clean it up as necessary.

A common and effective approach to error correction is using heuristics to evaluate an OCR result. Consider a scenario where you know that the text you are reading consists only of numbers. If OCR returns an “l” instead of a “1”, you can make the requisite correction.
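A minimal sketch of this heuristic for a numeric field might look like the following. The look-alike map is illustrative and far from complete, and `correct_numeric_field` is a hypothetical helper name.

```python
# Characters that OCR may confuse with digits (an illustrative, incomplete map).
DIGIT_LOOKALIKES = str.maketrans({
    "l": "1", "I": "1", "|": "1",
    "O": "0", "o": "0",
    "S": "5",
    "B": "8",
})


def correct_numeric_field(text):
    """Apply look-alike fixes, then return the digits, or None if a non-digit remains."""
    candidate = text.strip().translate(DIGIT_LOOKALIKES)
    return candidate if candidate.isdigit() else None


print(correct_numeric_field("O4392l5"))  # 0439215
print(correct_numeric_field("04-39"))    # None
```

Returning `None` rather than a best guess keeps uncorrectable values visible so they can be reviewed instead of silently accepted.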

If you know a document should contain one of three outputs in a particular location, you can take the expected output that is closest to the OCR result. Such logic is commonly implemented in code since you can define exactly which rules must be met to correct an error. Read about how an insurance company applies OCR error correction on documents.
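One way to sketch the "closest expected output" idea is with `difflib.get_close_matches` from the Python standard library. The three expected values and the `snap_to_expected` helper below are made up for illustration, and the 0.6 cutoff is simply the library default.

```python
import difflib


def snap_to_expected(ocr_text, expected, cutoff=0.6):
    """Return the expected value most similar to the OCR text, or None if none is close."""
    matches = difflib.get_close_matches(ocr_text, expected, n=1, cutoff=cutoff)
    return matches[0] if matches else None


# Hypothetical set of values a field is allowed to contain.
EXPECTED = ["APPROVED", "REJECTED", "PENDING"]

print(snap_to_expected("APPR0VED", EXPECTED))  # APPROVED
print(snap_to_expected("XYZ", EXPECTED))       # None
```

Raising the cutoff makes the match stricter, which matters when the expected values are similar to one another.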

Alternatively, you can use a spelling correction algorithm. This is useful if you have written prose from which you want to extract text. Tools like SymSpell, of which there are implementations in many programming languages, enable you to clean up OCR results.
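To show the general idea without depending on a particular library, here is a simplified dictionary-based corrector. It is not SymSpell: it only tries candidates one edit away from each word and uses a tiny, made-up vocabulary, so treat it as a sketch of the technique rather than a production approach.

```python
import string


def edits1(word):
    """Return every string that is one delete, transpose, replace, or insert away."""
    letters = string.ascii_lowercase
    splits = [(word[:i], word[i:]) for i in range(len(word) + 1)]
    deletes = [left + right[1:] for left, right in splits if right]
    transposes = [left + right[1] + right[0] + right[2:] for left, right in splits if len(right) > 1]
    replaces = [left + c + right[1:] for left, right in splits if right for c in letters]
    inserts = [left + c + right for left, right in splits for c in letters]
    return set(deletes + transposes + replaces + inserts)


def correct_word(word, vocabulary):
    """Return a vocabulary word within one edit of `word`, or `word` unchanged."""
    lowered = word.lower()
    if lowered in vocabulary:
        return lowered
    candidates = sorted(edits1(lowered) & vocabulary)
    return candidates[0] if candidates else word


# Hypothetical vocabulary; a real one would come from a word list for your domain.
VOCABULARY = {"the", "shipping", "container", "number"}

text = "the sh1pping c0ntainer nunber"
print(" ".join(correct_word(w, VOCABULARY) for w in text.split()))
# the shipping container number
```

Words with no close match are returned unchanged, so identifiers and codes pass through untouched.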

You could use two different OCR models and develop logic to come to a probable answer. For example, one model may work well with text that is straight, while another may work well with text at an angle. You could run both models and choose the output that is correct, or apply fixes to both outputs to see if either of the model outputs can be parsed. You can also manually review OCR results.
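For shipping containers specifically, one way to decide which output "can be parsed" is to validate the check digit. The sketch below assumes container numbers follow the ISO 6346 scheme (four letters, six serial digits, and one check digit), which this guide has not otherwise relied on. The candidate strings stand in for the outputs of two hypothetical OCR models.

```python
import re
import string

# ISO 6346 letter values start at 10 and skip multiples of 11 (11, 22, 33).
LETTER_VALUES = {}
_value = 10
for _letter in string.ascii_uppercase:
    if _value % 11 == 0:
        _value += 1
    LETTER_VALUES[_letter] = _value
    _value += 1


def is_valid_container_number(text):
    """Check the ISO 6346 check digit after stripping spaces and punctuation."""
    code = re.sub(r"[^A-Z0-9]", "", text.upper())
    if not re.fullmatch(r"[A-Z]{4}\d{7}", code):
        return False
    # Weight each of the first ten characters by 2 ** position.
    total = sum(
        (LETTER_VALUES[ch] if ch.isalpha() else int(ch)) * 2 ** position
        for position, ch in enumerate(code[:10])
    )
    return total % 11 % 10 == int(code[10])


def pick_valid(candidates):
    """Return the first candidate whose check digit validates, or None."""
    for candidate in candidates:
        if is_valid_container_number(candidate):
            return candidate
    return None


# Outputs from two hypothetical OCR models for the same region.
print(pick_valid(["MSKU 0439275", "MSKU 0439215"]))  # MSKU 0439215
```

If no candidate validates, `pick_valid` returns `None`, which is a reasonable signal to send the image for manual review.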

Conclusion

OCR is a common task in computer vision. With OCR, you can identify the characters in an image. You can also identify the location of each unit of text (e.g. a word or a series of numbers). This location information can help you understand the structure of a document.

In this guide, we used the Roboflow hosted OCR API to retrieve the text in an image. This API uses the DocTR OCR model. We then used the inference pip package to run inference on an image locally. Finally, we discussed error correction in OCR.

Cite this Post

Use the following entry to cite this post in your research:

James Gallagher. (Nov 1, 2023). How to Detect Text in Images with OCR. Roboflow Blog: https://blog.roboflow.com/ocr-api/