Computer vision is performed on a wide array of imaging data: photographs, screenshots, videos. Most commonly, this data is captured much as humans see it, in the visible spectrum, and stored as red, green, and blue (RGB) color channels.

However, there's growing interest in processing images beyond the visible spectrum. Thermal imaging is chief among these alternative input data formats. Thermal imaging is a non-contact method for observing the surface temperature of objects based on the infrared radiation they emit. Thermal images, or thermograms, convert this emitted radiation into a visible image humans can observe.
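To make the idea of a thermogram concrete, here is a minimal sketch of one way a grid of temperature readings could be turned into a viewable grayscale image: min-max scaling, so the coldest reading becomes black and the warmest becomes white. This is an illustration under our own assumptions, not how any particular camera works. Real thermal cameras apply their own calibration and color palettes, and the function name `temperatures_to_grayscale` and the synthetic scene are hypothetical.

```python
def temperatures_to_grayscale(temps_c):
    """Min-max scale a 2D grid of temperatures (in °C) to 0-255 gray levels.

    Illustrative only: the coldest reading maps to 0 (black) and the
    warmest to 255 (white). Real cameras use their own calibration.
    """
    flat = [t for row in temps_c for t in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        # A perfectly uniform scene has no contrast to display.
        return [[0 for _ in row] for row in temps_c]
    span = hi - lo
    return [[round((t - lo) / span * 255) for t in row] for row in temps_c]


# Synthetic scene (made-up values): a 20 °C room with a 36 °C warm patch.
scene = [[20.0] * 6 for _ in range(4)]
for r in (1, 2):
    for c in (2, 3):
        scene[r][c] = 36.0

image = temperatures_to_grayscale(scene)
for row in image:
    print(row)
```

Because the scaling is relative to the scene, the warm patch stands out regardless of how much visible light is present, which is the property that makes thermal data useful in the dark.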

Thermal imaging has novel applications. Not only does it add a new dimension to existing RGB image processing problems, but it also enables capturing insights not easily available in typical visible light conditions. For example, in a completely dark room, thermal imaging would still reveal nearby objects, provided they differ in temperature from their surroundings.

Thermal imaging has proven to be essential in fields like search and rescue (e.g. identifying individuals under wreckage when they are not immediately visible but are emitting heat), maritime navigation (e.g. at night, ships cannot easily see one another but emit heat), nighttime road safety (e.g. identifying obstacles), gas leak detection (e.g. leaking gas forms a cloud of a different temperature), and much more.

Because of this, we're confident there are many yet-to-be-built computer vision use cases leveraging thermal data.

Releasing a Free Thermal Imaging Dataset for Computer Vision

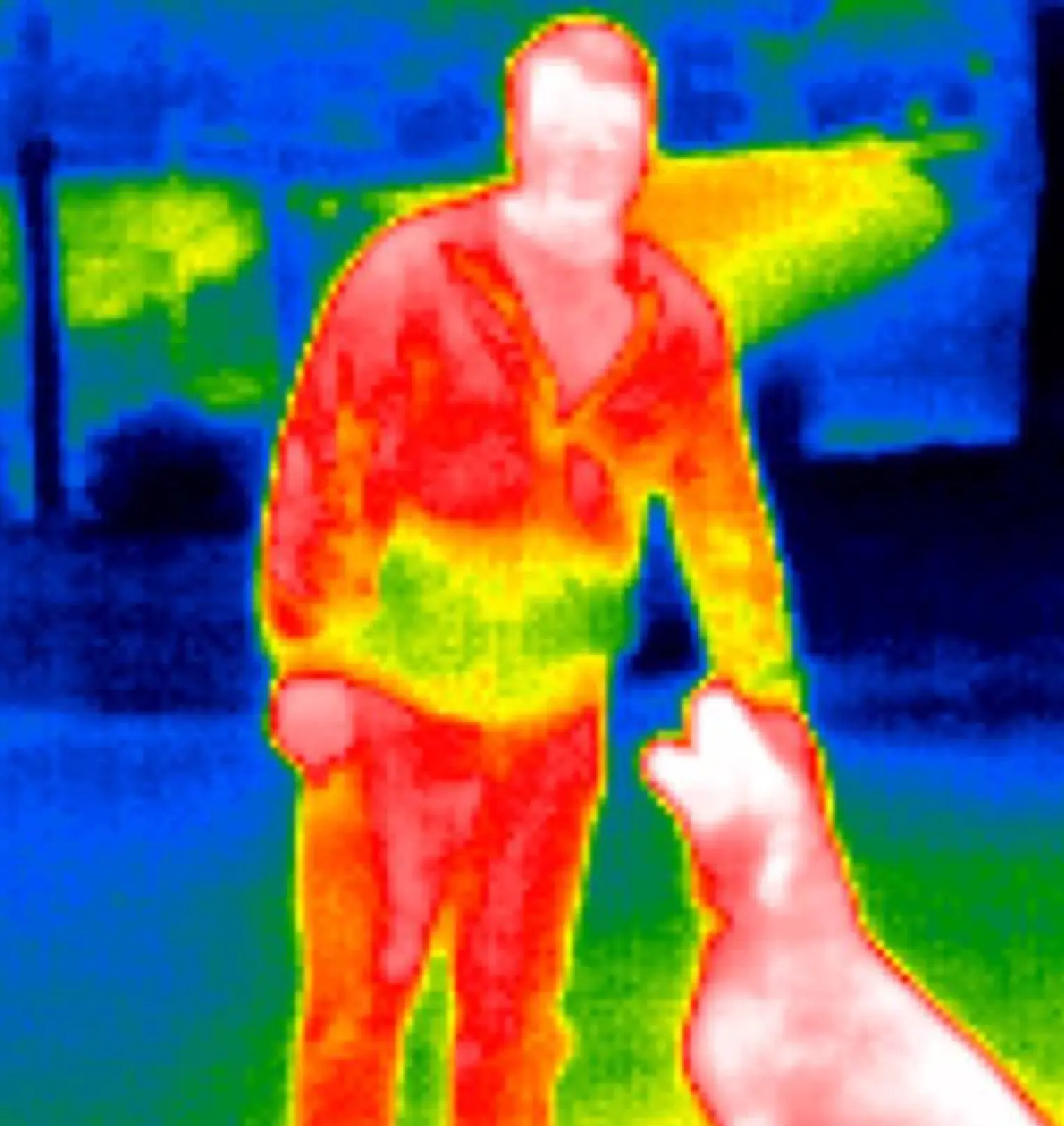

To demonstrate the power of thermal imaging and computer vision, we've captured 203 images of people and dogs. We've annotated this dataset for object detection. The thermal imaging dataset for computer vision is available on https://public.roboflow.com.

Images were captured using the Seek Compact XR Extra Range Thermal Imaging Camera for iPhone (but you could also use a FLIR ONE for iPhone or Android). Notably, 19 of the images deliberately do not contain a person or a dog. (A more detailed breakdown is available via the Dataset Health Check.)

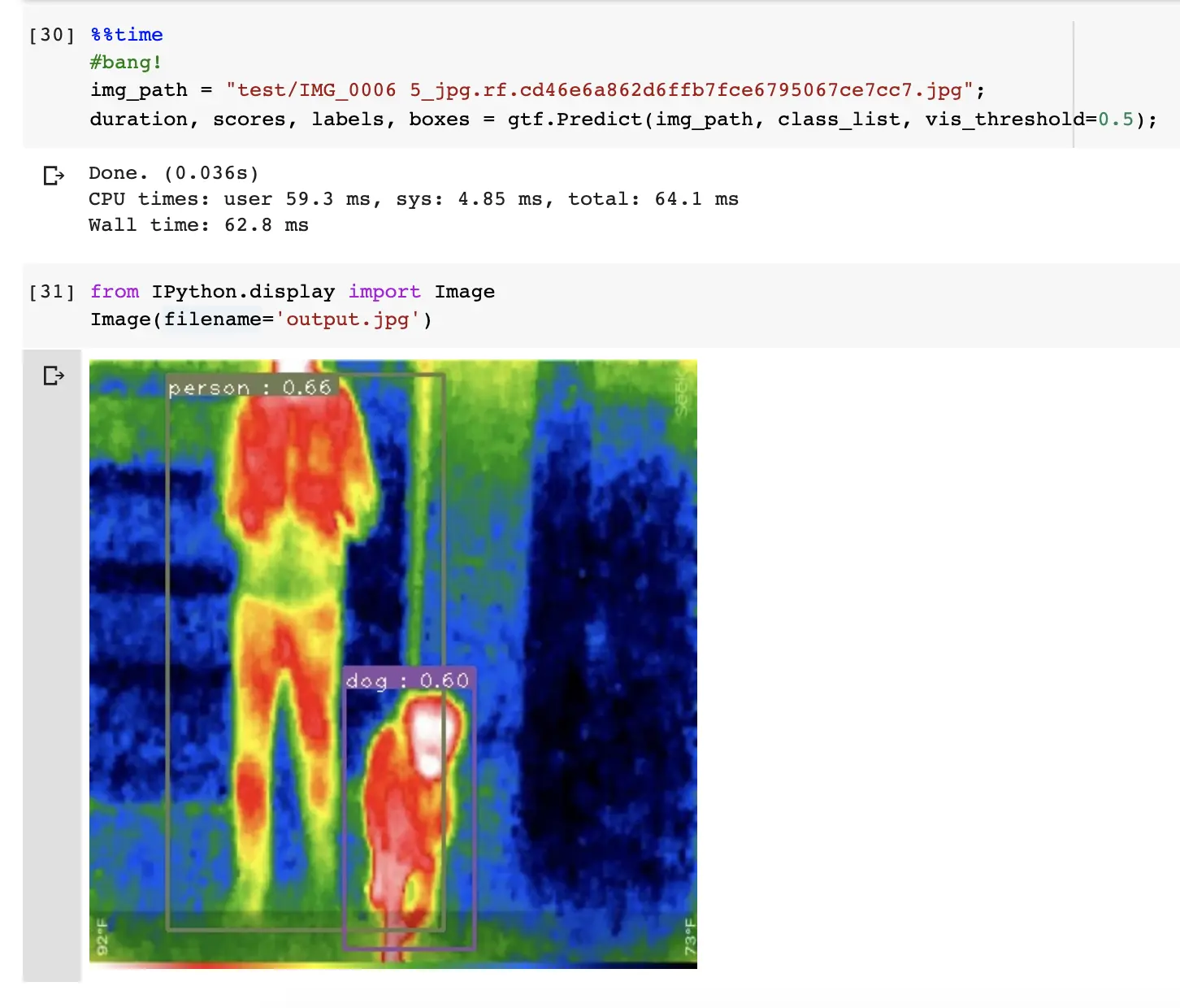

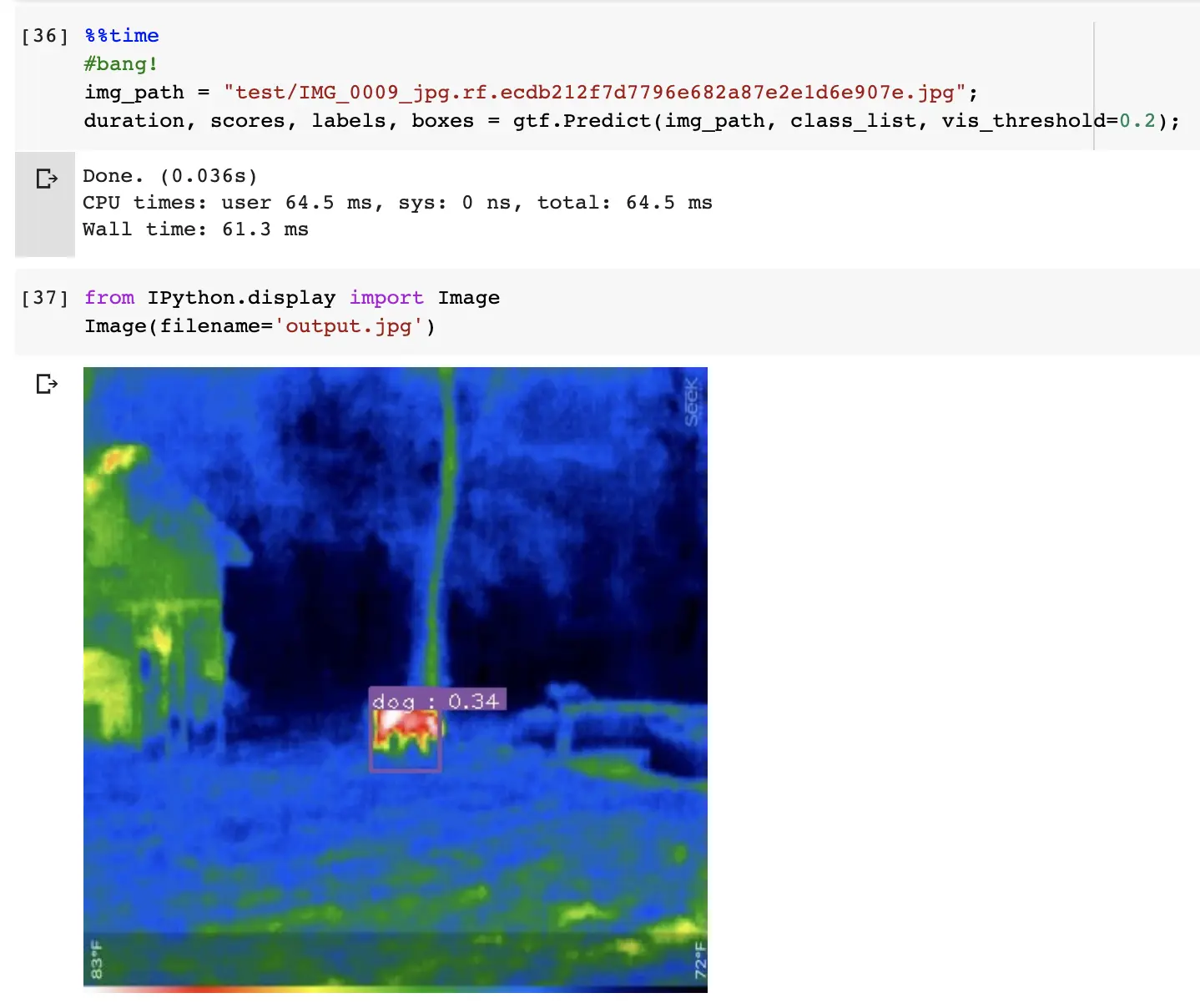

We built an EfficientDet model using just these 203 images (140, roughly 70 percent, in the training set) and saw a total loss of 0.11735 on the validation set (41 images). On the holdout test set (22 images), the model generally performed well.
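The split sizes above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not the tooling we used to create the split: the helper `split_by_counts`, the seed, and the file names are hypothetical, while the counts (140, 41, 22) come from the paragraph above.

```python
import random

# Split sizes taken from the post; they add up to the 203 images.
SPLIT_COUNTS = {"train": 140, "valid": 41, "test": 22}


def split_by_counts(items, counts, seed=0):
    """Shuffle items reproducibly, then slice them into named splits."""
    items = list(items)
    if len(items) != sum(counts.values()):
        raise ValueError("split counts must add up to the number of items")
    random.Random(seed).shuffle(items)
    splits, start = {}, 0
    for name, count in counts.items():
        splits[name] = items[start:start + count]
        start += count
    return splits


# Hypothetical file names standing in for the real images.
image_names = [f"thermal_{i:03d}.jpg" for i in range(203)]
splits = split_by_counts(image_names, SPLIT_COUNTS)

for name, subset in splits.items():
    share = len(subset) / len(image_names)
    print(f"{name}: {len(subset)} images ({share:.1%})")
```

Shuffling before slicing keeps each split a random sample of the dataset, and the fixed seed makes the assignment repeatable between runs.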

The model struggled when the dog was farther away from the camera, but it did still localize the object's presence.

Though identifying dogs and people is a relatively simple toy example, it begins to show what is possible with Roboflow and thermal data. Even this dataset could assist in identifying Labrador Retrievers at night or in a search-and-rescue operation.

As always, we cannot wait to see what you build.

Cite this Post

Use the following entry to cite this post in your research:

Joseph Nelson. (May 24, 2020). Thermal Infrared Dataset for Object Detection. Roboflow Blog: https://blog.roboflow.com/thermal-infrared-dataset-computer-vision/