Edge AI has never been hotter. As computer vision technology advances, it is becoming more and more important to be able to deploy computer vision models that can inference in realtime on affordable edge devices.

Want the easiest Jetson Deploy?

Use Roboflow Deploy to streamline the process of deploying your object detection model to an NVIDIA Jetson.

In this post, our edge AI model for object detection is YOLOv5s and our selected hardware is the Jetson NX. Let's get cracking.

If you prefer a video walkthrough, subscribe to our YouTube channel.

Training Your Custom YOLOv5 model

In this post, we will abstract away most of the model training steps. Thankfully, the Roboflow blog has great documentation on how to train YOLOv5 to recognize custom objects. If you don't yet have your trained model then I recommend checking that blog post out first.

Once you have your model trained in .pt format you are ready to advance to the Jetson Xavier NX.

Store you .pt on the cloud or on a USB device so you can access it from the NVIDIA device.

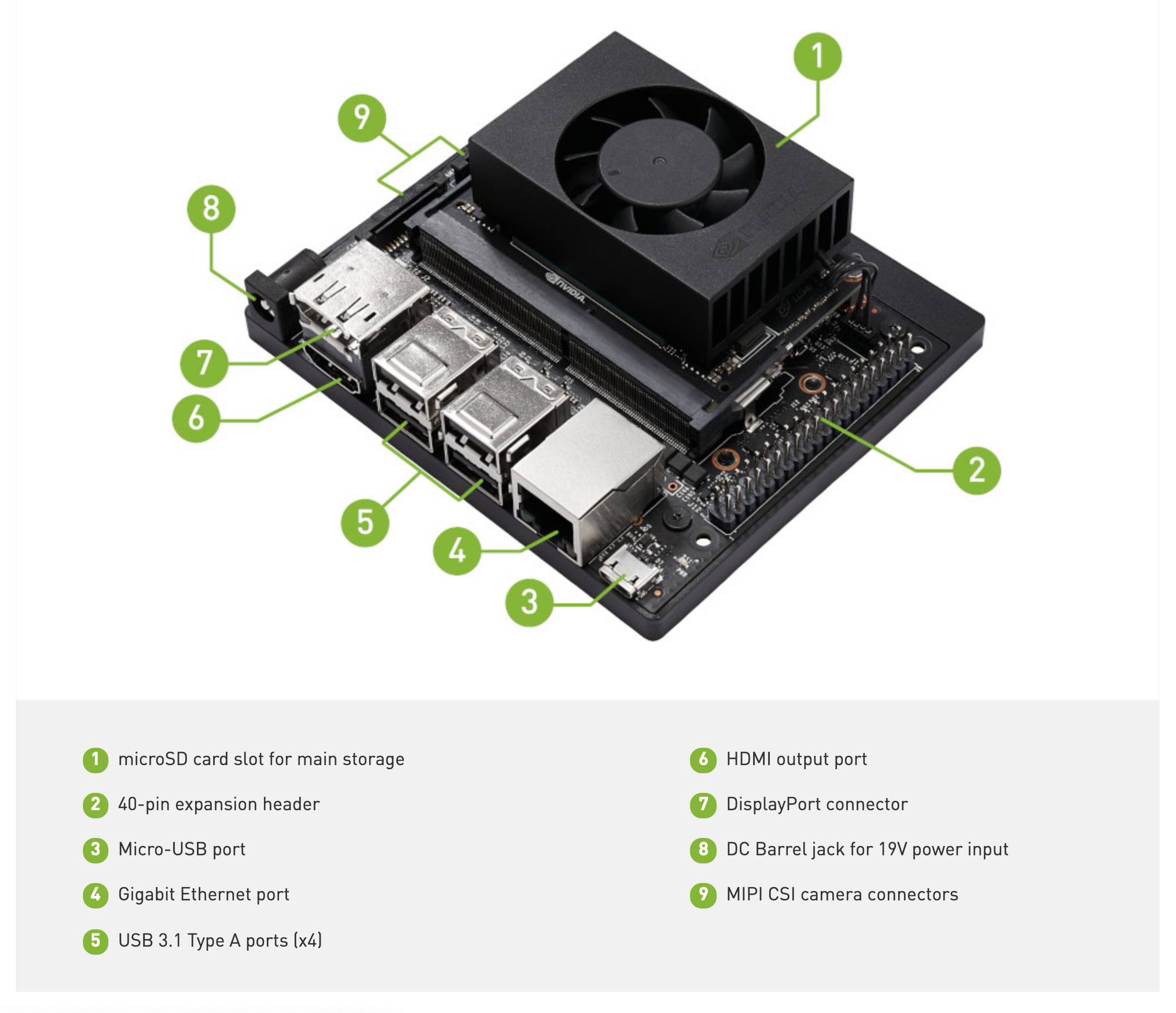

Booting up the Jetson NX

NVIDIA makes it easy to start up the Jetson NX with the NVIDIA Jetpack installation guide.

You will need your own microSD card to flash the NVIDIA Jetpack and ubuntu installation onto your device.

Once set up is complete, you can move forward to deploying YOLOv5.

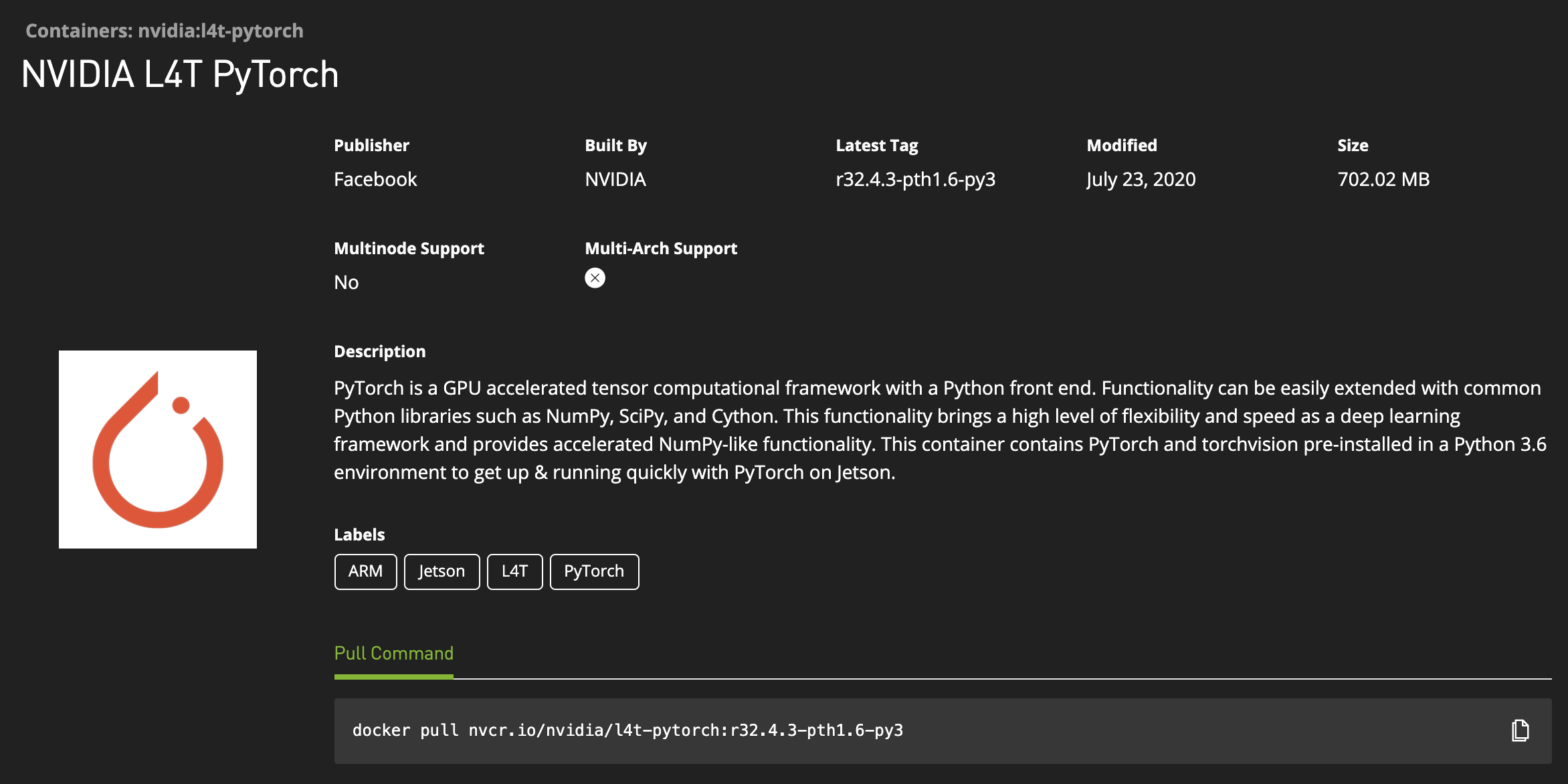

The NVIDIA Jetpack 4.4 PyTorch Container

We will be deploying YOLOv5 in its native PyTorch runtime environment. That means we will need to install PyTorch on our NVIDIA Jetson Xavier NX. Getting this installation right could cost you your week.

Thankfully, the NVIDIA Jetpack 4.4 PyTorch Docker containers are available for our use. Docker crystallizes the install process so you don't have to do it on your machine.

After executing into the PyTorch container go ahead and clone the YOLOv5 repository. There are a few installs to make in the requirements.txt. You may also need to install OpenCV 4.4.0 separately (as I did using this link).

Running Inference on the NVIDIA Jetson NX

Once you have the YOLOv5 environment configured on your NVIDIA Jetson NX, then you are ready to start making inferences. Download an image, a video, or expose your webcam port to the model and kick off an inference session with:

python detect.py --source ./inference/images/ --weights yolov5s.pt --conf 0.4Subbing in your model for the default "yolov5s.pt".

Here is some printouts of my custom YOLOv5s model inferencing at 30FPS!

video 1/1 (2371/2398) /yolov5/video.mp4: 288x224 Done. (0.033s)

video 1/1 (2372/2398) /yolov5/video.mp4: 288x224 Done. (0.037s)

video 1/1 (2373/2398) /yolov5/video.mp4: 288x224 Done. (0.033s)

video 1/1 (2374/2398) /yolov5/video.mp4: 288x224 Done. (0.033s)

video 1/1 (2375/2398) /yolov5/video.mp4: 288x224 Done. (0.033s)YOLOv5s at 30 FPS on a Jetson Xavier NX

Making YOLOv5 Run Even Faster

Of course, we didn't give away all of the keys to the kingdom in this post. You may want to explore making your YOLOv5s model even smaller to speed up inference.

It is also worthwhile to look in to TensorRT solutions to speed things up even further.

Skip All of This

If you're looking for the easiest way to deploy to the NVIDIA Jetson and don't want to jump through all these hoops, you may want to consider Roboflow Deploy which has seamless deployment to edge devices like the NVIDIA Jetson for your custom object detection models. Read the Roboflow Deploy for NVIDIA Jetson docs.

Conclusion

Congratulations! You have learned how to deploy YOLOv5 all the way to an edge device, the Jetson Xavier NX to make inference in realtime at 30 FPS.

Given the flexibility of the YOLO model to learn custom object detection problems, this is quite the skill to have.

We hope you enjoyed and as always, happy detecting.

Build and deploy with Roboflow for free

Use Roboflow to manage datasets, train models in one-click, and deploy to web, mobile, or the edge. With a few images, you can train a working computer vision model in an afternoon. Start free here.

Cite this Post

Use the following entry to cite this post in your research:

Jacob Solawetz. (Sep 10, 2020). Deploy YOLOv5 to Jetson Xavier NX at 30FPS. Roboflow Blog: https://blog.roboflow.com/deploy-yolov5-to-jetson-nx/