Now you can deploy your trained Roboflow model to an iOS app.

The Roboflow Mobile iOS SDK is a great option if you are developing an iOS application that needs a model running on the edge (iPad or iPhone), either for faster inference or to unlock new features, capabilities, and use cases such as augmented reality. Native mobile applications with custom computer vision models embedded in them allow developers to give their apps the sense of sight.

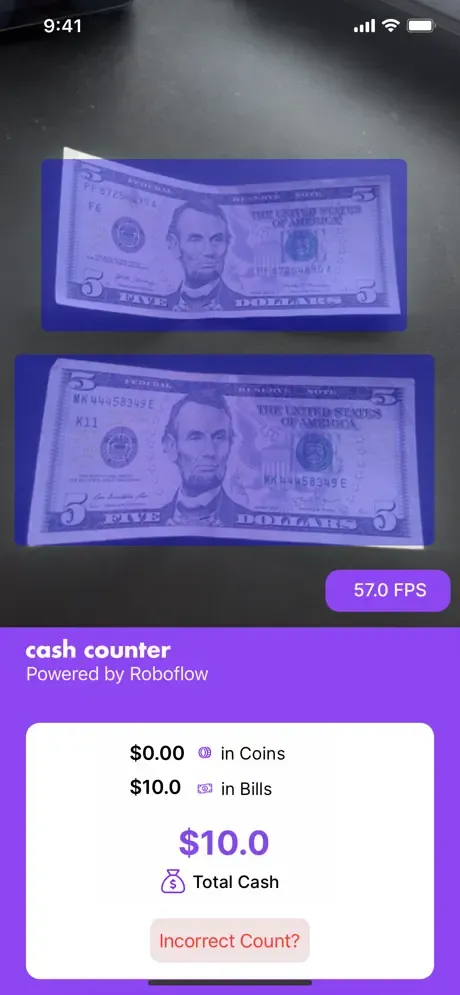

Download and explore CashCounter, our example iOS app that counts US coins and bills.

It is a simple example of how to embed a computer vision model in a mobile app, along with some adjacent functionality.

Supported Hardware and Software

All iOS devices support on-device inference, but those older than the iPhone 8 (A11 Bionic Processor) will fall back to the less energy-efficient GPU engine.

Roboflow requires a minimum iOS version of 15.4.

Installing the SDK

Running the Example App

Make sure Expo and CocoaPods are installed.

- Clone the RoboflowExpoExample project from GitHub.

- Open the project and run

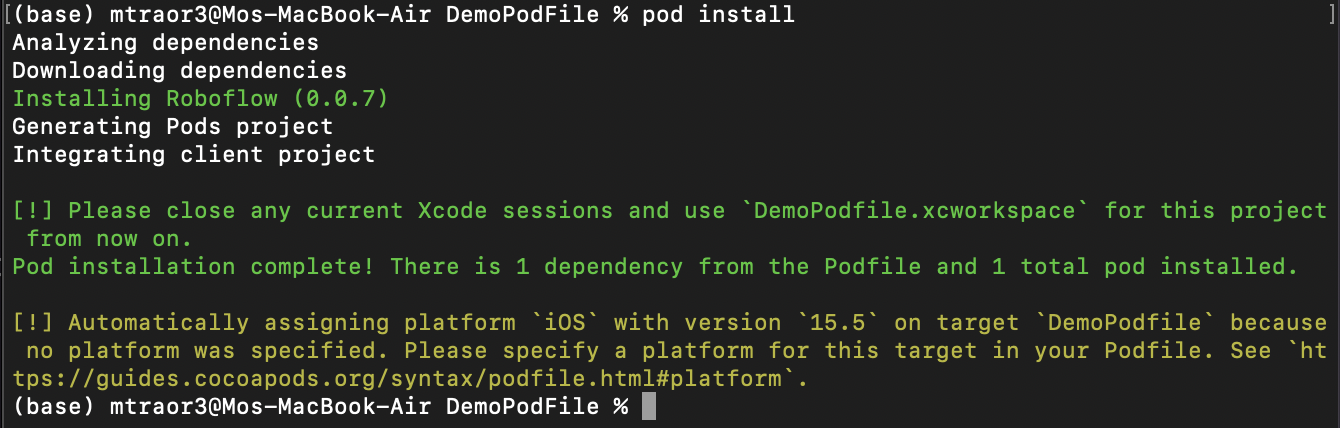

expo install - In your terminal, navigate to the iOS directory in the project and run

pod install

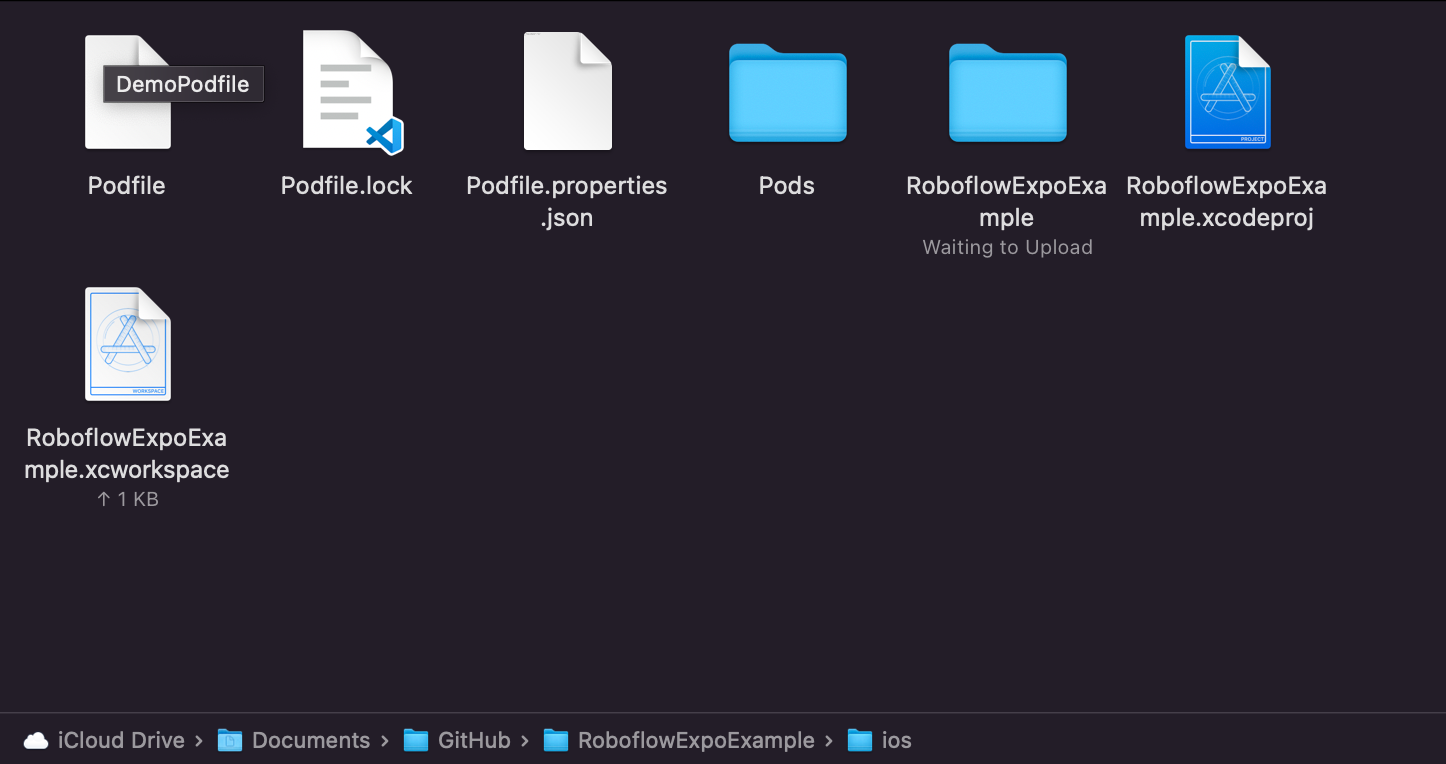

- Open

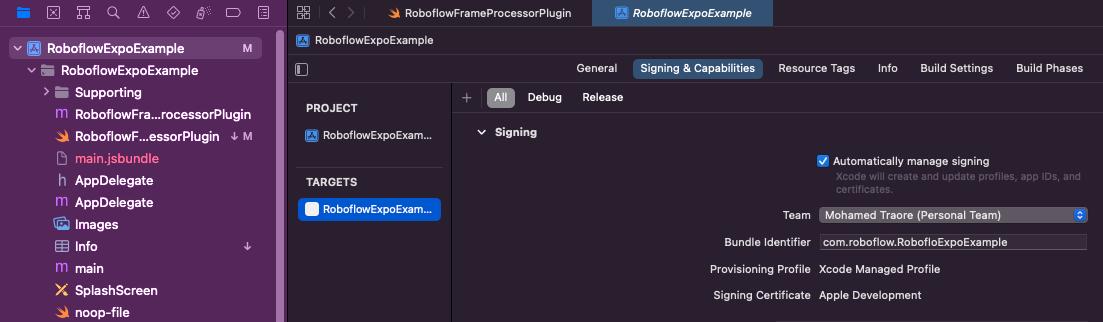

RoboflowExpoExample.xcworkspacein XCode. - Click on the project name on the left, then Signing and Capabilities, then select your team.

- Open

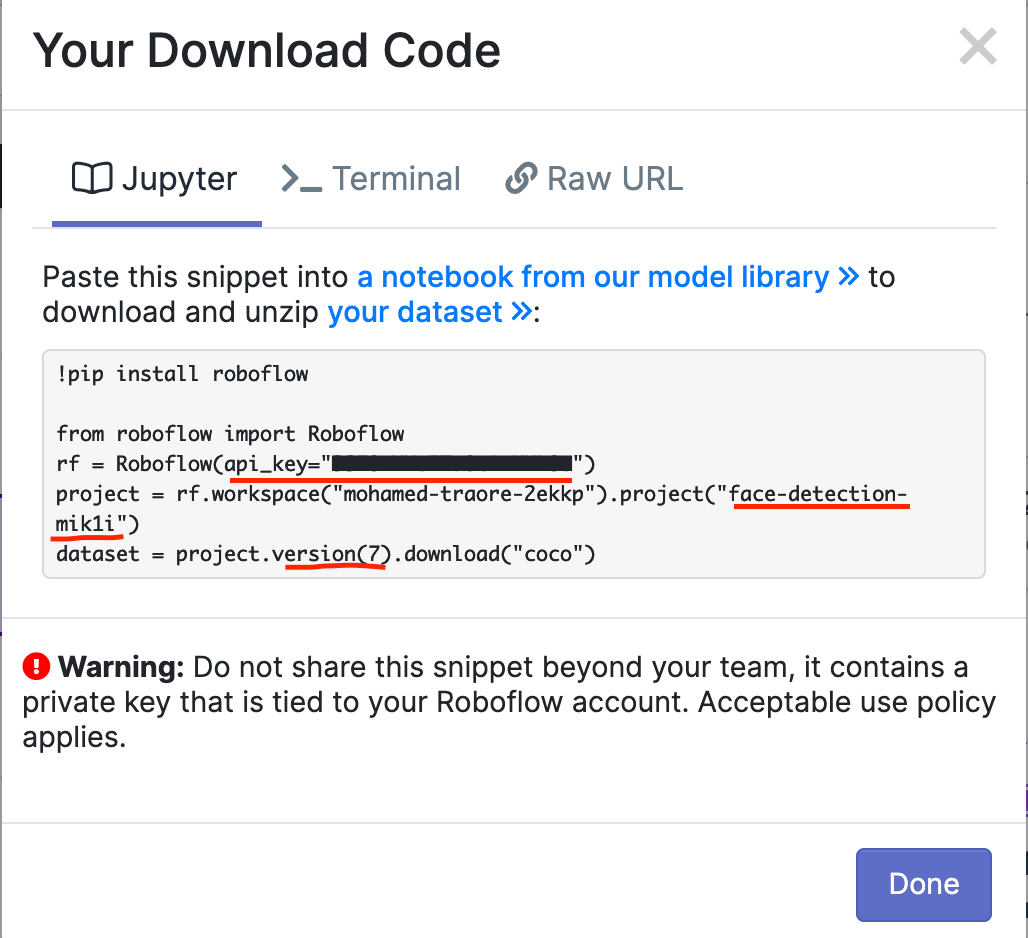

RoboflowFrameProcessorPlugin.swiftin XCode and add your API key, model name, and model version from Roboflow.

Final Step: Run the app on a device using Xcode.

Predictions Format

x:Float //center of object x

y:Float //center of object y

width:Float

height:Float

className:String

confidence:Float

color:UIColor

box:CGRect
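To make the relationship between these fields concrete, here is a minimal sketch. The `Detection` struct is a hypothetical mirror of the fields listed above, not the SDK's own type, and `color` is left out so the example compiles without UIKit. It assumes that `box` is the rectangle with a top-left origin that corresponds to the center point and size; the SDK may compute `box` differently, for example in view coordinates.

```swift
import Foundation

// Hypothetical mirror of the prediction fields listed above (not the SDK's type).
// `color` (UIColor) is omitted so the sketch does not depend on UIKit.
struct Detection {
    let x: Float          // center of object, x
    let y: Float          // center of object, y
    let width: Float
    let height: Float
    let className: String
    let confidence: Float

    // Assumption: the box has a top-left origin derived from the center point.
    var box: CGRect {
        CGRect(
            x: CGFloat(x - width / 2),
            y: CGFloat(y - height / 2),
            width: CGFloat(width),
            height: CGFloat(height)
        )
    }
}

// Example values are made up for illustration.
let coin = Detection(x: 100, y: 80, width: 40, height: 20,
                     className: "quarter", confidence: 0.91)
print(coin.className, coin.box)
```

Because `x` and `y` describe the center of the object, drawing code that expects a top-left origin has to subtract half the width and half the height, as the computed property does here.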

Creating a Custom App

We have more information in our Documentation and GitHub repository to help you with creating a custom app for model deployment.

React Native Expo Example

We also provide an example of integrating this SDK into an Expo app with React Native. You may find it useful when building your own downstream application.

What's Next?

To quickly improve your model, sample images from your app where you notice false or low-confidence detections, then send them back to Roboflow for annotation and retraining.
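One way to decide which frames to sample is to flag any frame that contains a detection below a confidence threshold. The sketch below is only an illustration: `ScoredDetection`, `shouldSample`, and the default threshold of 0.5 are hypothetical names and values, not part of the SDK, and the upload step is deliberately left out.

```swift
import Foundation

// Hypothetical stand-in for a prediction; only the fields used here.
struct ScoredDetection {
    let className: String
    let confidence: Float
}

// Returns true when at least one detection falls below the threshold,
// which marks the frame as a candidate for annotation and retraining.
// The default threshold of 0.5 is an arbitrary illustrative value.
func shouldSample(_ detections: [ScoredDetection], below threshold: Float = 0.5) -> Bool {
    detections.contains { $0.confidence < threshold }
}

// Example values are made up for illustration.
let frame = [
    ScoredDetection(className: "dime", confidence: 0.93),
    ScoredDetection(className: "penny", confidence: 0.31),
]
if shouldSample(frame) {
    print("Save this frame for annotation")
}
```

A confidence threshold only catches low-confidence detections. Confident but wrong detections still need to be spotted by a person or reported by users.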

Share what you made with us on our Show & Tell page in the Community Forum!