And that's a problem that is extremely dangerous.

Machine learning, the process of teaching computer algorithms to perform new tasks by example, is poised to transform industries from agriculture to insurance. But ML models can only be as good as the data on which they're trained.

One much-hyped area where machine learning is going to bring about societal change is in the advent of self-driving cars. But with great power comes great responsibility; a poorly trained self driving car can, quite literally, lead to human fatalities.

That's why we were surprised and concerned when we discovered that a popular dataset (5,100 stars and 1,800 forks) being used by thousands of students to build an open-source self driving car contains critical errors and omissions.

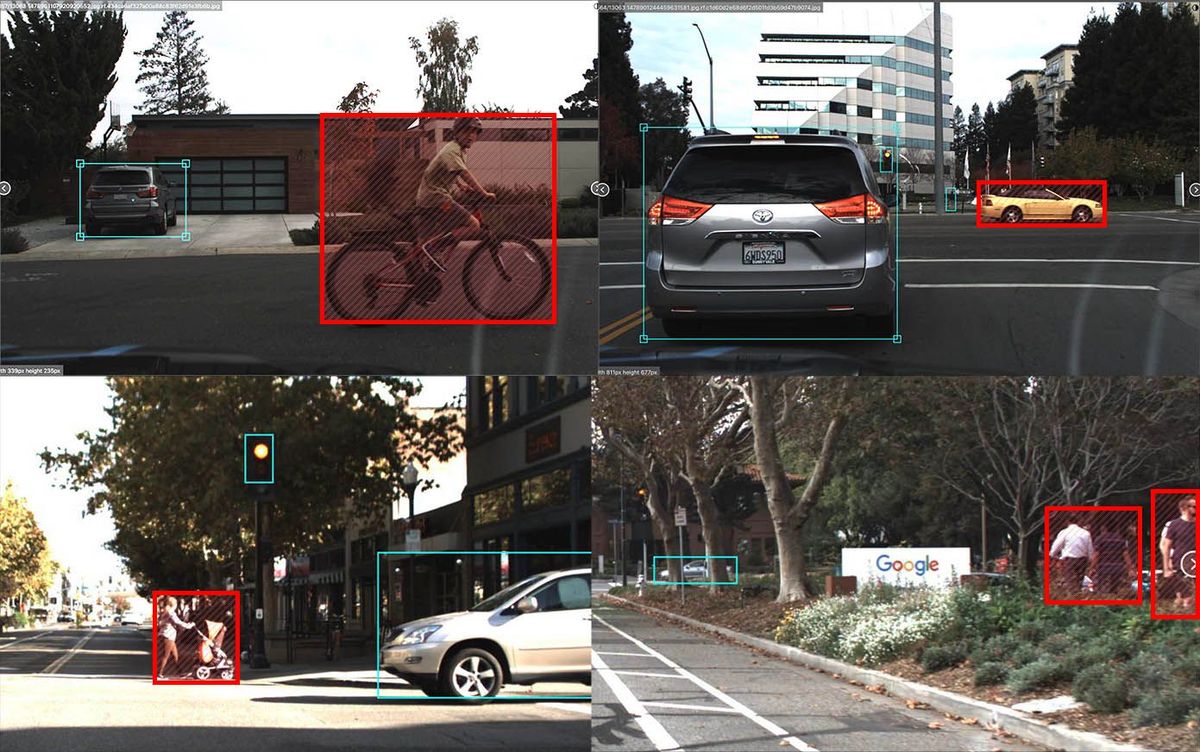

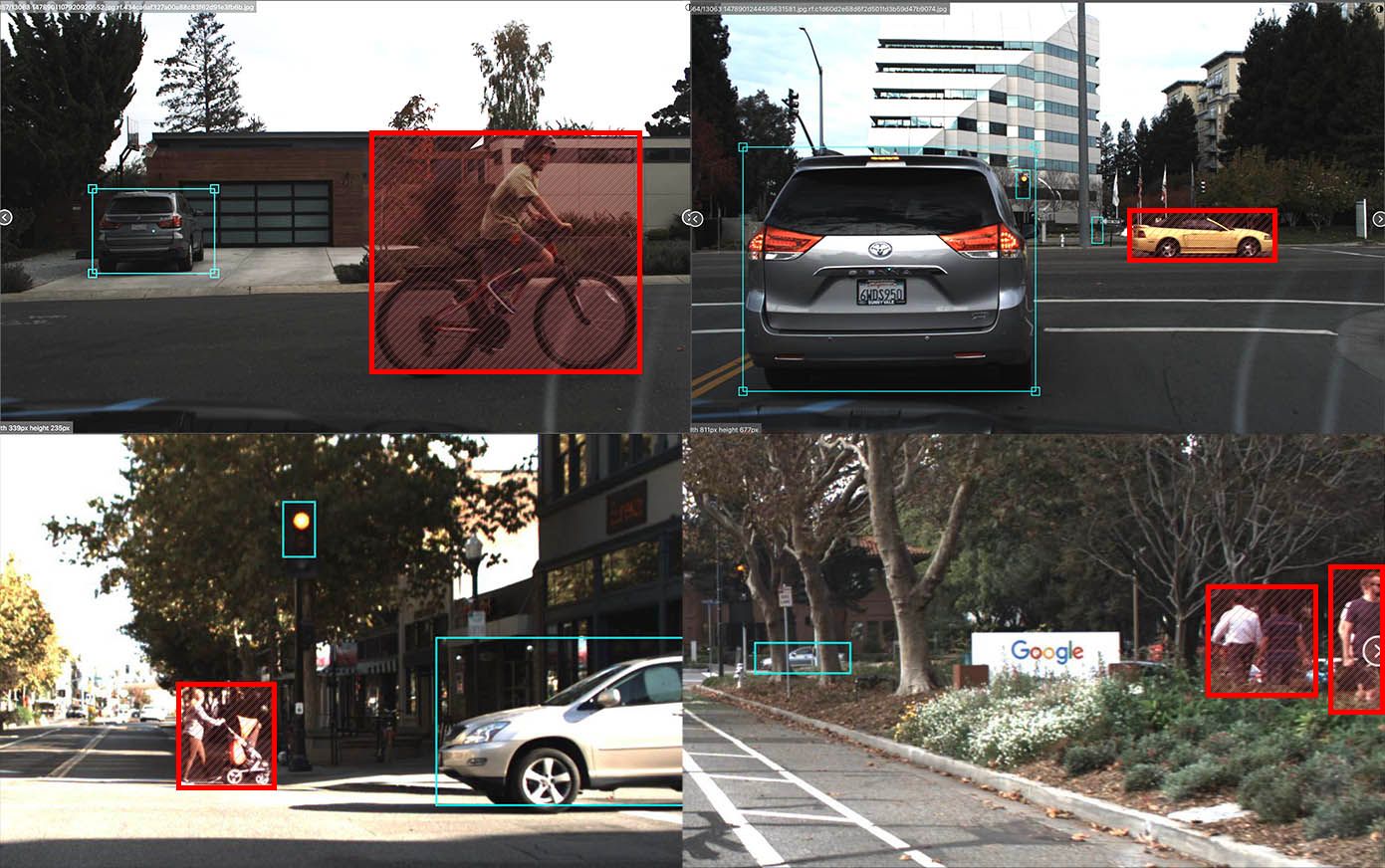

We did a hand-check of the 15,000 images in the widely used Udacity Dataset 2 and found problems with 4,986 (33%) of them. Amongst these were thousands of unlabeled vehicles, hundreds of unlabeled pedestrians, and dozens of unlabeled cyclists. We also found many instances of phantom annotations, duplicated bounding boxes, and drastically oversized bounding boxes.

Perhaps most egregiously, 217 (1.4%) of the images were completely unlabeled but actually contained cars, trucks, street lights, and/or pedestrians.

Open source datasets are great, but if the public is going to trust our community with their safety we need to do a better job of ensuring the data we're sharing is complete and accurate. If you're using public datasets in your projects, please do your due diligence and check their integrity before using them in the wild.

Thanks to their permissive licensing, we've fixed and re-released the Udacity self-driving car dataset referenced in this post in a number of formats for use in your project. If you were training a model on the original, please consider switching to using these updated annotations.

Cite this Post

Use the following entry to cite this post in your research:

Brad Dwyer. (Feb 11, 2020). A popular self-driving car dataset is missing labels for hundreds of pedestrians. Roboflow Blog: https://blog.roboflow.com/self-driving-car-dataset-missing-pedestrians/