Answering the question "how do I deploy a computer vision model?" can be difficult. There are so many options. Which one should you choose? How do you deploy to the option that is right for your use case?

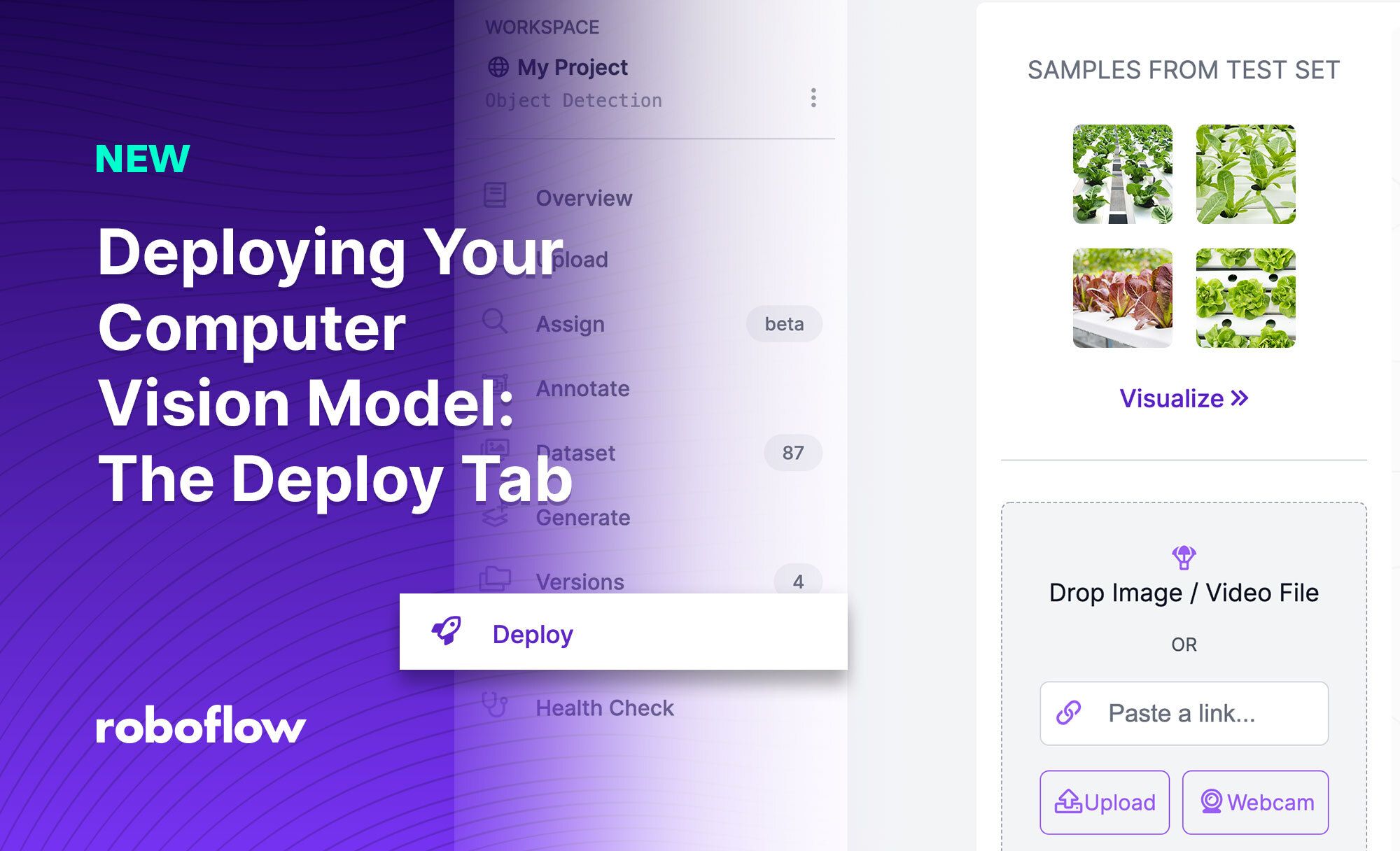

With the launch of the Roboflow Deploy feature, deploying computer vision models suddenly got a lot easier.

Using Deploy, you don't have to worry about writing code to implement v1 demos on a webcam just to validate that your model works before you get around to proper deployment. You also don't have to worry about writing a lot of code when it comes to deploying on many major devices.

In this blog post, we're going to talk about how to deploy a computer vision model with Roboflow Deploy. The Deploy page has production-ready inference capabilities for videos, images, and your webcam. It also includes code snippets for the Hosted Inference API and various edge deployment options that are ready to copy and paste into your web app. Let's begin!

The Roboflow Deployment Tab

We've built an extensive inference tool so that as soon as you train a model, you can start using it with any media format you choose. Let's talk about how to use our inference tool.

Our product allows you to deploy computer vision models using:

- Drag-and-drop for video files and images (useful for testing and demos)

- Webcam via a browser

- Image URLs

- Our API

- The OAK and Jetson deployment options

- Our iOS SDK

With all of these options available, you should have no trouble deploying a computer vision model.

The first question to ask is: what method of deployment is right for you?

Choosing a computer vision deployment method

When you are deciding on a computer vision deployment method, there are a few questions you should ask:

- Am I testing or ready for production? Drag-and-drop and webcam deployment can be useful for testing, demos, and showcases.

- Do I want to trigger inference as a result of submitted content? If you want to run inference on an image or video that has been submitted (i.e. by a user or a customer), an API or URL-triggered inference might be appropriate.

- Do I want to deploy to the edge? If you need greater speed, have specific privacy concerns that limit your deployment options, or need to deploy where there might not be a network connection, an edge deployment device is a good idea.

We support all of these deployment methods for models trained on Roboflow.
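The three questions above can be read as a simple lookup. Here is a minimal sketch of that reasoning in Python. The function name and its arguments are hypothetical and exist only for illustration; they are not part of any Roboflow library.

```python
def suggest_deployment(testing, on_submitted_content, needs_edge):
    """Map the three questions above to the deployment options named in this post.

    Hypothetical helper for illustration only; not a Roboflow API.
    """
    options = []
    if testing:
        # Testing, demos, and showcases
        options += ["drag-and-drop", "webcam"]
    if on_submitted_content:
        # Inference triggered by content a user or customer submits
        options += ["API", "URL"]
    if needs_edge:
        # Speed, privacy, or no reliable network connection
        options += ["OAK", "Jetson", "iOS SDK"]
    return options


# Example: a production app that runs on user uploads and also needs an on-device mode
print(suggest_deployment(testing=False, on_submitted_content=True, needs_edge=True))
```

More than one answer can be "yes", which is why the sketch returns a list rather than a single option.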

Settings

You can select from any of your trained versions and see their training results at the top of the page.

Additionally, you can control the confidence and overlap thresholds for any inferences you make with the inference widget.
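If you're wondering what these two thresholds do, the sketch below shows the general idea in plain Python. It assumes the overlap threshold behaves like a standard non-maximum suppression (NMS) cutoff on intersection-over-union, and it ignores classes for simplicity. It is an illustration of the concept, not the code that runs inside the widget, and the prediction format (`box`, `confidence`) is made up for this example.

```python
def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def filter_predictions(preds, confidence=0.4, overlap=0.3):
    """Drop low-confidence boxes, then drop boxes that overlap a stronger box too much."""
    # Keep only predictions at or above the confidence threshold, strongest first
    candidates = sorted(
        (p for p in preds if p["confidence"] >= confidence),
        key=lambda p: p["confidence"],
        reverse=True,
    )
    kept = []
    for p in candidates:
        # Keep a box only if its IoU with every already-kept box is within the limit
        if all(iou(p["box"], k["box"]) <= overlap for k in kept):
            kept.append(p)
    return kept


# Made-up predictions for illustration
preds = [
    {"box": (0, 0, 10, 10), "confidence": 0.9},
    {"box": (1, 1, 11, 11), "confidence": 0.6},    # heavily overlaps the first box
    {"box": (20, 20, 30, 30), "confidence": 0.2},  # below the confidence threshold
    {"box": (50, 50, 60, 60), "confidence": 0.5},
]
print(filter_predictions(preds))
```

Raising the confidence threshold removes more uncertain predictions, and raising the overlap threshold lets more overlapping boxes through.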

Infer on video files

Drag and drop a video file, or select one from your device, to use the video capabilities. You can also change the playback speed of the video.

Deploy a computer vision model on your webcam

You can also infer in real-time using your webcam. Both video and webcam inference use roboflow.js live in the browser (which you can use yourself).

Infer on image files

Similar to video files, you can drag and drop (or choose from your device) an image file to run inference. We also included samples from the dataset's test set that you can try out and see how your model performs on specific images.

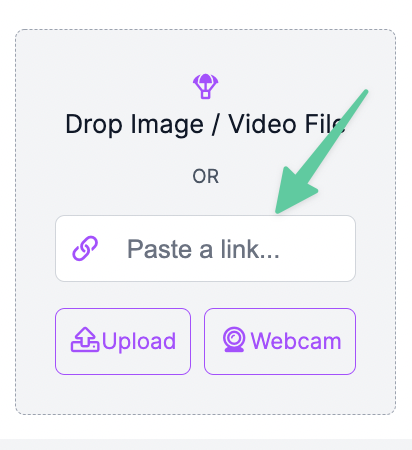

Infer on image URLs

To infer on an image URL, paste the URL into the form input.

Or you can drag and drop images directly from Google image search or other webpages.

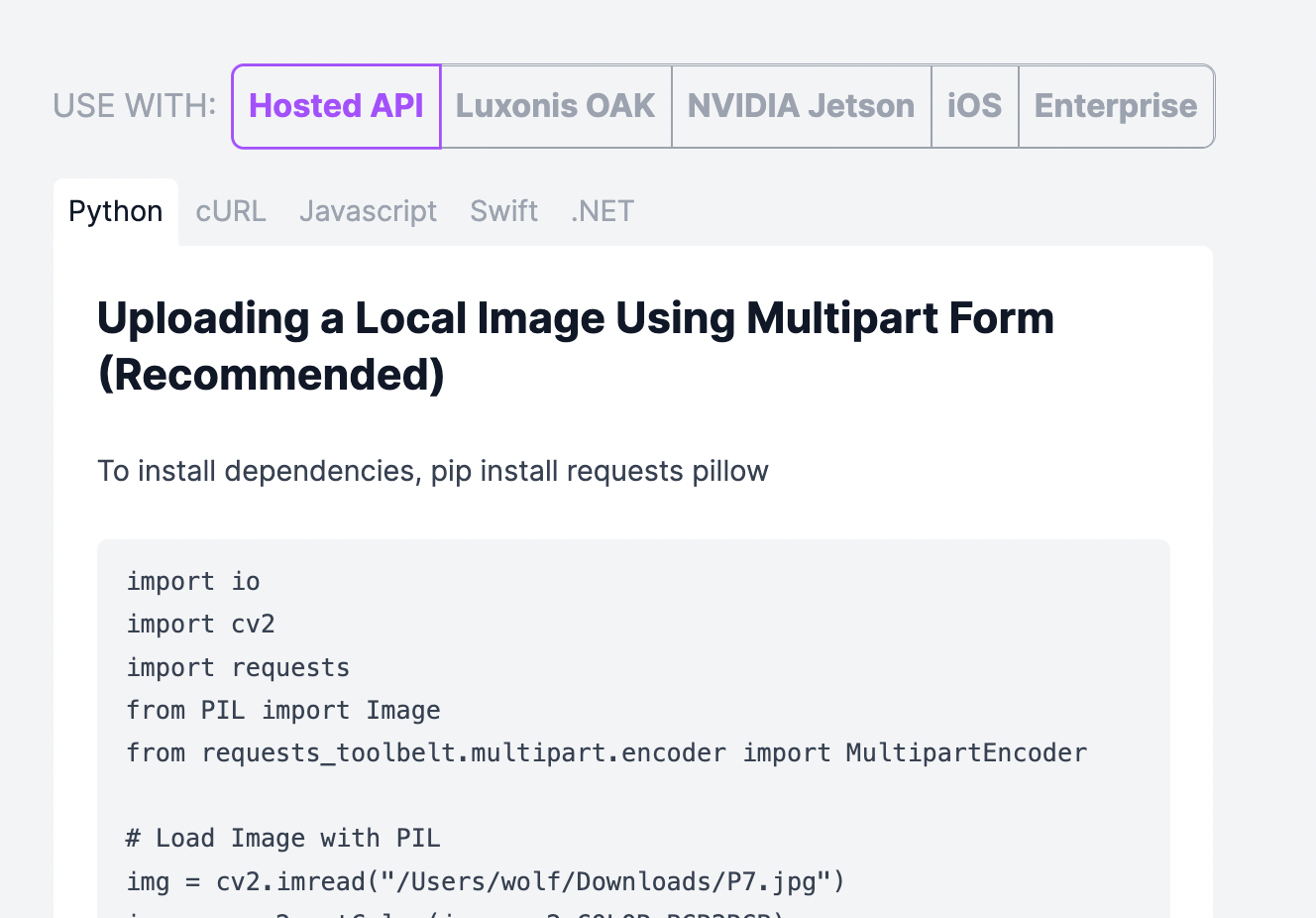

Code Snippets for Edge and iOS Deployment

You can replicate all the functionality (and more) of the inference widget using the code snippets we provide in our docs or at the bottom of the page. All code snippets are pre-filled with your model's endpoint and your API key, so they're ready to copy and paste.

We have code snippets in several languages for using our auto-scaling hosted inference API.
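To give a rough idea of what a request to the hosted API looks like, here is a minimal Python sketch that builds a request URL for an image hosted at a public URL. The base URL, the path layout, and the parameter names (`api_key`, `image`, `confidence`, `overlap`) are assumptions for this illustration, as are the placeholder model ID and key. The snippets on your own Deploy page are the source of truth, so use those in a real project.

```python
from urllib.parse import quote, urlencode

# Assumed base URL; confirm it against the snippet shown on your Deploy page
BASE_URL = "https://detect.roboflow.com"


def build_inference_url(model_id, version, api_key, image_url, confidence=40, overlap=30):
    """Build a request URL for running inference on a publicly reachable image.

    Parameter names are assumptions for illustration; thresholds are percentages.
    """
    query = urlencode({
        "api_key": api_key,
        "image": image_url,        # urlencode percent-escapes the image URL
        "confidence": confidence,
        "overlap": overlap,
    })
    return f"{BASE_URL}/{quote(model_id)}/{version}?{query}"


# Placeholder values: swap in your own model ID, version, and API key
url = build_inference_url("my-model", 1, "YOUR_API_KEY", "https://example.com/cat.jpg")
print(url)
```

You can then send the resulting URL with any HTTP client. Keep your API key out of source control, for example by reading it from an environment variable.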

You can deploy to the edge using Luxonis OAK, NVIDIA Jetson, iOS, and Enterprise deployment options.

More Resources

We have even more in-depth resources for deploying your models in our docs as well as on our YouTube channel.

You can also use the inference widget with, or deploy on your own, any of the thousands of public pre-trained models on Roboflow Universe! Check out the blog post we wrote about Universe to learn more.

Happy Inferencing!
