If you’ve ever tried to explain how computer vision works to your friends, family or colleagues, you probably know that it can be hard to do. This is especially true if you start using the common jargon (e.g. neural networks, hyperparameters, machine learning), as these terms can sometimes be counterproductive. Instead of adding clarity, they complicate the matter further.

We know, however, that computer vision is already touching all of our lives, from social media to transportation, online shopping to photos stored in the cloud. Believe it or not, most of us interact with machine learning algorithms every day.

It’s understandable that we don’t always perceive the introduction of computer vision around us. This is due (in part) to the fact that technology everywhere is evolving to more closely imitate human behavior: the better it gets, the less we notice its adoption into our favorite tools and online resources. Computer vision is the automation of human sight, one of our most basic, innate senses.

If you’re having trouble convincing your supervisors to explore computer vision as a means to streamline workflows or supercharge productivity, or explaining your new job at a computer vision startup company to your friends and family, follow along. We’ll illustrate how some well-known brands are using computer vision today.

Apple

How Apple uses computer vision

If you’ve activated the facial recognition feature on your iPhone (Apple Face ID), you are running a computer vision model locally (on your device) each time you unlock your phone. Face ID was introduced with the iPhone X in 2017, and the really remarkable aspect of this feature is how Apple successfully addressed the challenges of getting deep learning algorithms running on the iPhone itself, instead of through the cloud.

This technology recognizes the unique features of your face using techniques such as keypoint detection or instance segmentation, two common task types in computer vision.

How Facebook uses computer vision

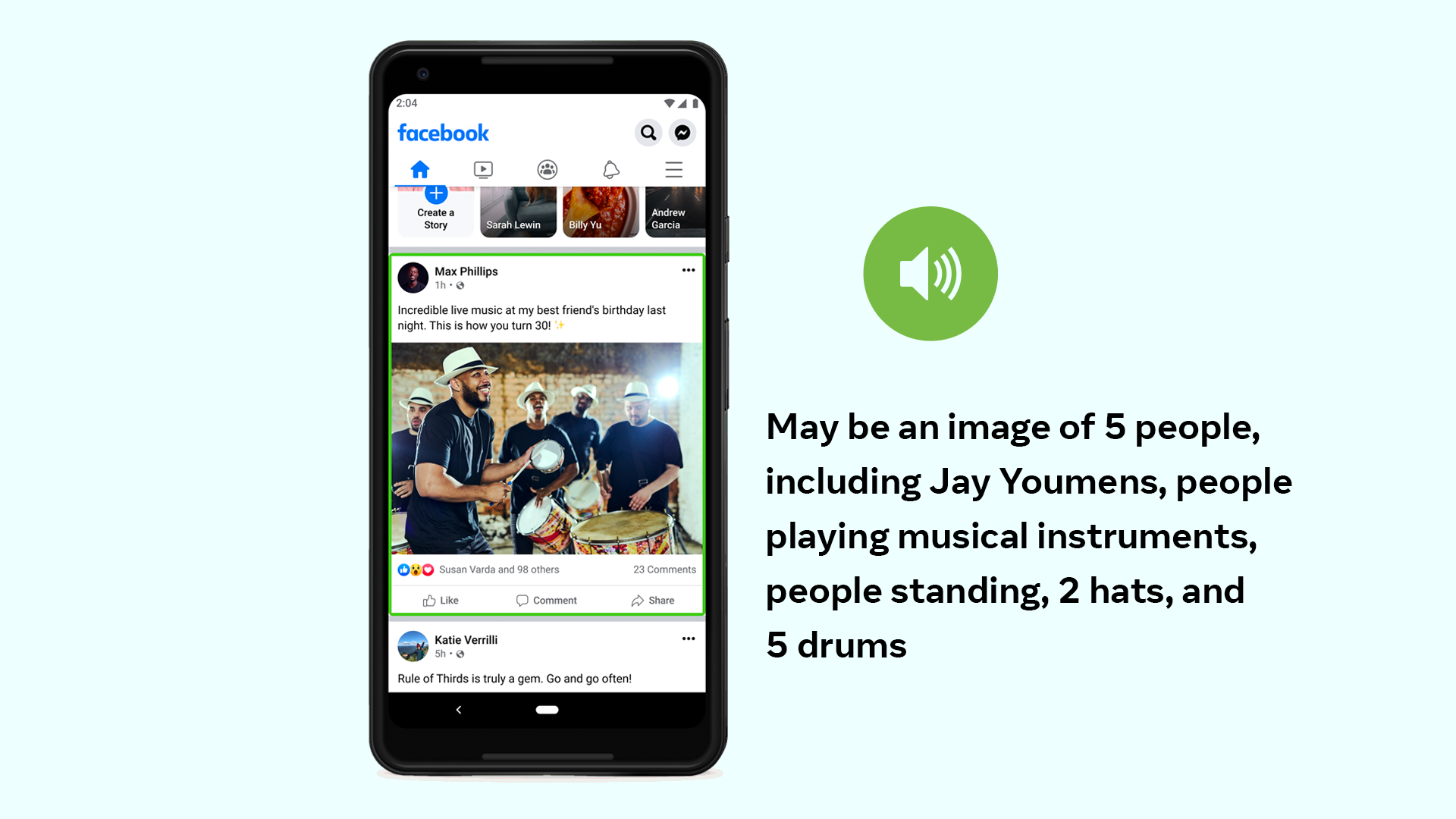

Facebook is a leading company in computer vision and embeds it throughout its products. For example, Facebook uses computer vision to improve accessibility. If a user is blind or visually impaired, Facebook is able to identify what is happening in a photo and provide an auditory description of that scene.

Another example: Facebook helps you identify the right people to tag in photos. Because it can recognize you (or your friends), Facebook can also notify you when a fraudulent account is using photos of you.

Uber

How Uber uses computer vision

When the pandemic struck, Uber sought to find ways to ensure its drivers and riders adhered to the newly established health and safety guidelines. The company created a mask detection model that required its drivers to take a photo of themselves at the beginning of each shift. Those selfies were fed into a computer vision model trained to detect the presence of a face mask.

You can create your own mask detector with our public mask dataset.

Airbnb

How Airbnb uses computer vision

Airbnb plays host to millions of photos uploaded by its users every year. A listing on the platform may not mention every amenity the property offers, and the machine learning algorithm developed by the Airbnb Engineering team in 2019 is designed to fill those gaps.

Items visible in photographs of the property - including hair dryers in the bathrooms, pressure cookers on kitchen counters, and outdoor/indoor pools - are noted in the online listing, even if the host forgot to check those respective boxes. This effort required annotating over 50,000 images across 40 classes. That's a five-star review in our books!
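The gap-filling idea can be sketched in a few lines of Python. This is only an illustration, not Airbnb's implementation: the function name, the `(label, confidence)` detection format, and the 0.8 confidence threshold are all assumptions made for the example.

```python
def missing_amenities(detections, checked, min_confidence=0.8):
    """Return amenities seen in photos that the host did not check.

    detections: (label, confidence) pairs from a hypothetical detection model.
    checked:    amenity labels the host selected in the listing form.
    """
    # Keep only detections the (assumed) model is reasonably sure about.
    confident = {label for label, conf in detections if conf >= min_confidence}
    # Anything detected but not checked is a candidate to add to the listing.
    return sorted(confident - set(checked))


# Made-up example data for one listing.
detections = [("hair dryer", 0.93), ("pool", 0.88), ("pressure cooker", 0.41)]
checked = {"pool", "wifi"}
suggested = missing_amenities(detections, checked)
print(suggested)
```

Here the pool is already checked and the pressure cooker falls below the threshold, so only the hair dryer is suggested.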

Instagram

How Instagram uses computer vision

Popular photo-sharing app Instagram is fertile ground for vision technology, especially in the effort to moderate content posted to the platform more efficiently (and effectively). After all, keeping spam to a minimum is key to ensuring its users have a positive experience. Researchers have identified one technique that has proven particularly successful: comparing the caption of an image to the predictions generated by a computer vision model. If the two are significantly mismatched, the photo is likely spam.
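A minimal sketch of the caption-versus-prediction check might look like the following. It assumes a vision model has already produced single-word labels for the image; the function names and the 0.2 threshold are hypothetical, and a production system would likely compare text and image representations in a more sophisticated way.

```python
import re


def caption_label_overlap(caption, predicted_labels):
    """Fraction of predicted labels that also appear as words in the caption."""
    words = set(re.findall(r"[a-z]+", caption.lower()))
    labels = [label.lower() for label in predicted_labels]
    if not labels:
        # No predictions means nothing contradicts the caption.
        return 1.0
    hits = sum(1 for label in labels if label in words)
    return hits / len(labels)


def looks_like_spam(caption, predicted_labels, threshold=0.2):
    """Flag a post when caption and image predictions barely overlap."""
    return caption_label_overlap(caption, predicted_labels) < threshold


# Labels a hypothetical model might return for a beach photo.
labels = ["beach", "dog", "sunset"]
genuine = looks_like_spam("Sunset at the beach with my dog", labels)
suspicious = looks_like_spam("Click the link to win a free phone!!!", labels)
print(genuine, suspicious)
```

The first caption mentions everything the model sees, so it passes; the second shares no words with the predictions and is flagged.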

Instagram has also been home to more cutting-edge techniques, like generative models: machine learning models that can create entirely synthetic images. Virtual fashion models (known as digital men and women) are slowly replacing their real-life counterparts in publications, on websites, in advertisements and even on social media.

The virtual model Imma, for example, has 50,000 followers on Instagram. She first appeared in an advertisement for Kate cosmetics alongside two (real) models on Vice’s i-D site in Japan. Every detail of her appearance was designed by ModelingCafe, a CGI firm in Tokyo - from the lighting on her face to the dark roots peeking through her bright pink (presumably colored) hair.

Madison Reed

How Madison Reed uses computer vision

The world of online shopping will soon be saturated with computer vision models that will help us do everything from “trying on” new clothes and shoes to evaluating whether that new sofa matches the décor in our living room. Companies like Madison Reed are already leveraging computer vision in this capacity, using it to help women overcome the most common objection in choosing a new hair color: will this look good?

Madison Reed rolled out an AI-powered personality in 2016 named Madi, a chatbot infused with computer vision that recognizes a woman’s hair color and recommends a match based on her unique skin tone and complexion. You can find her on the Facebook Messenger app. This technology rivals a consultation with a professional stylist/colorist, and can loop in a human expert on command if the consumer wants that human touch after all.

How Pinterest uses computer vision

Even Pinterest is getting into the game of computer vision. With the Lens Your Look feature, Pinterest users can upload a picture of themselves, their favorite blue jean jacket, a snap of their bedroom or a treasured vintage flower vase, and Pinterest will recommend pins to follow or similar boards for more inspiration. The differentiator in this piece of technology is how it groups similar items into themes using style, color, pattern and other identifiers.

How Google uses computer vision

At this point, most of us have personal photo libraries that span years or even decades of our lives. In between all those pictures of our pets and fancy meals, there are almost certainly a few gems - and if you’ve ever experienced the frustration of scrolling endlessly through your own library to find that one photo, you’ll be glad to know that computer vision has already saved the day.

You can now search through your own Google Photos library using text prompts in the search field; this could include finding pictures from a concert you attended at Bonnaroo, or your cousin’s high school graduation. I used it in my own photo library just today to find pictures of beaches.
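One simple way to picture text search over photos is an index from labels to images. The sketch below assumes a vision model has already assigned labels to each photo; the file names, labels, and function names are made up for illustration, and this is not a description of how Google's search actually works.

```python
from collections import defaultdict


def build_label_index(photo_labels):
    """Map each label to the set of photos a (hypothetical) model tagged with it."""
    index = defaultdict(set)
    for photo, labels in photo_labels.items():
        for label in labels:
            index[label.lower()].add(photo)
    return index


def search(index, query):
    """Return photos tagged with every word in the query."""
    terms = query.lower().split()
    if not terms:
        return []
    # A photo matches only if it appears under all query terms.
    matches = set.intersection(*(index.get(term, set()) for term in terms))
    return sorted(matches)


# Made-up library with labels a model might have predicted.
library = {
    "IMG_001.jpg": ["beach", "sunset"],
    "IMG_002.jpg": ["dog", "beach"],
    "IMG_003.jpg": ["concert"],
}
index = build_label_index(library)
beach_photos = search(index, "beach")
beach_dog_photos = search(index, "beach dog")
print(beach_photos, beach_dog_photos)
```

Searching for "beach" returns both beach photos, while "beach dog" narrows the result to the one photo carrying both labels.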

What’s Next?

The next wave of evolution in tech will be driven by advancements in computer vision, artificial intelligence and machine learning. These tools will continue to touch and improve our lives in almost every way, from medicine to retail, manufacturing to supply chain logistics. There are startups springing up in every part of the world, ready to leverage this powerful technology in myriad ways and functions.

“The applications of computer vision are so varied that it is hard to imagine a business that couldn't benefit from it.” - Bernard Marr, Forbes

These are just a few light-hearted examples of how computer vision is already in use by some of our favorite brands, of course, but we know that computer vision is so much bigger than even we can imagine. Domain experts in fields ranging from healthcare and wildlife conservation to global warming research and oil and gas refining are working hard to create a better, safer and cleaner world.

Stay Ahead of the Curve

Computer vision isn’t only for large technology companies. With Roboflow, teams in all industries deploy state-of-the-art vision techniques. Contact us to learn more about how this technology can be used to streamline workflows, mitigate risk or supercharge industries.

Cite this Post

Use the following entry to cite this post in your research:

Kaylee Williams. (Jul 5, 2021). How Your Favorite Brands Are Using Computer Vision. Roboflow Blog: https://blog.roboflow.com/how-your-favorite-brands-are-using-computer-vision/