On July 29th, 2024, Meta AI released Segment Anything 2 (SAM 2), a new image and video segmentation foundation model. According to Meta, SAM 2 is 6x more accurate than the original SAM model at image segmentation tasks. This article uses the Segment Anything model.

In April 2023, Meta Research took the computer vision world by storm with the release of the Segment Anything Model (SAM), a powerful image segmentation model. This model has strong segmentation capabilities: given a photo, SAM can, with a high degree of precision, generate masks that segment objects in the image. SAM was trained on the SA-1B dataset of over 11 billion segmentation masks.

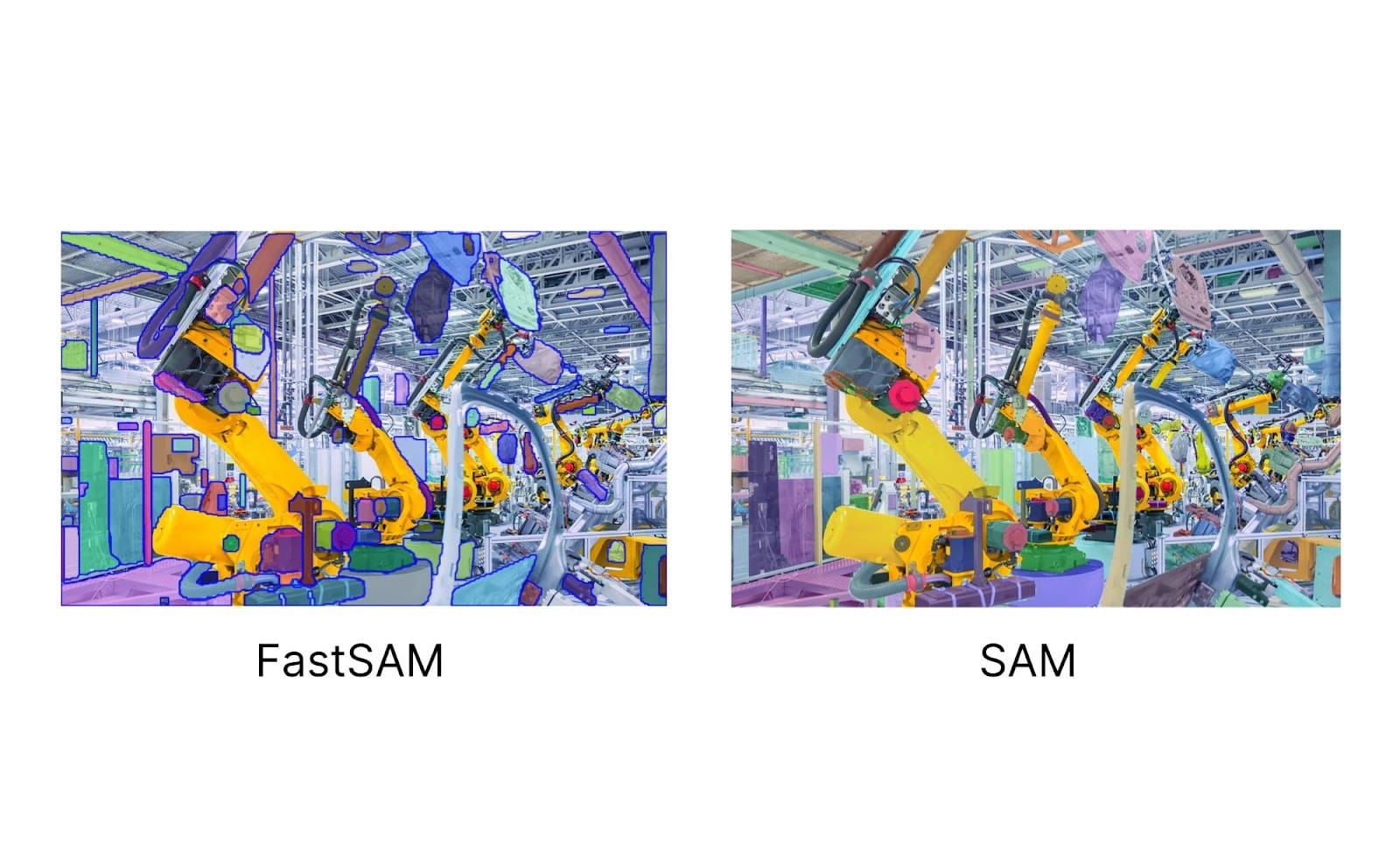

This month, a new paper was published detailing Fast Segment Anything (FastSAM), a new model trained on 2% of the original SAM dataset. This is accomplished through dataset distillation and knowledge distillation tactics. In our testing, we have found this model is capable of producing relatively precise masks for a range of domains, although to a lesser extent than the canonical SAM model.

In this guide, we are going to talk through what FastSAM is, for what the model is useful, and what we can learn from FastSAM about segmentation.

Without further ado, let’s get started!

NOTE: On July 29th, 2024, Meta AI released Segment Anything 2 (SAM 2), a new image and video segmentation foundation model. According to Meta, SAM 2 is 6x more accurate than the original SAM model at image segmentation tasks.

What is FastSAM?

FastSAM, released by the Image and Video Analysis Group at the Chinese Academy of Sciences, is an image segmentation model trained on 2% of the data used to train the groundbreaking Segment Anything Model (SAM). FastSAM has lesser computational requirements than SAM, while still achieving strong accuracy.

Instance segmentation on COCO 2017 results from the FastSAM repository.

FastSAM was trained using the Ultralytics YOLOv8 instance segmentation architecture. This is interesting because it shows the strength of the dataset on which the original SAM was trained: using only a portion of the dataset, researchers were able to create a model that segments objects in images with relatively precise boundaries.

In addition, the FastSAM authors report a 50x faster run-time speed, making the model more practical to run than the original SAM model.

FastSAM works using a two-stage process:

- A pre-trained YOLOv8 instance segmentation model generates segmentation masks, then;

- Optionally, a text prompt can be specified to return masks related to the prompt. This is done using CLIP. CLIP is run over each mask and images with a close similarity to the text prompt are returned as a match.

The FastSAM authors note that the model can also be used for zero-shot edge detection and object proposal generation.

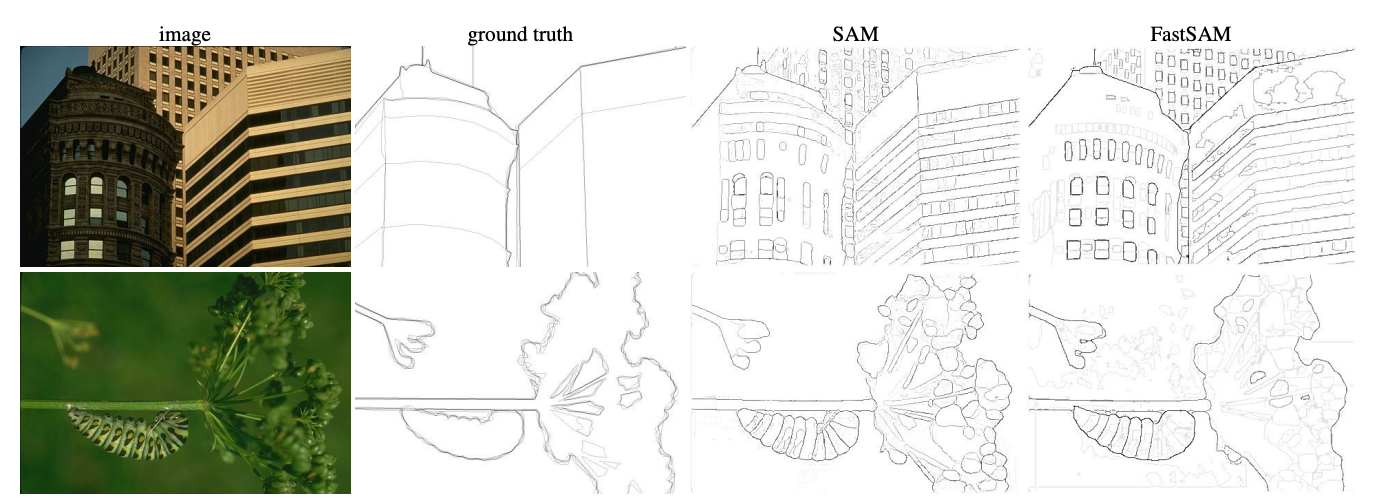

In the case of zero-shot edge detection, the FastSAM authors reported that, through qualitative observation, "despite FastSAM’s significantly fewer parameters (only 68M), it produces a generally good edge map.” The following image, from the FastSAM paper, shows the quality of FastSAM edge boundaries:

In this example, we can see the boundaries are less precise than those of SAM, but are nonetheless impressive given the model is trained on a significantly smaller dataset than SAM.

FastSAM Use Cases

One of the biggest learnings from FastSAM is that a model can replicate an output similar to SAM using a small, real-time model. FastSAM also demonstrates that the dataset on which SAM was trained is strong: with only a portion of the dataset, FastSAM was able to generate relatively precise masks.

FastSAM could be used as a transfer-learning checkpoint for training YOLOv8 models, given the strong performance achieved by the model. You could give text prompts to FastSAM to generate masks for your particular task.

With that said, FastSAM is not a replacement for SAM. While FastSAM achieves impressive performance compared to SAM given the smaller dataset on which FastSAM was trained, SAM is capable of producing more precise segmentation masks.

Conclusion

FastSAM is an image segmentation model trained on a portion of the dataset on which Meta Research’s SAM model was trained. Inference on FastSAM, as the name suggests, is faster than that of the SAM model. Fast Segment Anything could be used as a transfer-learning checkpoint, and demonstrates the quality of the SAM dataset. With that said, masks from FastSAM are less precise than masks generated by SAM.

Cite this Post

Use the following entry to cite this post in your research:

James Gallagher. (Jun 30, 2023). What is Fast Segment Anything (FastSAM)? The Ultimate Guide.. Roboflow Blog: https://blog.roboflow.com/what-is-fastsam/