Fastai, the popular deep learning framework and MOOC, has released fastai v2 with improvements to the fastai library, a new online machine learning course, and new helper repositories.

In this post, we will dive into what is new in fastai v2 and why you should check it out!

What is fastai?

Fastai is a completely self-funded non-profit with the mission of:

Making neural nets uncool again

The fastai team, Jeremy Howard, Rachel Thomas, and Sylvain Gugger, is working to make AI understandable, accessible, and usable for anyone in the world.

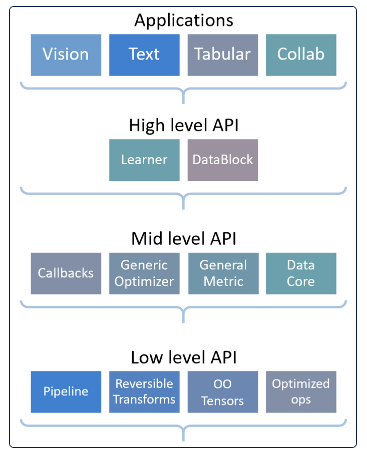

The fastai team is working to democratize artificial intelligence by developing their stack at differing layers of abstraction.

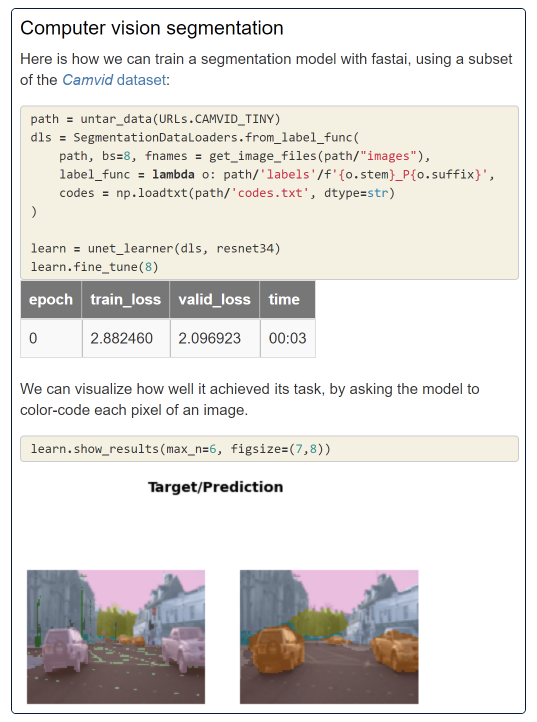

The topmost layer can be implemented with just a few lines of code to perform complex AI tasks in computer vision and natural language processing. For example, if you want to train a model in fastai to semantically segment portions of an image, it can be as quick as a few lines of code.

The high-level API is reminiscent of Facebook's Detectron2, the TensorFlow Object Detection API, or Hugging Face Transformers. It makes it extremely easy to get started, and new users can quickly see the impact of their creations.

The mid level allows for more customization as you become more familiar with the library. This is where things like the data loader and customizable training loop live (more on these later). When you want to adapt the library to your own uses and make contributions, this is the layer to work in.

And finally, the lowest layer reaches down to operations performed and optimized for the GPU. This allows deep learning researchers to tune low-level configurations and run experiments before that functionality is provided by NVIDIA's cuDNN.

Fast.ai v2 Library Enhancements

Fastai 2 extends the popular fastai library by building more flexibility and ease of use into the prior library. Fastai 2 boasts consistency across domains, meaning that students and researchers can use the library to make small migrations from subtask to subtask, and to make large migrations from NLP to computer vision and back again.

Fastai is built on top of PyTorch, and many of the enhancements in Fastai 2 build on and update basic PyTorch functionality.

The Infinite Training Loop

The feature of the fastai library that I am most excited about is the fastai v2 infinite training loop. It is not infinite in the sense that training runs forever; training is fast. Rather, it is infinite in the number of ways you can customize it.

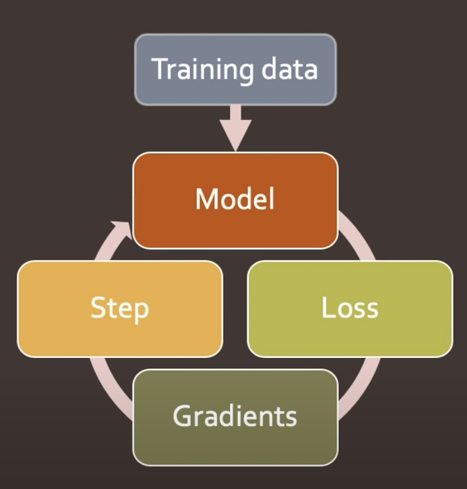

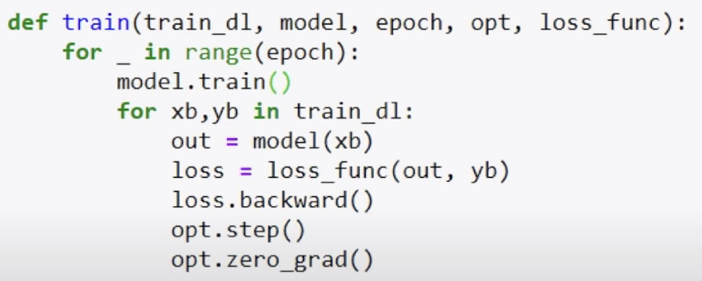

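Boiled down, the typical training loop in PyTorch repeats the same steps for every batch: run the model forward to get predictions, compute the loss, backpropagate to get gradients, and step the optimizer to update the parameters.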

And if you want to implement customizations on top of that training loop (say to implement mixed precision training or MixUp data augmentation) you might interject sections of code to make your customizations. This can become quite entangled and is difficult to pass along to the next researcher, or to edit later with new tweaks.

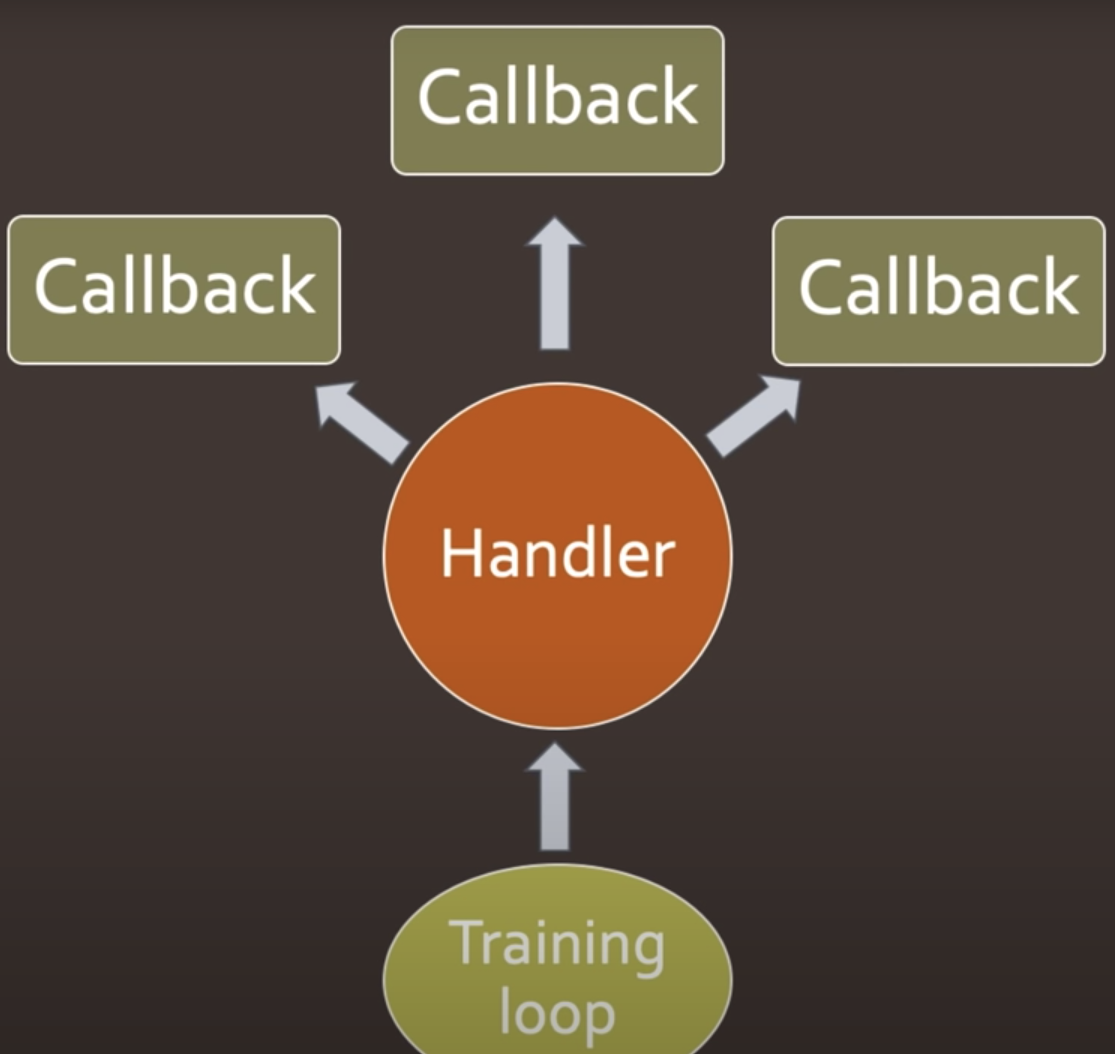

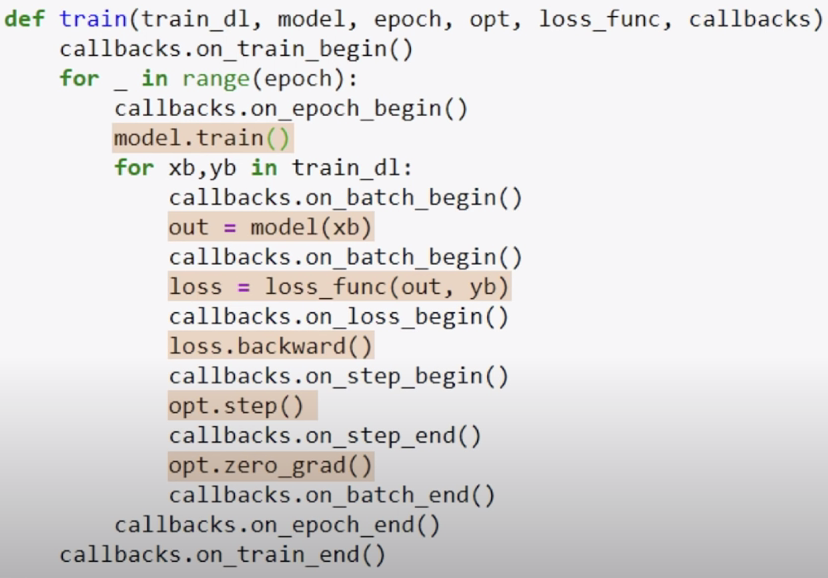

To address this problem, fastai builds the infinite training loop by placing callback hooks between the steps of the loop, so the programmer can inject new techniques without editing the loop itself.

And implemented in example code:
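To make the idea concrete, here is a minimal, dependency-free sketch of a callback-driven loop in plain Python. It is not fastai's `Learner` or `Callback` API: the class names, the hook names (`before_fit`, `before_batch`, `after_loss`, `after_epoch`), and the toy linear model with hand-written gradients are all assumptions made for illustration. The `MixUp` callback follows the original MixUp recipe (one blend weight per batch drawn from a Beta distribution), which may differ in detail from fastai's own version.

```python
import random


class Callback:
    """Base class: every hook is a no-op unless a subclass overrides it."""
    def before_fit(self, loop): pass
    def before_batch(self, loop): pass
    def after_loss(self, loop): pass
    def after_epoch(self, loop): pass


class MixUp(Callback):
    """Blend each example with a randomly chosen partner from the same batch."""
    def __init__(self, alpha=0.4, seed=0):
        self.alpha, self.rng = alpha, random.Random(seed)

    def before_batch(self, loop):
        lam = self.rng.betavariate(self.alpha, self.alpha)  # blend weight in (0, 1)
        partners = loop.batch[:]          # copy, so the original batch is untouched
        self.rng.shuffle(partners)
        loop.batch = [(lam * x1 + (1 - lam) * x2, lam * y1 + (1 - lam) * y2)
                      for (x1, y1), (x2, y2) in zip(loop.batch, partners)]


class LossRecorder(Callback):
    """Store the loss of the last batch of every epoch."""
    def before_fit(self, loop): self.losses = []
    def after_epoch(self, loop): self.losses.append(loop.loss)


class TrainingLoop:
    """Hypothetical loop that fits y = w*x + b with SGD on mean squared error."""
    def __init__(self, lr=0.1, callbacks=()):
        self.w, self.b, self.lr = 0.0, 0.0, lr
        self.callbacks = list(callbacks)

    def _call(self, hook):
        # Run the named hook on every registered callback, in order.
        for cb in self.callbacks:
            getattr(cb, hook)(self)

    def fit(self, batches, epochs):
        self._call("before_fit")
        for _ in range(epochs):
            for batch in batches:
                self.batch = batch
                self._call("before_batch")            # callbacks may rewrite the batch
                n = len(self.batch)
                errors = [self.w * x + self.b - y for x, y in self.batch]  # forward
                self.loss = sum(e * e for e in errors) / n                 # loss
                self._call("after_loss")
                grad_w = sum(2 * e * x for e, (x, _) in zip(errors, self.batch)) / n
                grad_b = sum(2 * e for e in errors) / n                    # backward
                self.w -= self.lr * grad_w                                 # step
                self.b -= self.lr * grad_b
            self._call("after_epoch")
        return self


# Toy data on the line y = 3x + 1, split into three interleaved batches.
data = [(i / 10, 3 * (i / 10) + 1) for i in range(-10, 11)]
batches = [data[k::3] for k in range(3)]

recorder = LossRecorder()
loop = TrainingLoop(lr=0.1, callbacks=[MixUp(alpha=0.4, seed=0), recorder])
loop.fit(batches, epochs=100)
print(round(loop.w, 2), round(loop.b, 2))
```

The body of `fit` never changes: adding or removing `MixUp` or `LossRecorder` is a one-item change to the `callbacks` list, which is the property that keeps customizations from tangling with the loop.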

With the callbacks imported, you can separate them in your repository, mix and match, and share them through contributions.

GPU Accelerated Augmentation

Image augmentations generate additional training data by making tweaks to your original training set images. For example, you can augment your training data by performing random crops, random rotations, and flips.

The fastai 2 library supports most common computer vision augmentations and applies them to each batch during training, accelerated on the GPU.

These are just a few highlights from the new fastai library. If you'd like to go deeper, I recommend exploring the repository and reading the fastai v2 white paper.
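As a toy illustration of what per-batch augmentation means, the sketch below applies a random horizontal flip and a random crop to every image in a batch. It runs on the CPU in plain Python with images as nested lists, so it shows only the transformations themselves, not fastai's GPU implementation; the function names are hypothetical.

```python
import random


def random_flip(image, rng, p=0.5):
    """Mirror the image left-to-right with probability p."""
    return [row[::-1] for row in image] if rng.random() < p else image


def random_crop(image, size, rng):
    """Cut a size x size window out of the image at a random position."""
    top = rng.randint(0, len(image) - size)       # randint includes both ends
    left = rng.randint(0, len(image[0]) - size)
    return [row[left:left + size] for row in image[top:top + size]]


def augment_batch(batch, size, seed=None):
    """Apply an independent random flip and crop to every image in the batch."""
    rng = random.Random(seed)
    return [random_crop(random_flip(img, rng), size, rng) for img in batch]


# A 4x4 "image" whose pixel values are 0..15, repeated to form a batch of three.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
batch = augment_batch([image, image, image], size=3, seed=0)
for img in batch:
    print(img)
```

Because the random choices are redrawn every time a batch is built, the model sees a slightly different version of each image in every epoch.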

Fastai V2 Helper Libraries

As if a new and improved deep learning framework weren't enough to take on, fastai also published a number of helper repositories for their new library and course. These include fastcore, fastscript, and fastgpu.

fastcore adds features to Python from other languages to make fastai even better. It also adds some missing features to popular Python libraries like NumPy.

fastscript is a small library that makes it easy for a developer to package up their Python code and share it as a simple command-line tool.

fastgpu is a convenient library for orchestrating GPUs.

Fastai V2 MOOC

The best part of all of this is that Jeremy and Sylvain have recorded a lecture series explaining the foundations of machine learning and implementing techniques with their new library.

Check out the new course! And check out our fastai2 classification tutorial.

Cite this Post

Use the following entry to cite this post in your research:

Jacob Solawetz. (Aug 28, 2020). Fast.ai v2 Released - What's New?. Roboflow Blog: https://blog.roboflow.com/whats-new-in-fastai2/