This is a guest post by Kristen Kehrer, Developer Advocate at CometML. Since 2010, Kristen has been delivering innovative and actionable machine learning solutions across multiple industries, including utilities, healthcare, and eCommerce. Kristen was a LinkedIn Top Voice - Data Science & Analytics in 2018.

Welcome back to the tutorial on creating a school bus detector! In the last article we set up the camera using OpenCV and started annotating images in Roboflow. If you missed that article, you can find it here.

The GitHub repo with the code for this project can be found here. Since I’m talking more about Roboflow in this article, I thought it would be fun to guest blog on the Roboflow site. All of the articles in this series will be linked so that they’re easy to navigate.

In this article we’ll go over:

- Annotating data in Roboflow

- Getting the images out of Roboflow

- Creating a Comet data artifact

- Training our YOLOv5 model

For the next phase of the project, we need to download the annotated data from Roboflow and then create a data artifact in CometML to track the data lineage. You might be thinking, “hey, if the data is already in Roboflow, why do you also need it in Comet?” Great question.

Before I started with Roboflow or Comet I made an absolute mess of my image data. I had a folder of images taken from my phone, a folder of images from my camera, and another from the internet. I created additional folders to hold the data that I hadn’t yet annotated. Data was everywhere.

I put the project down for a couple of months during the summer, and when I picked it back up I had no idea which data I had used last. I had to dig through folders extensively to figure out which one had the correct data. With the data lineage in Comet, I’m always going to know what data I was using last, and I’ll have all of my code, data, dependencies, training runs, etc. in one place. So even if I had not made a mess of my data, it is still valuable to have all of the data in one place for when I need to find it in the future, or if I’m sharing the Comet workbook with someone else.

I originally found Roboflow when I was looking to do some data augmentation. The data I finally ended up using is not augmented because the security camera will always be in the same place, and it will always be looking at the same scene outside my house. There’s no need to add image mutations that it will never see in the wild.

The Roboflow UI made it easy to upload my data, do the annotations, do the augmenting (I had still played with it), and then download the files in whatever file format I need for building my model. It also handles setting up training and validation sets for you. Roboflow is an end-to-end solution, but I had already planned on trying the YOLOv5 library, so I used the Roboflow Python library to access the data.

Annotating Your Images

We left off in the last article with annotating your images. Annotating over 1,000 images took me about 3 hours. I consider that to be quite quick, and I think it’s largely due to Roboflow’s awesome UI. Instead of talking through the steps, here’s a quick 30-second video that shows you how I annotated my images in Roboflow.

Annotating school buses in Roboflow

Getting Images Out of Roboflow

To get the images out of Roboflow, we start by clicking the “export” button. You’ll see that I’ve already chosen the YOLOv5 PyTorch format, which is what we’ll need for this project. I’m choosing to “show download code” so that I can add it to my repo. The export also includes a “data.yaml” file that lists the location of the test/train/validate image sets within the data artifact.

The script will use the Roboflow API to download the image data to a staging directory. I could have continued my project in Roboflow as they offer tools for all phases of a computer vision project. However, in this particular version of my project I was looking to use the new CometML integration with YOLOv5.
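Before uploading anything, it can help to confirm that the download landed the way you expect. Below is a minimal sketch, assuming the usual layout of a YOLOv5 PyTorch export: a `data.yaml` at the top level plus `images` and `labels` folders under each of `train`, `valid`, and `test`. The `summarize_export` helper and the `demo-export` folder are hypothetical names for illustration, not part of the project repo.

```python
import tempfile
from pathlib import Path

# Assumed export layout (not checked by the project script itself):
# <staging>/data.yaml and <staging>/<split>/{images,labels} per split.
SPLITS = ("train", "valid", "test")


def summarize_export(staging_directory):
    """Count image and label files per split and note whether data.yaml exists."""
    root = Path(staging_directory)
    summary = {"data_yaml": (root / "data.yaml").is_file()}
    for split in SPLITS:
        image_dir = root / split / "images"
        label_dir = root / split / "labels"
        images = [p for p in image_dir.iterdir() if p.is_file()] if image_dir.is_dir() else []
        labels = list(label_dir.glob("*.txt")) if label_dir.is_dir() else []
        summary[split] = {"images": len(images), "labels": len(labels)}
    return summary


# Build a tiny fake export so the sketch runs without calling the Roboflow API.
with tempfile.TemporaryDirectory() as tmp:
    fake_root = Path(tmp) / "demo-export"
    for split in SPLITS:
        (fake_root / split / "images").mkdir(parents=True)
        (fake_root / split / "labels").mkdir(parents=True)
    (fake_root / "train" / "images" / "bus_001.jpg").write_bytes(b"")
    (fake_root / "train" / "labels" / "bus_001.txt").write_text("0 0.5 0.5 0.2 0.2\n")
    (fake_root / "data.yaml").write_text("names: ['bus']\n")
    print(summarize_export(fake_root))
```

If the image and label counts for a split do not match, that is usually worth a look before the data gets versioned.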

Creating a Comet Data Artifact

Once I have the image data in a directory on my local machine, I create a Comet experiment, load the data.yml file, point Comet to the data directory to access the images, and then upload them to create a data artifact. This will also create a comet_artifact.yaml. This .yaml is also in version control, and if you want to see it, it’s here.

"""

Download an exported dataset from Roboflow, and create a Comet data artifact with the files.

"""

from roboflow import Roboflow

from glob import glob

from comet_ml import Artifact, Experiment

import yaml

import os

import shutil

# These are all the "configuration parameters" to set before running this script

roboflow_workspace = "kristen-kehrer"

roboflow_project = "schoolbus-images"

roboflow_version = 4

staging_directory = f"Schoolbus-Images-{roboflow_version}"

roboflow_api_key_file = '.roboflow_api_key'

def create_artifact():

# Delete the staging directory if it already exists

if os.path.exists(staging_directory):

shutil.rmtree(staging_directory)

if not os.path.exists(roboflow_api_key_file):

raise f'Missing configuration file: {roboflow_api_key_file}'

# Load api key from saved credential file and strip whitespace characters from the ends

roboflow_api_key = open(roboflow_api_key_file).read().strip()

# With the Roboflow python SDK, download the desired dataset.

# After `.download("yolov5")` completes, the full data artifact will

# be on the local filesystem at `staging_directory`

rf = Roboflow(api_key=roboflow_api_key)

project = rf.workspace(roboflow_workspace).project(roboflow_project)

dataset = project.version(roboflow_version).download("yolov5")

# Start a comet experiment (project name / api key are already set in `.comet.config`)

experiment = Experiment()

# Load the `data.yaml` file (this is how data needs to be formatted for YOLOv5) from the Roboflow artifact

metadata = yaml.load(open(os.path.join(staging_directory, 'data.yaml')), Loader=yaml.Loader)

# Convert the `names` field in the metadata from a list of strings to an integer-keyed dictionary

# of strings (`['bus', 'car']` needs to be `{ '0': 'bus', '1': 'car'}` for the comet metadata

# I created a PR to add this to the yolov5 library, I'll remove this once it's merged.

names_dict = {}

for i in range(len(metadata['names'])):

names_dict[str(i)] = metadata['names'][i]

metadata['names'] = names_dict

# Create the comet Artifact object

artifact = Artifact(name="schoolbus-yolov5-take2", artifact_type="dataset", metadata=metadata)

# Iterate through all files in `staging_directory`, and add the file to the artifact

for file in glob(f'{staging_directory}/**/*.*', recursive=True):

artifact.add(file, logical_path=file.replace('', '/'))

# Log the artifact and end the experiment

experiment.log_artifact(artifact)

experiment.end()

def write_yolov5_data_file():

with open('comet_artifact.yaml', 'w') as file:

file.write(f'path: "comet://kristenkehrer/schoolbus-yolov5-take2:latest"n')

# When this script is run, run these two functions:

create_artifact()

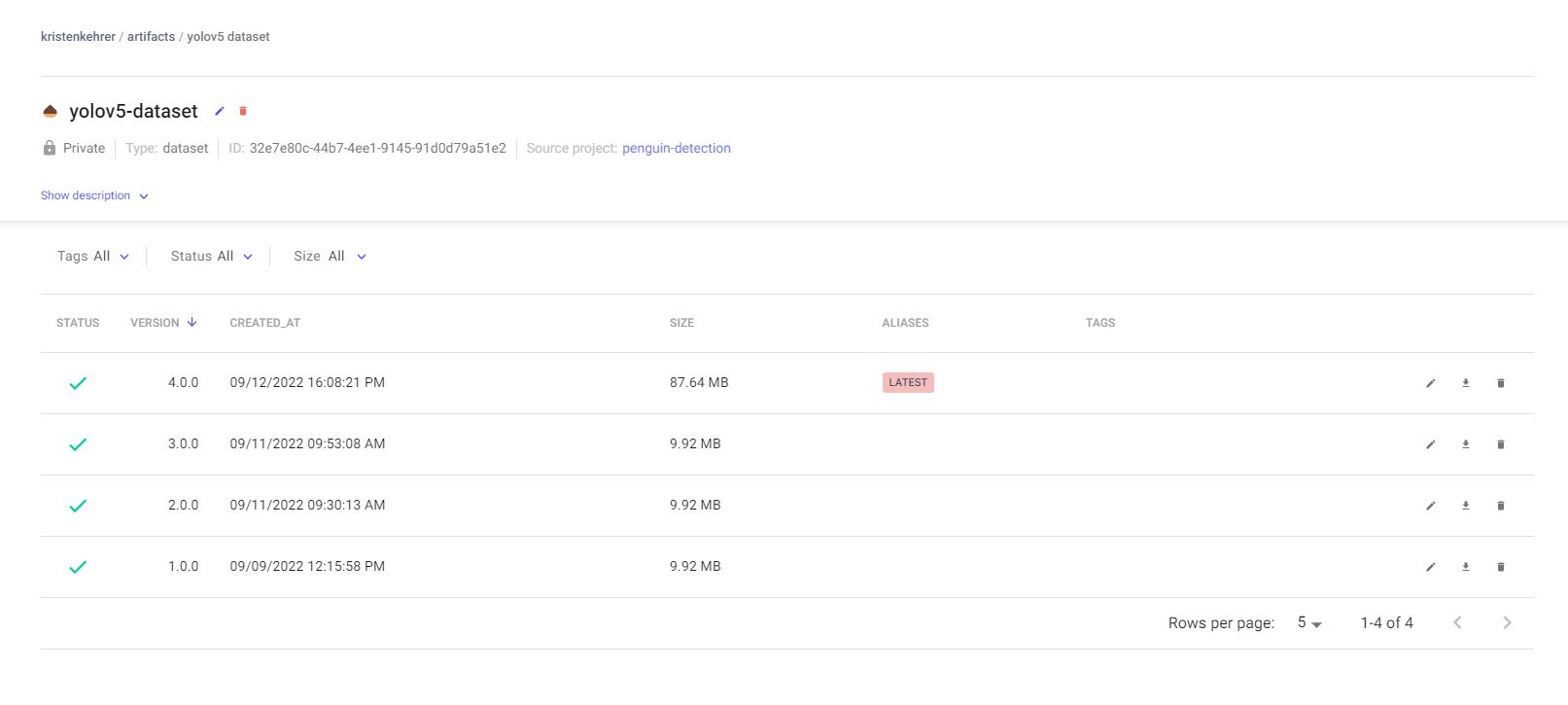

write_yolov5_data_file()The image below shows the data versions for part of the project. I actually ended up creating a new artifact when I was getting ready to make the project public, but I figured that the older image below better demonstrated what I’m talking about.

The last artifact I uploaded is always automatically tagged by Comet with “latest”, so that I can always use the most recent artifact when I run training. In this view you can also see the earlier versions of the artifact I created while building the project. You can see that I have multiple versions of the data, so that if I need to go back I can, but I’m also always able to identify which data source is my latest.
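The “latest” alias is what the `path` entry in comet_artifact.yaml points at. As a small sketch of how that path is put together, and of how you might pin a specific version instead, here is a helper that builds the `comet://<workspace>/<artifact name>:<version or alias>` string. The function names are hypothetical, and the `1.0.0` version is only an illustrative value, not one taken from my project.

```python
def comet_artifact_path(workspace, artifact_name, version_or_alias="latest"):
    """Build the comet:// path that the YOLOv5 data file points at."""
    return f"comet://{workspace}/{artifact_name}:{version_or_alias}"


def data_file_contents(workspace, artifact_name, version_or_alias="latest"):
    """Return the single line written to comet_artifact.yaml."""
    path = comet_artifact_path(workspace, artifact_name, version_or_alias)
    return f'path: "{path}"\n'


# Always follow the most recently uploaded artifact
print(data_file_contents("kristenkehrer", "schoolbus-yolov5-take2"), end="")

# Pin training to one version (hypothetical version number) for reproducibility
print(data_file_contents("kristenkehrer", "schoolbus-yolov5-take2", "1.0.0"), end="")
```

Following “latest” is convenient while iterating. Pinning a version is the safer choice when you want to rerun an old experiment on exactly the data it saw.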

Having data in unorganized files on my computer was a bit of a pain point after putting this project down for a month or two and then trying to pick it back up.

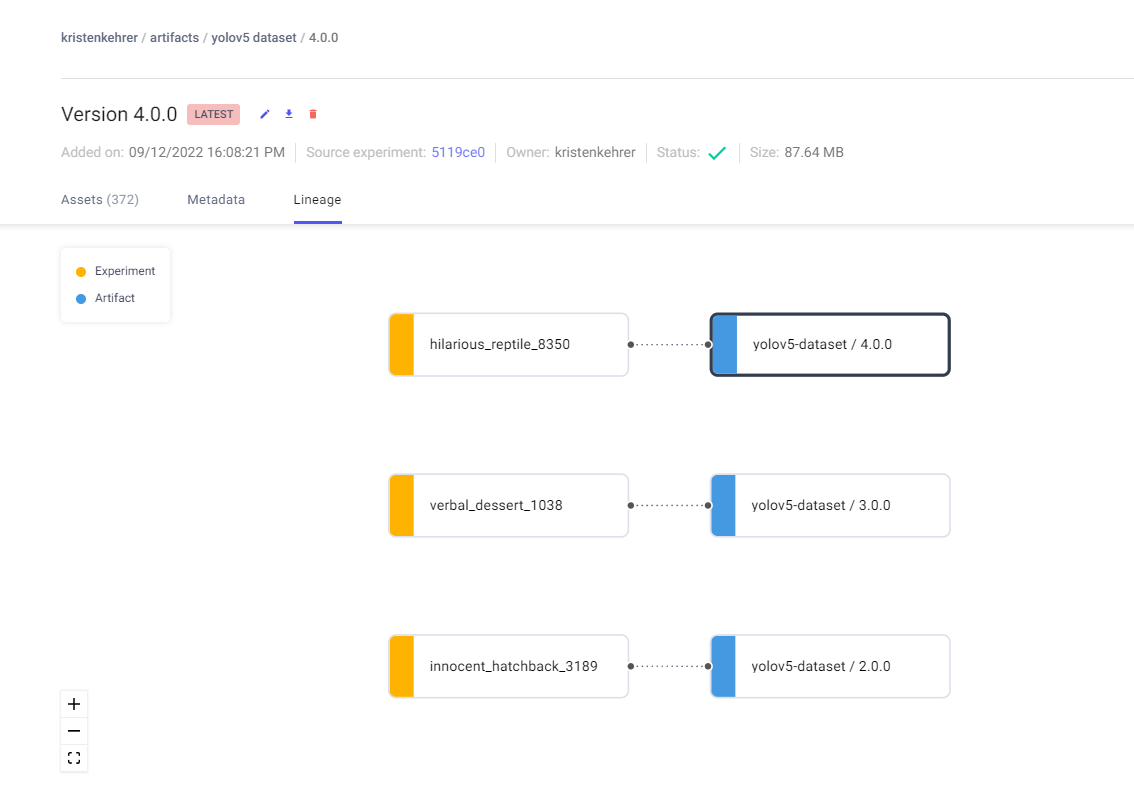

Below you can see that I’m able to see which model was trained on what data. “hilarious_reptile” is just the name of one of my model runs.

Now I have my data all set and I’m ready to start in with my model.

Preparing to Train Our YOLOv5 Model for Bus Detection

Now that we have our data, it’s time to think about compute. I chose to run this model on my own GPUs. To make sure I could access them, I followed the PyTorch (used by YOLOv5) “Get Started Locally” documentation. This provides the specific pip instructions for installing PyTorch, which I then added to my requirements.txt file.
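A quick way to confirm that the install can actually see the GPUs is to ask PyTorch directly. This is a minimal sketch rather than part of the project repo; `describe_gpus` is a hypothetical helper name, and it simply returns an empty list if PyTorch is not installed or no CUDA device is visible.

```python
def describe_gpus():
    """Return the names of the CUDA devices PyTorch can see (empty list if none)."""
    try:
        import torch
    except ImportError:
        # PyTorch is not installed in this environment
        return []
    if not torch.cuda.is_available():
        return []
    return [torch.cuda.get_device_name(i) for i in range(torch.cuda.device_count())]


gpus = describe_gpus()
print(gpus if gpus else "No CUDA GPU visible to PyTorch")
```

If this prints the fallback message on a machine that does have a GPU, the usual culprit is a CPU-only PyTorch build, which is what the “Get Started Locally” install command is meant to avoid.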

Training Our YOLOv5 Model

Now we’re set up to train our YOLOv5 model. For training, I followed the “Train Custom Data” instructions from the yolov5 project.

I created a simple script, train.ps1, which calls the train.py script from the yolov5 repository. I pass it the path to my comet_artifact.yaml, and the artifact it points to is downloaded and used as the training data source, thanks to the new YOLOv5 Comet integration.

The workbook where my model runs are compared and tracked is here. I’m not going to claim to be a computer vision expert. I used the weights that were provided and played a bit with the defaults, but there was no magic taking place here. Once I started training on a set of over a thousand images, all of the training runs did quite well.
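For readers who are not on PowerShell, the same call can be sketched in Python. The values below are assumptions for illustration, not the settings from my train.ps1: the `yolov5/train.py` location, the `yolov5s.pt` starting weights, and the image size, batch size, and epoch count are all placeholders you would adjust.

```python
import sys


def build_train_command(
    data_file="comet_artifact.yaml",   # the data file written by the artifact script
    weights="yolov5s.pt",              # assumed pretrained checkpoint
    img_size=640,                      # assumed value
    batch_size=16,                     # assumed value
    epochs=100,                        # assumed value
    train_script="yolov5/train.py",    # assumed location of the cloned yolov5 repo
):
    """Build the argument list for a YOLOv5 training run."""
    return [
        sys.executable, train_script,
        "--img", str(img_size),
        "--batch-size", str(batch_size),
        "--epochs", str(epochs),
        "--data", data_file,
        "--weights", weights,
    ]


command = build_train_command()
print(" ".join(command))
# To actually start training, pass the list to subprocess.run(command, check=True)
```

Building the command as a list keeps each argument separate, so paths with spaces do not need extra quoting.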

Summary

Alrighty, at this point you’ve potentially kicked off a training run, and you’re waiting for your model to train. In this article we used Roboflow to annotate our images and the Roboflow Python SDK to move our annotated image data from Roboflow to a staging directory and then uploaded the image data as an artifact into Comet. Then we used our data to train a model.

In the next article, we’re going to detect the bus live and set up the alerts using AWS. Super exciting!

Cite this Post

Use the following entry to cite this post in your research:

Trevor Lynn. (Oct 27, 2022). School Bus Detection Using YOLOv5 (Tutorial – Part 2). Roboflow Blog: https://blog.roboflow.com/school-bus-detection-yolov5/