What is CVAT?

CVAT is a web-based, open-source image annotation tool originally developed by Intel and now maintained by OpenCV. CVAT provides annotation features for object detection, classification, tracking, and segmentation tasks.

In this post, we focus on using CVAT to create object detection annotations on images, although it has many more capabilities, including video annotation, semantic segmentation, and polygon annotation.

For a detailed guide to every element in the interface, refer to CVAT's documentation.

CVAT is one of several similar DIY labeling tools, a group that also includes LabelImg.

We recommend labeling a batch of images yourself (50+) and training a state-of-the-art model like YOLOv4 to see if your computer vision task is already solved with current technologies.

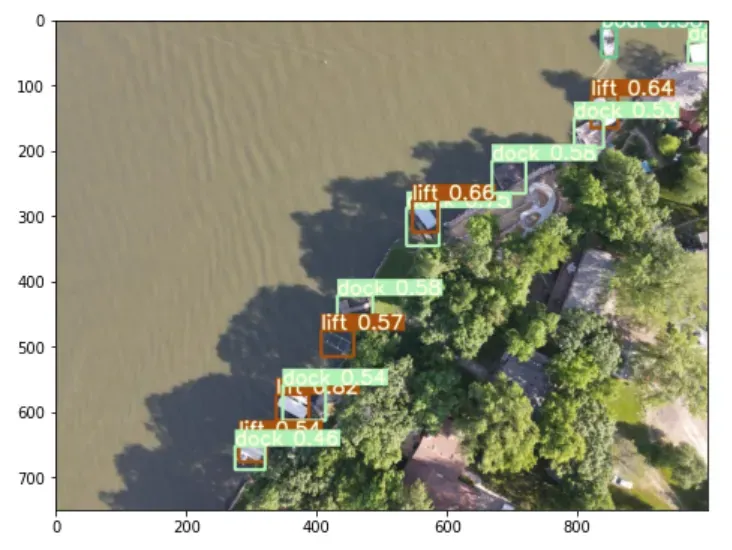

We will show the steps used to annotate the public aerial maritime object detection dataset, which was captured from a drone. Although a specific dataset is used, this post is meant to be a general guide on how to label an object detection dataset and how to use labeling tools for object detection. Feel free to use another similar aerial imagery dataset.

CVAT labeled image for computer vision

How to Annotate Images with CVAT

If this is your first time using CVAT, start by opening the CVAT website, which is the quickest way to start labeling your data.

Once you are on the CVAT website, you will see a page like this:

Launch New CVAT Task

From there, you can launch a new task in CVAT and drag your images in for labeling. You are prompted to specify the class labels of the objects that you would like to detect. Carefully specify these because you'll want to ensure you have all the needed classes before you start labeling.
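Because the class list is worth getting right before you start, it can help to prepare it in a script. The sketch below builds a JSON label list and rejects empty or duplicate names. It assumes the "Raw" tab of CVAT's label editor accepts a JSON list of objects with `name` and `attributes` keys; the exact schema may differ between CVAT versions, so check it against your instance. The class names are hypothetical.

```python
import json


def build_raw_labels(class_names):
    """Return a JSON string describing labels, one object per class.

    Assumption: CVAT's raw label editor accepts a list of
    {"name": ..., "attributes": [...]} objects.
    """
    seen = set()
    labels = []
    for name in class_names:
        cleaned = name.strip()
        if not cleaned:
            raise ValueError("class names must not be empty")
        key = cleaned.lower()
        if key in seen:
            raise ValueError(f"duplicate class name: {cleaned}")
        seen.add(key)
        labels.append({"name": cleaned, "attributes": []})
    return json.dumps(labels, indent=2)


# Hypothetical class names for an aerial maritime dataset.
print(build_raw_labels(["boat", "dock", "jetski"]))
```

Catching a duplicate such as "Boat" and "boat" at this stage is cheaper than merging two classes after labeling.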

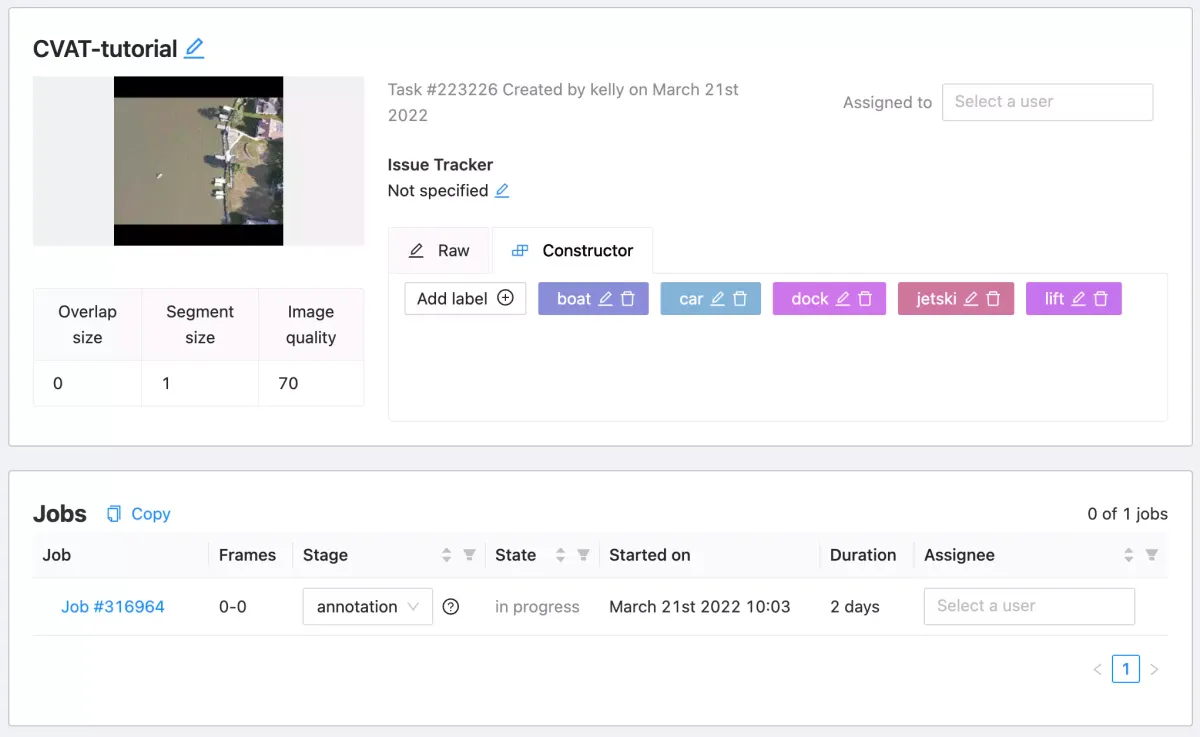

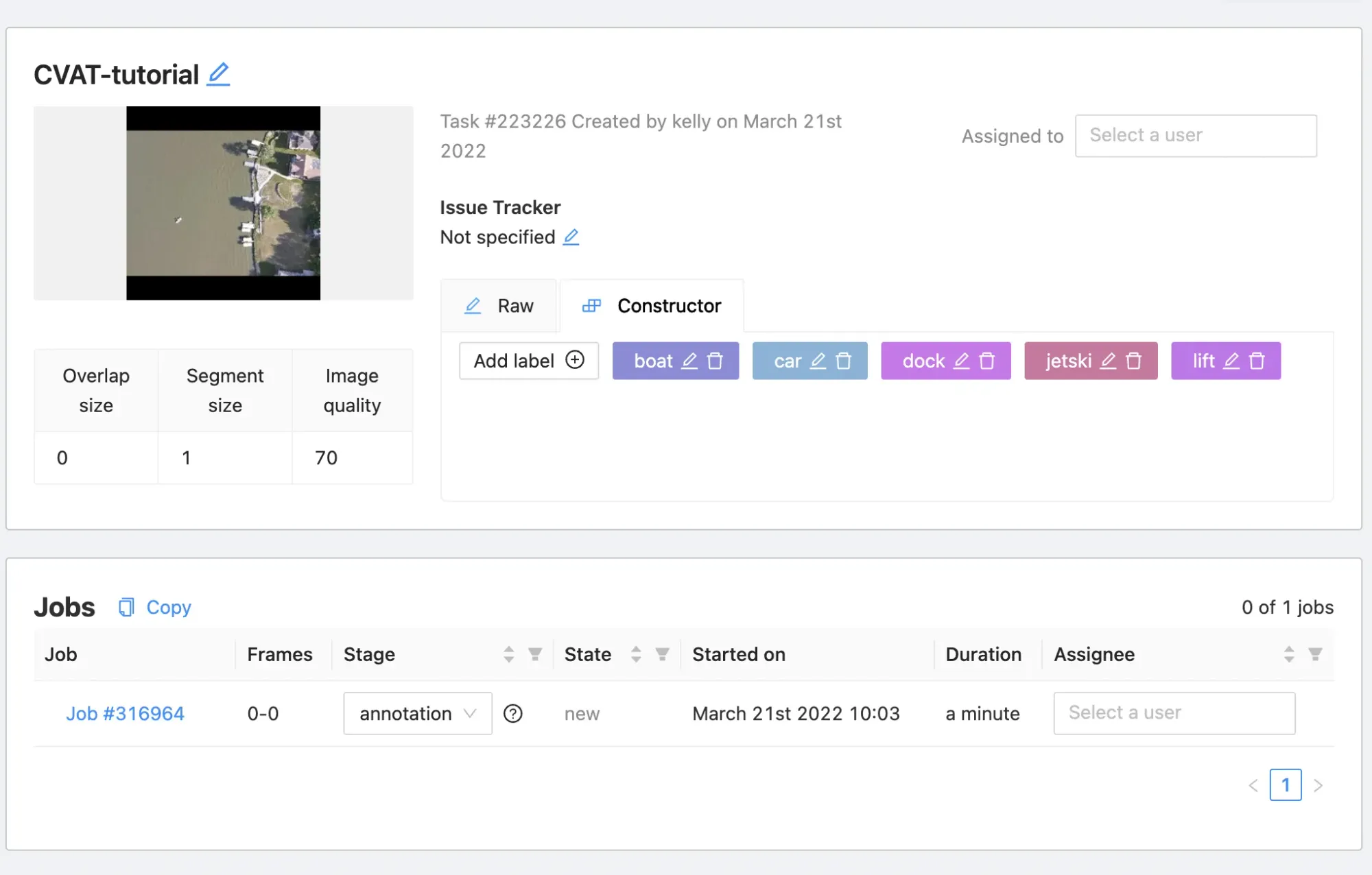

Once your data is uploaded, navigate back to tasks. From there, you will see a task page.

Enter CVAT Labeling Job

A labeling job is set up automatically when you create the task, and you can create additional jobs to annotate this dataset. Note the task/job hierarchy: jobs live inside a task.

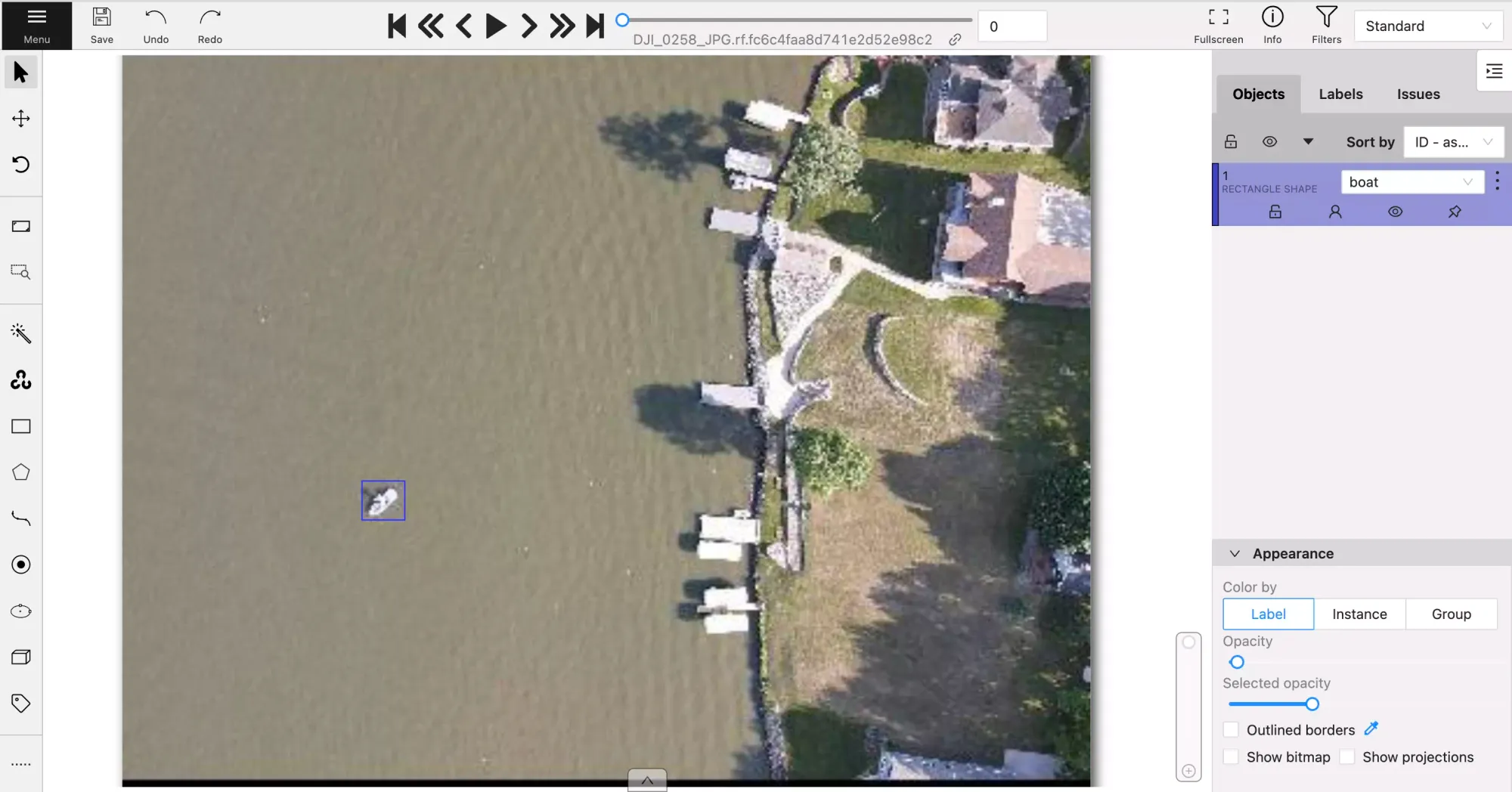

Now you can click into your labeling task and get to work. When you're in the labeling screen you will see the following.

Drawing Annotations in CVAT

CVAT offers multiple types of shapes for annotation: rectangle (bounding box), polygon, polyline, points, ellipse, cuboid, and tag. Below are examples of how those tools can be used.

You can click "Create Shape" and draw a box around the object you want your detector to detect.

Labeling in CVAT with a rectangle

Bounding boxes may work for your project, but if you need a tighter outline of your objects for better performance, you can use the polygon tool. The polyline annotation tool works in a similar way. Below is a video of the polygon option.

Labeling in CVAT with the polygon tool

In addition to manual labeling tools, CVAT offers model-assisted labeling, which allows you to use artificial intelligence to automate labeling work.

You can use your Roboflow models in CVAT to speed up annotation. With this setup, your Roboflow model (or any model on Roboflow Universe) automatically annotates new images, and you can adjust or remove annotations as necessary. Check out our Roboflow CVAT Integration guide for a deep dive into using Roboflow models with CVAT.

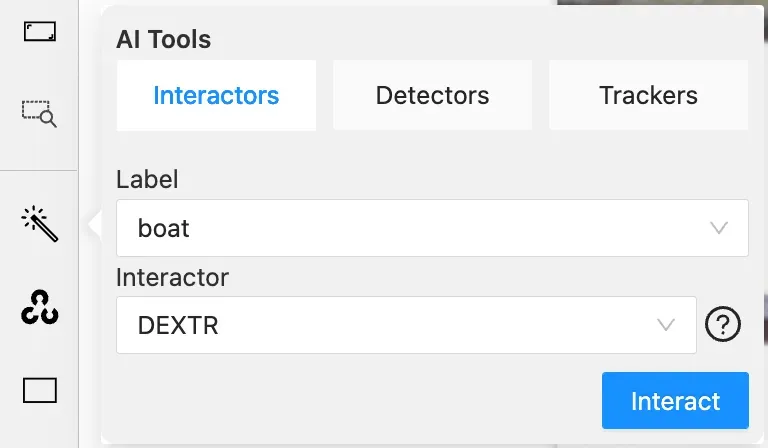

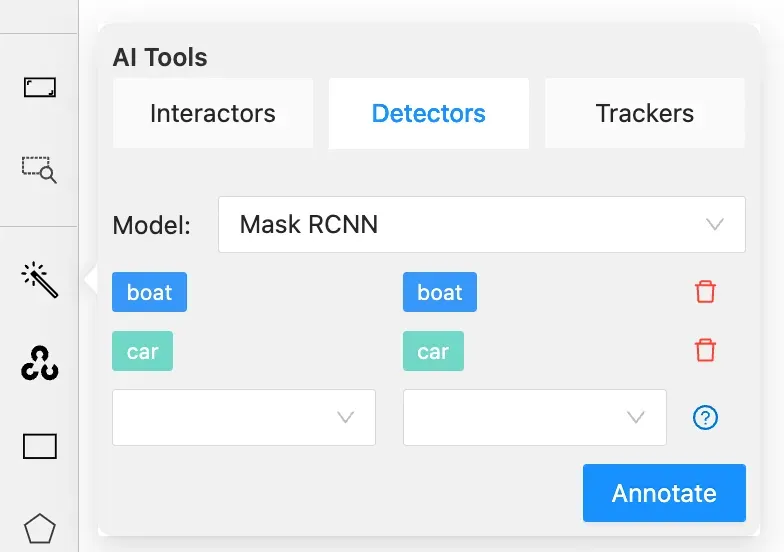

In the AI Tools section, you'll find Interactors and Detectors which help create polygons.

Interactors include Deep Extreme Cut (DEXTR), Feature Backpropagating Refinement Scheme (f-BRS), High Resolution Net (HRNet), and Inside-Outside-Guidance.

Detectors include Mask RCNN and Faster RCNN, which are deep learning models that can predict a specific set of labels. If your dataset includes objects that these models support, you'll be able to label those objects very quickly because the labels are applied automatically.

CVAT's variety of labeling options gives you different ways to annotate your data for a given project's purpose. When using CVAT, it's important to define your classes and labeling method before starting the project so that you do not need to spend time updating annotations.

Exporting Annotations From CVAT

First, click "Save". CVAT does not automatically save work.

Then click "Menu". In CVAT, you will see the following options:

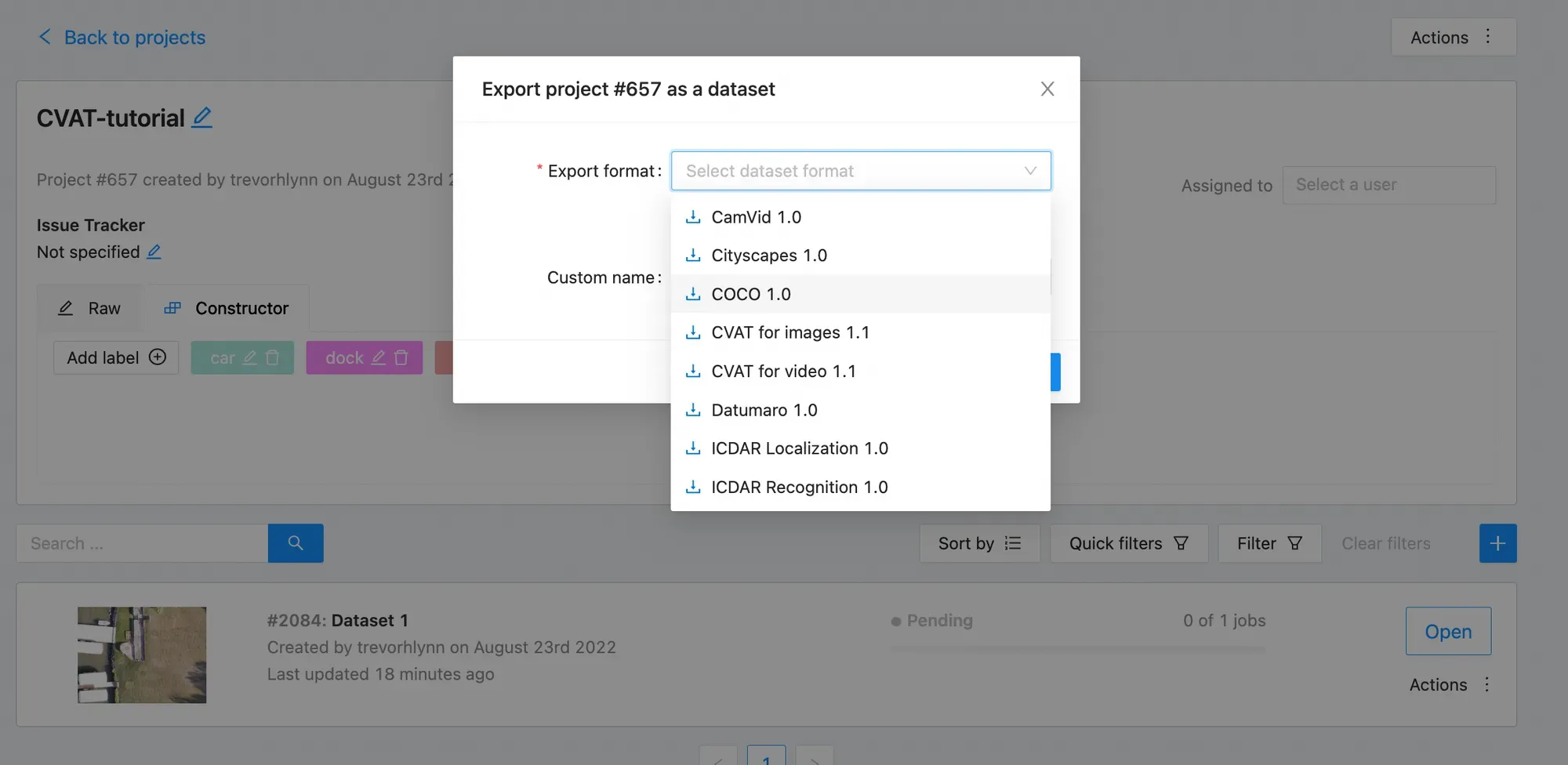

Then click "Export task dataset" and choose among the available formats: VOC XML, COCO JSON, YOLO annotations, etc. Before exporting, be sure you know the format needed to train your model of choice. In the event you need to convert your annotations to a format CVAT does not support, Roboflow offers free dataset conversions to 26+ formats.
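To see why the export format matters, here is a minimal sketch of how the same bounding box is written in the three formats named above. It relies on the commonly used conventions (VOC stores pixel corners, COCO stores the top-left corner plus width and height in pixels, YOLO stores a normalized center plus width and height). The box and the image size are hypothetical, and the function names are ours, not part of CVAT.

```python
def voc_to_coco(xmin, ymin, xmax, ymax):
    """VOC corners -> COCO [x_min, y_min, width, height], in pixels."""
    return [xmin, ymin, xmax - xmin, ymax - ymin]


def voc_to_yolo(xmin, ymin, xmax, ymax, img_w, img_h):
    """VOC corners -> YOLO [x_center, y_center, width, height], normalized to 0..1."""
    x_center = (xmin + xmax) / 2 / img_w
    y_center = (ymin + ymax) / 2 / img_h
    width = (xmax - xmin) / img_w
    height = (ymax - ymin) / img_h
    return [x_center, y_center, width, height]


# Hypothetical box on a hypothetical 800x600 image.
box = (100, 150, 300, 450)
print(voc_to_coco(*box))            # [100, 150, 200, 300]
print(voc_to_yolo(*box, 800, 600))  # [0.25, 0.5, 0.25, 0.5]
```

The numbers differ in every format even though the box is the same, which is why a model expecting one format will train poorly or fail outright when fed another.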

Congrats! Now you have a labeled dataset.

Install CVAT on Your Local Machine

If you are serious about CVAT, you can set it up locally. The CVAT website has these limitations:

- No more than 10 tasks per user

- Uploaded data is limited to 500 MB

Running locally, you will not be subject to these limitations because your machine will be doing the heavy lifting.

To launch CVAT locally, first clone the CVAT repository in your terminal window.
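If you are unsure whether your dataset fits under the hosted upload limit, a quick size check can tell you before you start uploading. This is a minimal sketch: it treats the 500 MB limit as 500 * 1024 * 1024 bytes, which is an assumption, and the list of image extensions is ours.

```python
from pathlib import Path

# Assumption: the 500 MB limit is interpreted as 500 * 1024 * 1024 bytes.
MAX_UPLOAD_BYTES = 500 * 1024 * 1024

# Assumption: these are the image types in your dataset folder.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp", ".tif", ".tiff"}


def total_image_bytes(folder):
    """Sum the sizes of all image files under `folder`, including subfolders."""
    return sum(
        path.stat().st_size
        for path in Path(folder).rglob("*")
        if path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES
    )


def fits_upload_limit(folder, limit=MAX_UPLOAD_BYTES):
    """Return True if the images under `folder` fit within `limit` bytes."""
    return total_image_bytes(folder) <= limit
```

For example, `fits_upload_limit("path/to/images")` returns `False` for a folder that is too large, in which case a local install or splitting the data across tasks are the options to consider.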

git clone https://github.com/opencv/cvat.git

cd cvat

Then, if you don't have Docker, install Docker. Check that Docker is successfully installed:

docker version

Now we build CVAT locally and launch it with the following commands:

docker-compose build

docker-compose up -d

This will take a while to run because it builds CVAT's dependencies on your local machine. Then create your user account within your local CVAT service by executing a command inside its container:

docker exec -it cvat bash -ic 'python3 ~/manage.py createsuperuser'

Now, open your browser and go to

http://localhost:8080/

This will take you to your local CVAT!

You can come back later and restart the service. If you are having trouble logging into CVAT, you can rebuild with no-cache:

docker-compose build --no-cache

docker-compose up -d

CVAT Labeling Tips, Tricks, Best Practices

When you're working in CVAT, annotate objects carefully with your downstream model in mind. Follow these labeling best practices as you work through your dataset:

1) Label entirely around the object

2) For occluded objects - label them entirely

3) Generally label objects that are partially out of frame

4) Beware of labeling many boxes that overlap or are entirely contained within each other. This can really confuse your model.
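The fourth point can be checked automatically after a labeling session. The sketch below flags pairs of boxes on one image where one box sits entirely inside another or where the two overlap heavily, using intersection over union (IoU). Boxes are assumed to be `(xmin, ymin, xmax, ymax)` tuples in pixels, and the 0.5 threshold is an arbitrary starting point, not a CVAT setting.

```python
from itertools import combinations


def area(box):
    """Area of an (xmin, ymin, xmax, ymax) box; zero if the box is degenerate."""
    xmin, ymin, xmax, ymax = box
    return max(0, xmax - xmin) * max(0, ymax - ymin)


def iou(a, b):
    """Intersection over union of two boxes."""
    inter_box = (max(a[0], b[0]), max(a[1], b[1]), min(a[2], b[2]), min(a[3], b[3]))
    inter = area(inter_box)
    union = area(a) + area(b) - inter
    return inter / union if union > 0 else 0.0


def is_contained(inner, outer):
    """True if `inner` lies entirely within `outer`."""
    return (
        inner[0] >= outer[0]
        and inner[1] >= outer[1]
        and inner[2] <= outer[2]
        and inner[3] <= outer[3]
    )


def flag_suspicious_pairs(boxes, iou_threshold=0.5):
    """Return (i, j, reason) for each pair of boxes worth a second look."""
    flagged = []
    for (i, a), (j, b) in combinations(enumerate(boxes), 2):
        if is_contained(a, b) or is_contained(b, a):
            flagged.append((i, j, "contained"))
        elif iou(a, b) >= iou_threshold:
            flagged.append((i, j, "high overlap"))
    return flagged


# Hypothetical boxes from a single image.
boxes = [(0, 0, 100, 100), (10, 10, 50, 50), (10, 0, 110, 100), (200, 200, 300, 300)]
print(flag_suspicious_pairs(boxes))
```

A flagged pair is not necessarily a mistake (a person on a boat is a legitimate nested box), so treat the output as a review list rather than a list of errors.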

CVAT Tips

- Start your labels list with the most represented class. It will be the default when you draw a box

- Label all objects in each class first - you can focus on them and change all of their labels at once

- Press "N" to draw a new box
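For the first tip, you may not know up front which class is most common. One rough approach is to tally the classes in a small sample of images and order the label list from that tally. The sketch below assumes you have such a sample as a plain list of class names; the names are hypothetical.

```python
from collections import Counter


def order_labels_by_frequency(sample_annotations):
    """Return class names ordered from most to least frequent in the sample."""
    counts = Counter(sample_annotations)
    return [name for name, _ in counts.most_common()]


# Hypothetical tally from a handful of images.
sample = ["boat", "dock", "boat", "jetski", "boat", "dock"]
print(order_labels_by_frequency(sample))  # ['boat', 'dock', 'jetski']
```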

CVAT Alternatives

CVAT is just one of many computer vision labeling tools. If you're wondering if it's right for you, you may want to read our Ultimate Guide to Object Detection or try Roboflow Annotate, which is designed to smooth out many of the rough edges that open source tools like CVAT have.

Common CVAT alternatives include:

- LabelImg: LabelImg is a free image annotation tool written in the Python programming language.

- LabelMe: LabelMe was created by MIT as a free-to-use annotation tool for computer vision tasks.

Looking to Get Started with Annotating Data?

Roboflow provides easy annotation with smart auto-suggested defaults. It's no surprise users annotate faster with Roboflow.

Next Steps After Labeling Your Computer Vision Dataset in CVAT

Once your dataset is labeled in CVAT, it is time to move to the creation of your computer vision model!

Roboflow makes it easy to load in your data (just drag and drop your images and your annotation file from CVAT). You can generate even more data with augmentations such as flipping images, random cropping, and creating synthetic data. If you are interested in using data augmentations to increase the number of your training images (and spend less time in CVAT), this is a good guide on using data augmentation in computer vision.

When you are ready, use Roboflow Train to train a model with one click and quickly test your model using our web app or your webcam. Alternatively, you can export your data from Roboflow to any format and start training your computer vision model.

Our posts on How to Train YOLOv4 and How to Train EfficientDet are good starting points for training your model. From model evaluation, you can then gauge how much more data you may need to collect and annotate.
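One detail worth knowing about augmentation for object detection is that the annotations have to be transformed along with the image. As a minimal sketch, here is how a bounding box moves under a horizontal flip, assuming boxes are `(xmin, ymin, xmax, ymax)` in pixels. The function name and values are hypothetical, and this is not Roboflow's implementation.

```python
def hflip_box(box, img_w):
    """Mirror an (xmin, ymin, xmax, ymax) box across the vertical center line."""
    xmin, ymin, xmax, ymax = box
    # The old right edge becomes the new left edge, and vice versa.
    return (img_w - xmax, ymin, img_w - xmin, ymax)


# Hypothetical box on a hypothetical 800-pixel-wide image.
print(hflip_box((100, 150, 300, 450), 800))  # (500, 150, 700, 450)
```

Flipping the image without flipping the boxes would leave the labels pointing at empty water, which is why augmentation tools handle both together.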

Frequently Asked Questions

What is CVAT used for?

CVAT is used for annotating images for object detection, classification, and segmentation tasks. You can annotate images manually or use model-assisted labeling, where a model recommends annotations to add to an image.

What are the advantages of CVAT?

CVAT provides a robust web interface through which you can annotate images. Because the interface is web-based, you can use CVAT without installing software. CVAT offers a range of annotation tools, such as ellipse, bounding box, polygon, and model-assisted labeling.

Cite this Post

Use the following entry to cite this post in your research:

Jacob Solawetz. (Mar 21, 2022). What is CVAT (Computer Vision Annotation Tool)?. Roboflow Blog: https://blog.roboflow.com/cvat/