An oil spill in the sea is a devastating environmental incident that occurs when oil is released into the marine environment. Oil spills pose significant threats to marine ecosystems, wildlife, and coastal communities, and they can have long-lasting and far-reaching consequences.

Due to the logistical difficulties in getting to impacted areas, cleaning up an oil spill is an expensive endeavor. Before sending out a clean-up crew, it is essential to precisely measure the spill's characteristics, such as the volume, thickness, and extent, in order to maximize resources and save costs. This is where computer vision becomes an essential tool.

This post will show you how to label data to train an instance segmentation model for remotely understanding and evaluating an oil spill. Let's get started!

How Computer Vision Helps Clean Oil Spills

High-resolution images or videos of an oil spill, captured from an aerial perspective, can be analyzed using computer vision models to measure the spill accurately. This information helps experts determine the most suitable and efficient cleaning method for the specific spill.

For example, if the spill is relatively thin and widespread, mechanical containment and recovery techniques, such as deploying booms and skimmers, may be sufficient. On the other hand, if the spill is thicker or dispersed over a larger area, burning or the application of chemical dispersants might be more appropriate.

By understanding the spill's characteristics through computer vision analysis, the response team can opt for the most cost-effective and environmentally friendly cleaning approach.
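As a rough illustration of how a segmentation result can become a measurement, the sketch below computes the surface area of one predicted spill polygon with the shoelace formula and converts it from pixels to square meters. The function names, the sample polygon, and the ground sampling distance (meters per pixel, which depends on the drone's altitude and camera) are assumptions for illustration and are not part of the original project.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def polygon_area_px(points: List[Point]) -> float:
    """Area of a simple (non self-intersecting) polygon in square pixels."""
    if len(points) < 3:
        return 0.0
    total = 0.0
    for i, (x1, y1) in enumerate(points):
        # Pair each vertex with the next one, wrapping around at the end
        x2, y2 = points[(i + 1) % len(points)]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def polygon_area_m2(points: List[Point], gsd_m_per_px: float) -> float:
    """Convert pixel area to square meters using an assumed ground sampling distance."""
    return polygon_area_px(points) * gsd_m_per_px ** 2


# Hypothetical 200 x 100 pixel rectangular patch imaged at 0.5 m per pixel
patch = [(0, 0), (200, 0), (200, 100), (0, 100)]
area = polygon_area_m2(patch, gsd_m_per_px=0.5)
print(f"{area:.0f} m^2")  # prints "5000 m^2"
```

In practice the ground sampling distance varies across an oblique image, so a single value is only a reasonable approximation for near-vertical (nadir) shots.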

Once the cleanup is complete, computer vision can also support post-cleaning assessment and monitoring of the affected area. By comparing pre- and post-cleaning images, experts can evaluate the effectiveness of the cleaning operation, identify any residual contamination, and plan further remediation if necessary.
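A before-and-after comparison could be sketched as follows. This minimal example assumes the two segmentation masks cover the same area at the same resolution (that is, the images are already aligned) and that each mask is a grid of 0s and 1s. The `cleanup_report` helper and the sample masks are hypothetical.

```python
from typing import Dict, List

Mask = List[List[int]]  # 1 = pixel predicted as oil, 0 = clean water


def oil_pixels(mask: Mask) -> int:
    """Count the pixels labeled as oil."""
    return sum(sum(row) for row in mask)


def cleanup_report(before: Mask, after: Mask) -> Dict[str, float]:
    """Summarize how much of the oil-covered area was removed."""
    before_px = oil_pixels(before)
    after_px = oil_pixels(after)
    # Oil found after cleanup where there was none before (possible drift)
    new_px = sum(
        1
        for row_b, row_a in zip(before, after)
        for b, a in zip(row_b, row_a)
        if a == 1 and b == 0
    )
    removed = 0.0 if before_px == 0 else (before_px - after_px) / before_px
    return {
        "before_px": before_px,
        "residual_px": after_px,
        "newly_affected_px": new_px,
        "removed_fraction": removed,
    }


before = [[1, 1, 1, 0],
          [1, 1, 1, 0],
          [0, 0, 0, 0]]
after = [[0, 0, 1, 0],
         [0, 0, 0, 1],
         [0, 0, 0, 0]]
report = cleanup_report(before, after)
print(report)
```

Tracking newly affected pixels separately matters because a slick can drift between flights, so a lower total does not always mean the original area is clean.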

The integration of computer vision into oil spill response processes offers significant benefits in terms of cost savings, efficient resource allocation, and improved decision-making.

Labeling Oil Spill Thickness for Computer Vision

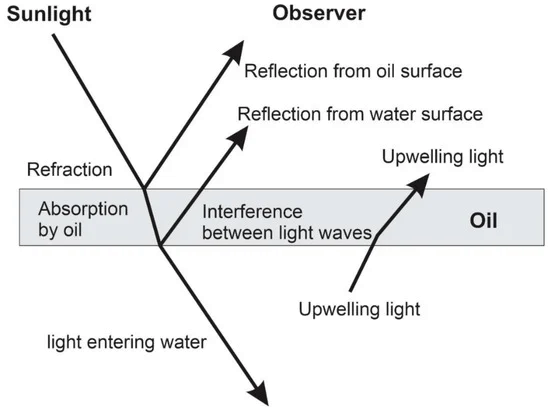

In this project, the aim is to detect the thickness of an oil spill using computer vision. The thickness estimation will be based on the visual appearance of the oil on the water's surface, particularly the color characteristics.

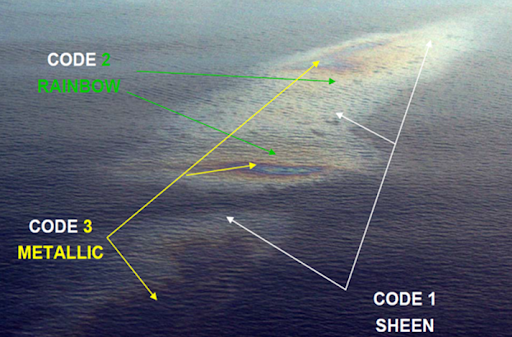

The following image shows the aerial view of the oil spill and its different color codes.

Sample aerial view of Bonn Agreement oil appearance thickness codes (Bayramov, E. et al.)

Oil Spill Data Collection and Labeling

In this project, the thickness of oil is detected using image segmentation, specifically instance segmentation. The following three classes are used to label the dataset and detect oil thickness:

- Rainbow

- Sheen

- True Color
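To show how these labels could feed a volume estimate, the sketch below maps each class to a layer thickness range and multiplies it by a surface area. The ranges are taken from the Bonn Agreement Oil Appearance Code. Treating the single "True Color" class as "at least 50 µm" with no upper bound is an assumption made here, since the code itself splits true color into discontinuous and continuous categories. The function name and the sample area are hypothetical.

```python
from typing import Dict, Optional, Tuple

# Layer thickness in micrometers: (minimum, maximum or None if open-ended)
THICKNESS_UM: Dict[str, Tuple[float, Optional[float]]] = {
    "Sheen": (0.04, 0.30),
    "Rainbow": (0.30, 5.0),
    "True Color": (50.0, None),  # assumption: lower bound only
}


def volume_range_m3(label: str, area_m2: float) -> Tuple[float, Optional[float]]:
    """Return the (low, high) oil volume in cubic meters for a labeled region."""
    low_um, high_um = THICKNESS_UM[label]
    low = area_m2 * low_um * 1e-6  # 1 micrometer = 1e-6 meters
    high = None if high_um is None else area_m2 * high_um * 1e-6
    return low, high


# Hypothetical 10,000 m^2 region labeled "Rainbow"
low, high = volume_range_m3("Rainbow", area_m2=10_000)
print(f"{low * 1000:.0f} to {high * 1000:.0f} liters")  # prints "3 to 50 liters"
```

The wide range is inherent to appearance-based estimation, so the result is better read as an order of magnitude than as a precise figure.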

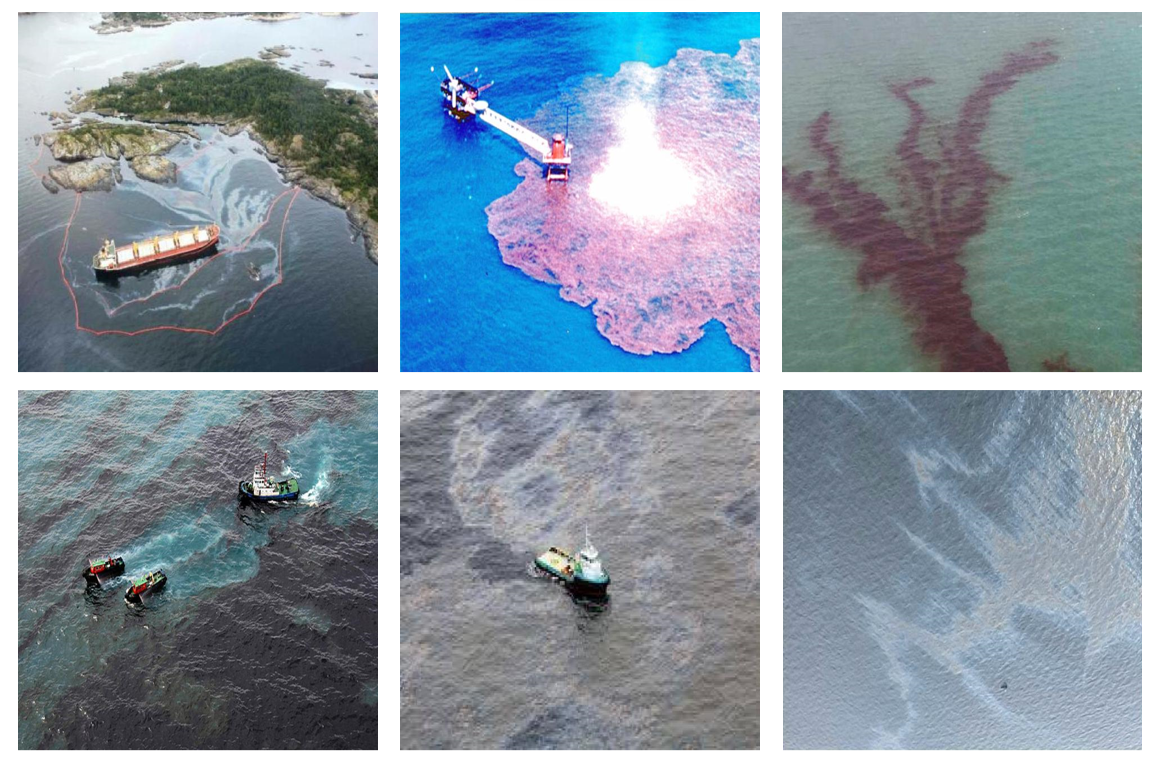

The oil spill dataset for this project is obtained from open source images on Roboflow Universe. The following are the sample images used in the project.

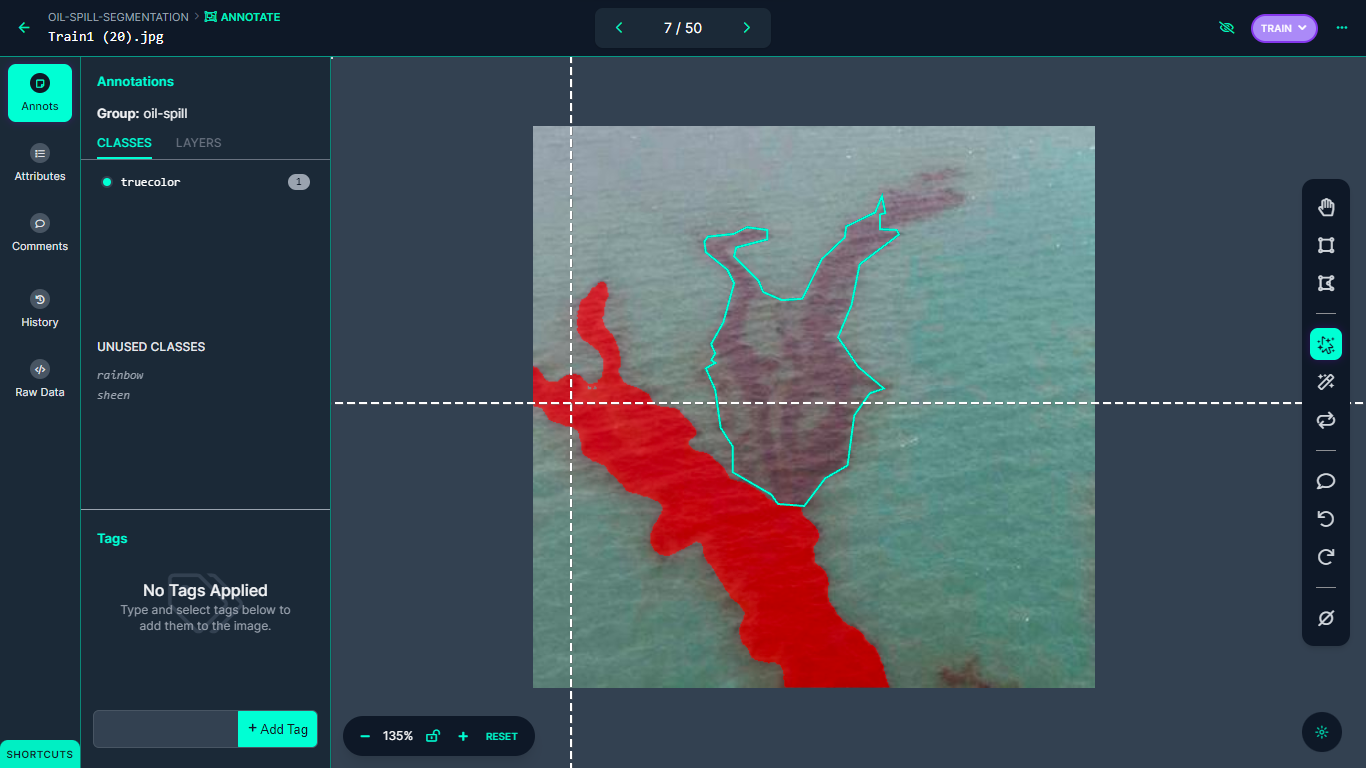

The dataset is then annotated for instance segmentation using Roboflow Annotate. The Smart Polygon tool is used for labeling the images for this project.

After all images are annotated, a dataset version is generated so the model can be trained in the next step.

Oil Spill Model Training and Testing

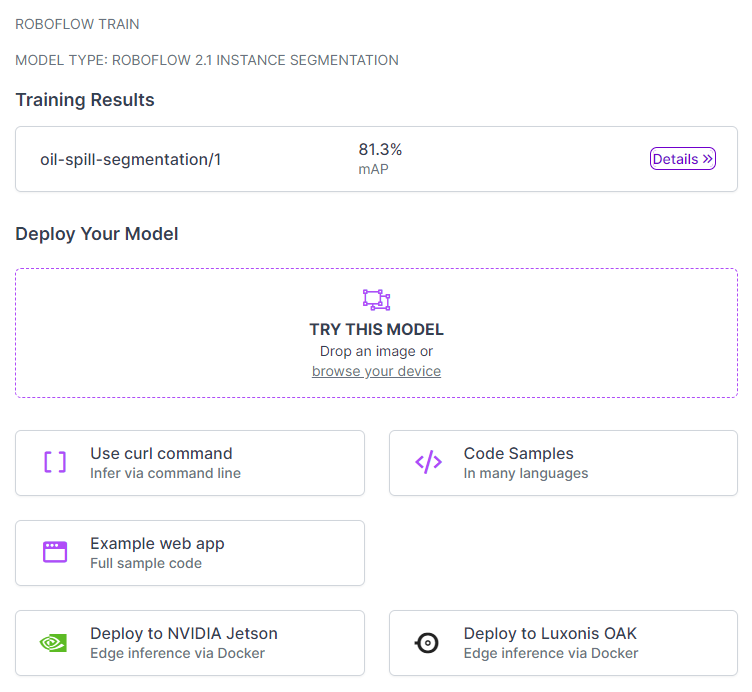

After the dataset is prepared, it is ready for training using Roboflow Train, which not only facilitates the model training process but also optimizes it for seamless deployment.

In this project, the focus is on training the model for instance segmentation, enabling accurate detection and delineation of individual objects within the images.

The model, as illustrated in the following figure, achieved a mean average precision (mAP) of 81.3%. This score indicates that the model detects and segments the classes it was trained on with good accuracy.

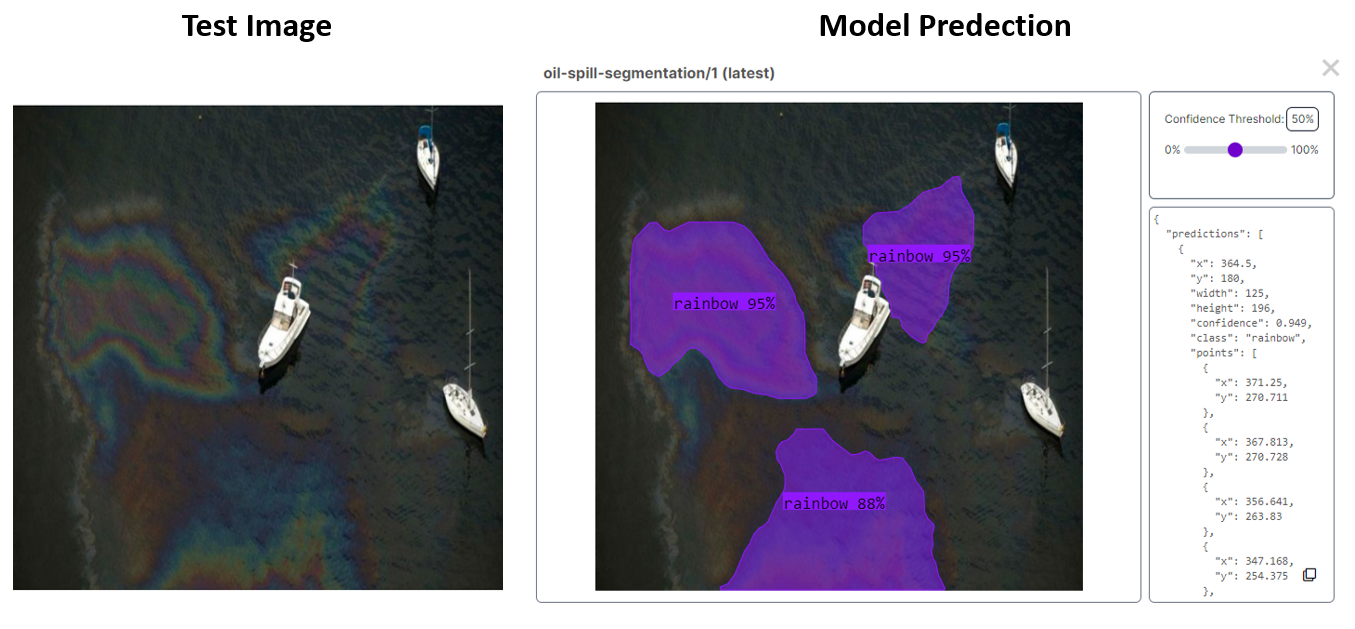

During the testing phase on Roboflow, the model showed promising results, as seen in the following figure. Notably, the model correctly identified an oil spill region as 'Rainbow', which suggests that it can detect and classify different oil appearance classes from images.

Now that we have a working model, it's time to use the model to build an application for evaluating and monitoring oil spills.

Oil Spill Monitoring System Overview

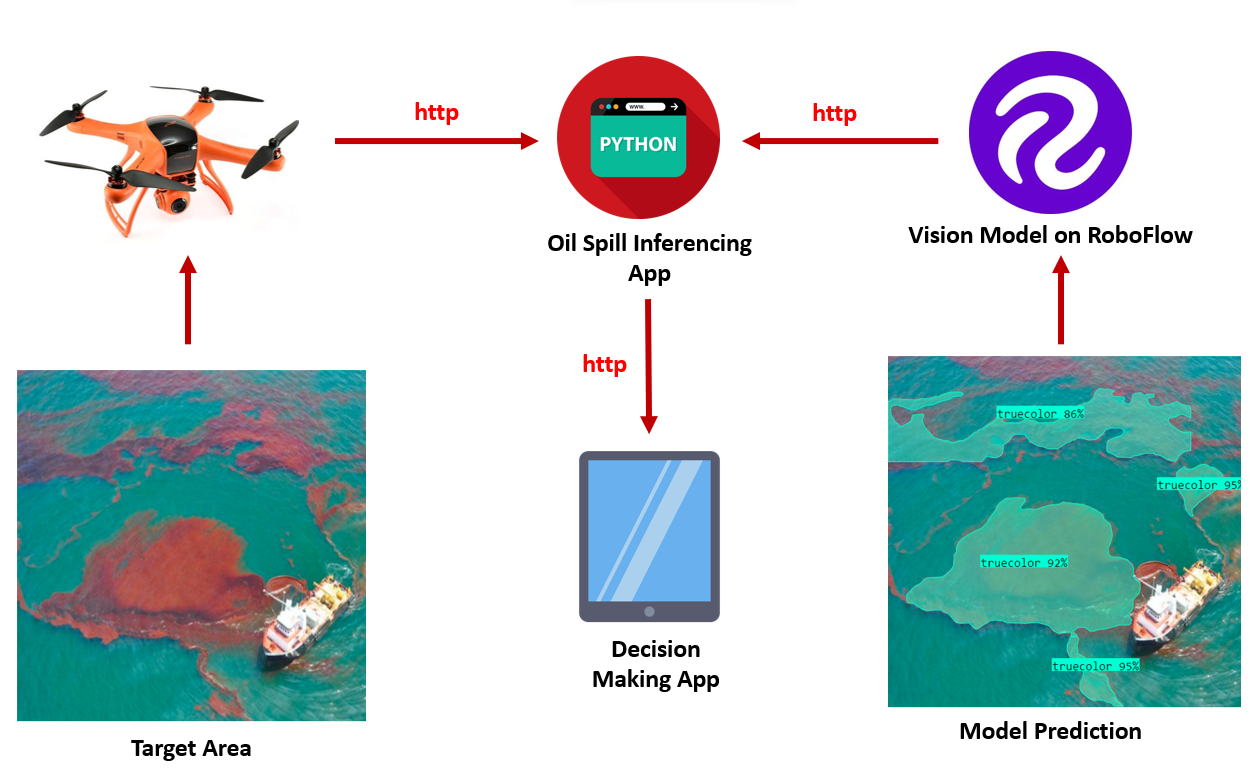

The trained model on Roboflow cloud is accessible through an API, allowing for predictions on received images or video.
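Once a prediction comes back from the API, the application needs to turn it into something it can work with. The sketch below groups predicted polygons by class and drops low-confidence results. It assumes the response is a JSON object with a `predictions` list whose entries carry `class`, `confidence`, and a `points` list of `x`/`y` coordinates, which is the shape Roboflow's hosted API typically returns for instance segmentation. The sample response is hand-written for illustration, and the helper name and the 0.4 threshold are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Polygon = List[Tuple[float, float]]


def polygons_by_class(response: dict, min_confidence: float = 0.4) -> Dict[str, List[Polygon]]:
    """Group predicted polygons by class label, skipping weak predictions."""
    grouped = defaultdict(list)
    for pred in response.get("predictions", []):
        if pred.get("confidence", 0.0) < min_confidence:
            continue
        polygon = [(p["x"], p["y"]) for p in pred.get("points", [])]
        if len(polygon) >= 3:  # fewer than 3 points cannot enclose an area
            grouped[pred["class"]].append(polygon)
    return dict(grouped)


# Hand-written sample in the assumed response shape (not real model output)
sample_response = {
    "predictions": [
        {"class": "Rainbow", "confidence": 0.91,
         "points": [{"x": 10, "y": 10}, {"x": 60, "y": 10}, {"x": 60, "y": 40}]},
        {"class": "Sheen", "confidence": 0.22,
         "points": [{"x": 5, "y": 5}, {"x": 9, "y": 5}, {"x": 9, "y": 9}]},
        {"class": "Rainbow", "confidence": 0.75,
         "points": [{"x": 80, "y": 20}, {"x": 120, "y": 20}, {"x": 100, "y": 50}]},
    ]
}
result = polygons_by_class(sample_response)
print({label: len(polys) for label, polys in result.items()})  # prints {'Rainbow': 2}
```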

For an oil spill detection system, the architecture can include a drone equipped with a camera and a GSM module that inspects sea areas and captures images of the target area. These images are then sent to the deployed model, which predicts the presence and extent of oil spills.

The resulting predictions can then inform decision-making processes regarding the appropriate actions to remove the oil spill.

The decision-making app receives the predicted results and applies predefined criteria or algorithms to assess the severity and extent of the oil spill. Based on this information, decisions can be made regarding the appropriate course of action to remove or mitigate the oil spill.
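The "predefined criteria" could be as simple as a few rules over the area and the dominant class. The sketch below is one hypothetical way to express them. The `SpillSummary` type, the 10,000 m² threshold, and the mapping from conditions to actions are all assumptions for illustration, not criteria from the original project or from any response guideline.

```python
from dataclasses import dataclass


@dataclass
class SpillSummary:
    area_m2: float        # total predicted oil-covered area
    dominant_class: str   # "Sheen", "Rainbow", or "True Color"


def recommend_action(spill: SpillSummary, large_area_m2: float = 10_000.0) -> str:
    """Map a spill summary to a suggested next step (illustrative rules only)."""
    if spill.area_m2 <= 0:
        return "no action"
    if spill.dominant_class == "True Color":
        # Thickest appearance class: treat as the most urgent case
        return "deploy cleaning crew"
    if spill.area_m2 >= large_area_m2:
        return "initiate containment measures"
    return "schedule further assessment"


print(recommend_action(SpillSummary(area_m2=2_500, dominant_class="Sheen")))
# prints "schedule further assessment"
```

Real criteria would come from response experts and would likely also account for weather, distance to shore, and oil type.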

These decisions may involve deploying cleaning crews, initiating containment measures, or implementing further assessments. By integrating the drone, oil spill inferencing app, and the decision-making app, the system provides a comprehensive solution for efficient oil spill detection and response.

Example output of oil spill detection system

The output video file was generated using the code repository here, which shows detection of oil spills for the 'True Color' class using Ultralytics YOLOv8. Follow this tutorial to learn how to train a YOLOv8 Instance Segmentation model with a custom dataset.

Conclusion

This project focuses on developing a system for detecting and segmenting oil spills in drone footage using instance segmentation. The computer-vision-based system helps identify and delineate oil spill regions accurately so that responders can select efficient cleanup tactics. This approach enables real-time monitoring and assessment of oil spills, supporting prompt decision-making and effective response strategies.

Cite this Post

Use the following entry to cite this post in your research:

Timothy M. (Jul 25, 2023). Detect and Segment Oil Spills Using Computer Vision. Roboflow Blog: https://blog.roboflow.com/detect-analyze-oil-spills-computer-vision/